As a kid, I really enjoyed chess, as did my dad. Naturally, I wanted to play him. The problem was that my dad was extremely good. He was playing local tournaments and could play blindfolded, while I was, well, a child. In a purely skill based game like chess, an extreme skill imbalance means that the more skilled player essentially always wins, and in chess, it ends up being a slaughter that is no fun for either player. Not many kids have the patience to lose dozens of games in a row and never even get close to victory.

This is a common problem in chess, with a well established solution: It’s called “odds”. When two players with very different skill levels want to play each other, the stronger player will start off with some pieces missing from their side of the board. “Odds of a queen”, for example, refers to taking the queen of the stronger player off the board. When I played “odds of a queen” against my dad, the games were fun again, as I had a chance of victory and he could play as normal without acting intentionally dumb. The resource imbalance of the missing queen made the difference. I still lost a bunch though, because I blundered pieces.

Now I am a fully blown adult with a PhD, I’m a lot better at chess than I was a kid. I’m better than most of my friends that play, but I never reached my dad’s level of chess obsession. I never bothered to learn any openings in real detail, or do studies on complex endgames. I mainly just play online blitz and rapid games for fun. My rating on lichess blitz is 1200, on rapid is 1600, which some calculator online said would place me at ~1100 ELO on the FIDE scale.

In comparison, a chess master is ~2200, a grandmaster is ~2700. The top chess player Magnus Carlsen is at an incredible 2853. ELO ratings can be used to estimate the chance of victory in a matchup, although the estimates are somewhat crude for very large skill differences. Under this calculation, the chance of me beating a 2200 player is 1 in 500, while the chance of me beating Magnus Carlsen would be 1 in 24000. Although realistically, the real odds would be less about the ELO and more on whether he was drunk while playing me.

Stockfish 14 has an estimated ELO of 3549. In chess, AI is already superhuman, and has long since blasted past the best players in the world. When human players train, they use the supercomputers as standards. If you ask for a game analysis on a site like chess.com or lichess, it will compare your moves to stockfish and score you by how close you are to what stockfish would do. If I played stockfish, the estimated chance of victory would be 1 in 1.3 million. In practice, it would be probably be much lower, roughly equivalent to the odds that there is a bug in the stockfish code that I managed to stumble upon by chance.

Now that we have all the setup, we can ask the main question of this article:

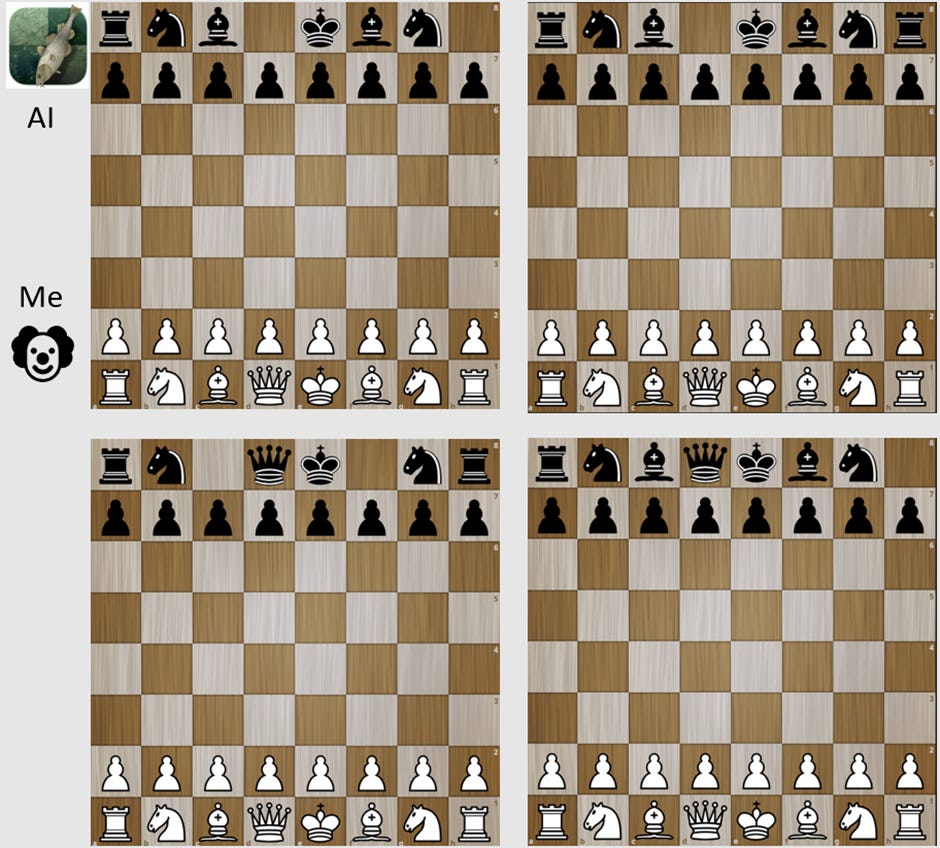

What “odds” do I need to beat stockfish 14[1] in a game of chess? Obviously I can win if the AI only has a king and 3 pawns. But can I win if stockfish is only down a rook? Two bishops? A queen? A queen and a rook? More than that? I encourage you to pause and make a guess. And if you can play chess, I encourage you to guess as to what it would take for you to beat stockfish. For further homework, you can try and guess the odds of victory for each game in the picture below.

The first game I played against stockfish was with queen odds.

I won on the first try. And the second, and the third. It wasn’t even that hard. I played 10 games and only lost 1 (when I blundered my queen stupidly).

The strategy is simple. First, play it safe and try not to make any extreme blunders. Don’t leave pieces unprotected, check for forks and pins, don’t try any crazy tactics. Secondly, take every opportunity to trade pieces. Initially, the opponent has 30 points of material, and you have 39, meaning you have 30% more material than them. If you manage to trade all your bishops and knights away, stockfish would have 18 points and you would have 27, a 50% advantage. It also makes the game much simpler and straightforward, as there are far less nasty tactics available when the computer only has two rooks available.

Don’t get me wrong, the computer managed to trick me plenty of times and get pieces trapped. Sometimes I would blunder several pawns or a whole piece. But you need to use pieces to trap pieces, and the computer never had the resources to claw away at me before I traded everything away and crushed it with my extra queen.

Since that was easy, I tried odds of two bishops. I lost the first game, then won the second. Lost the third, won the fourth. Same strategy as the queens, but it was noticeably more difficult. I would often make a small error early on, which would then snowball out to take me down.

Getting cocky, I played with odds of a rook (ostensibly only 1 point of material less than two bishops). I immediately got trounced. I lost the first game, and proceeded to lose like 20 games in a row before I finally managed to eke out a draw.

The problem with rook odds is that the rook is locked away in the corner of the board, and usually is most useful at the end of the game when it has free reign of the board. That means that in the opening of the game, I’m functionally playing stockfish as if I have equal material. And stockfish, with equal material, is a fucking nightmare. It can put it’s full force to bear, poke any weaknesses, render your pieces trapped and useless, and chip away at your lead slowly but surely. By the time I could trade pieces down and get my extra rook in play, the AI had usually chipped away enough at my lead that I was only a little bit up in material. And a little bit up is not enough. Here is an example position:

It looks like I’m completely winning here. I have an extra pawn, and a rook instead of a knight, which is an ostensible +3 material. I even spot the trap laid by stockfish: If I move my rook one up or one down, the knight can jump to e2, forking my king and rook and ensuring a rook for knight trade that would destroy my lead. Thinking I was smart, I put my rook on c4. Big mistake. The AI gave a knight check on h3, driving the king to f1, and then it forked my rook and king with his bishop. Even if I moved my rook to c5, black would have been able to lock it into place by moving the b pawn to b6 and moving the knight to d3, rendering the rook effectively useless. Only moving the rook to b2 would have saved my advantage. If the analysis here was obvious to you, there’s a good chance you can beat stockfish with rook odds.

It took me something like 20 games to draw against stockfish, and a further 30 before I finally actually won. In the successful game, I got lucky with an opening that let me trade most pieces equally, and then slowly forced a knight vs knight endgame where I was up two pawns. This might actually be a case where a chess GM would outperform an AI: they can think psychologically, so they can deliberately pick traps and positions that they know I would have difficulty with.

Analysis of my tradeoff of material and ELO:

Here I’ll summarize the results of my little experiment. Remember, initially I had an ELO of ~1100 and a nominal odds of beating stockfish of roughly 1 in a million (but probably less).

Odds of rook:

Material advantage: 14%

Win rate: 2%

Odds of victory boost: 4 orders of magnitude or more

Equivalent ELO: ~2750

Odds of two bishops:

Material advantage: 18%

Win rate: ~50%

Odds of victory boost: 6 orders of magnitude or more

Equivalent ELO: ~3549

Odds of queen:

Material advantage: 30%

Win rate: 90%

Odds of victory boost: 7 orders of magnitude or more

Equivalent ELO: ~3900

I tried a few games with odds of a knight, and got hopelessly crushed every time. However, looking online, I did find that a GM achieved an 80% win rate in a knight-odds game against the Komodo chess engine.

It’s worth pointing out that handicaps become more powerful the better you are at chess. Quoting GM Larry Kaufman on this subject:

The Elo equivalent of a given handicap degrades as you go down the scale. A knight seems to be worth around a thousand points when the “weak” player is around IM level, but it drops as you go down. For example, I’m about 2400 and I’ve played tons of knight odds games with students, and I would put the break-even point (for untimed but reasonably quick games) with me at around 1800, so maybe a 600 value at this level. An 1800 can probably give knight odds to a 1400, a 1400 to an 1100, an 1100 to a 900, etc. This is pretty obviously the way it must work, because the weaker the players are, the more likely the weaker one is to blunder a piece or more. When you get down to the level of the average 8 year old player, knight odds is just a slight edge, maybe 50 points or so.

This is why my dad could beat me as a kid with queen odds, but stockfish can’t beat me now. You need sufficient knowledge of how to game works to utilize your resource advantages properly.

Can brawn beat an AGI?

Robert Miles compared humanity fighting an AGI to an amateur at chess trying to beat a grandmaster. His argument was that delving into the details of such a fight was pointless, because “you just cannot expect to win against a superior opponent”.

The problem here is that I, an amateur, can beat a GM. I can beat Stockfish. All I need is an extra queen.

This is not a trick point. If a rogue AI is discovered early, we could end up in a war where the AGI has a huge intelligence advantage, but humans have a huge resource advantage.

In the view of Miles and others, the initially gargantuan resource imbalance between the AI and humanity doesn’t matter, because the AGI is so super-duper smart, it will be able to come up with the “perfect” plan to overcome any resource imbalance, like a GM playing against a little kid that doesn’t understand the rules very well.

The problem with this argument is that you can use the exact same reasoning to imply that’s it’s “obvious” that Stockfish could reliably beat me with queen odds. But we know now that that’s not true. There will always be a level of resource imbalance where the task at hand is just too damn difficult, no matter how high the intelligence. Consider also the implication that a less intelligent, but more controllable AI that we cooperate with might be able to triumph over a much more intelligent rogue AI.

Of course, this little experiment tells us very little about what the equivalent of a “queen advantage” would be in a battle with an AGI. It would definitely need to be far more than literally 30% more people, as we know plenty of examples of human generals winning battles despite being vastly outnumbered. Unlike chess, the real world has secret information, way more possible strategies, the potential for technological advancements, defections and betrayal, etc. which all favor the more intelligent party. On the other hand, the potential resource imbalance could be ridiculously high, particularly if a rogue AI is caught early on it’s plot, with all the worlds militaries combined against them while they still have to rely on humans for electricity and physical computing servers. It’s somewhat hard to outthink a missile headed for your server farm at 800 km/h.

I intend to write a lot more on the potential “brains vs brawns” matchup of humans vs AGI. It’s a topic that has received surprisingly little depth from AI theorists. I hope this little experiment at least explains why I don’t think the victory of brain over brawn is “obvious”. Intelligence counts for a lot, but it ain’t everything.

- ^

In order to play stockfish with odds, I went to lichess.org/editor, removed the pieces as necessary, and then clicked “continue from here”, selected “play against computer”, and selected maximum strength computer opponent (level 8). This is full strength stockfish with a depth of 22 moves and calculation time of 1000 ms. I also tested with the higher depth and calculation time of the “analysis board”, and was still able to win easily with queen odds.

The post studies handicapped chess as a domain to study how player capability and starting position affect win probabilities. From the conclusion:

Since this post came out, a chess bot (LeelaQueenOdds) that has been designed to play with fewer pieces has come out. simplegeometry’s comment introduces it well. With queen odds, LQO is way better than Stockfish, which has not been designed for it. Consequentially, the main empirical result of the post is severely undermined. (I wonder how far even LQO is from truly optimal play against humans.)

(This is in addition to—as is pointed out by many commenters—how the whole analogue is stretched at best, given the many critical ways in which chess is different from reality. The post has little argument in favor of the validity of the analogue.)

I don’t think the post has stood the test of time, and vote against including it in the 2023 Review.