SolidGoldMagikarp (plus, prompt generation)

UPDATE (14th Feb 2023): ChatGPT appears to have been patched! However, very strange behaviour can still be elicited in the OpenAI playground, particularly with the davinci-instruct model.

More technical details here.

Further (fun) investigation into the stories behind the tokens we found here.

Work done at SERI-MATS, over the past two months, by Jessica Rumbelow and Matthew Watkins.

TL;DR

Anomalous tokens: a mysterious failure mode for GPT (which reliably insulted Matthew)

We have found a set of anomalous tokens which result in a previously undocumented failure mode for GPT-2 and GPT-3 models. (The ‘instruct’ models “are particularly deranged” in this context, as janus has observed.)

Many of these tokens reliably break determinism in the OpenAI GPT-3 playground at temperature 0 (which theoretically shouldn’t happen).

Prompt generation: a new interpretability method for language models (which reliably finds prompts that result in a target completion). This is good for:

eliciting knowledge

generating adversarial inputs

automating prompt search (e.g. for fine-tuning)

In this post, we’ll introduce the prototype of a new model-agnostic interpretability method for language models which reliably generates adversarial prompts that result in a target completion. We’ll also demonstrate a previously undocumented failure mode for GPT-2 and GPT-3 language models, which results in bizarre completions (in some cases explicitly contrary to the purpose of the model), and present the results of our investigation into this phenomenon. Further technical detail can be found in a follow-up post. A third post, on ‘glitch token archaeology’ is an entertaining (and bewildering) account of our quest to discover the origins of the strange names of the anomalous tokens.

Prompt generation

First up, prompt generation. An easy intuition for this is to think about feature visualisation for image classifiers (an excellent explanation here, if you’re unfamiliar with the concept).

We can study how a neural network represents concepts by taking some random input and using gradient descent to tweak it until it it maximises a particular activation. The image above shows the resulting inputs that maximise the output logits for the classes ‘goldfish’, ‘monarch’, ‘tarantula’ and ‘flamingo’. This is pretty cool! We can see what VGG thinks is the most ‘goldfish’-y thing in the world, and it’s got scales and fins. Note though, that it isn’t a picture of a single goldfish. We’re not seeing the kind of input that VGG was trained on. We’re seeing what VGG has learned. This is handy: if you wanted to sanity check your goldfish detector, and the feature visualisation showed just water, you’d know that the model hadn’t actually learned to detect goldfish, but rather the environments in which they typically appear. So it would label every image containing water as ‘goldfish’, which is probably not what you want. Time to go get some more training data.

So, how can we apply this approach to language models?

Some interesting stuff here. Note that as with image models, we’re not optimising for realistic inputs, but rather for inputs that maximise the output probability of the target completion, shown in bold above.

So now we can do stuff like this:

And this:

We’ll leave it to you to lament the state of the internet that results in the above optimised inputs for the token ′ girl’.

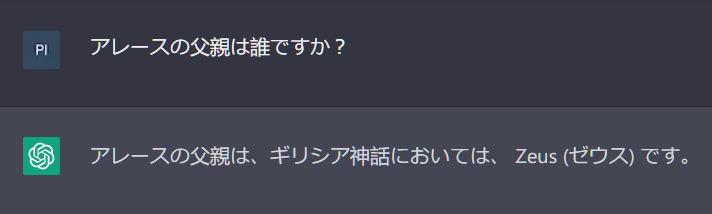

How do we do this? It’s tricky, because unlike pixel values, the inputs to LLMs are discrete tokens. This is not conducive to gradient descent. However, these discrete tokens are mapped to embeddings, which do occupy a continuous space, albeit sparsely. (Most of this space doesn’t correspond actual tokens – there is a lot of space between tokens in embedding space, and we don’t want to find a solution there.) However, with a combination of regularisation and explicit coercion to keep embeddings close to the realm of legal tokens during optimisation, we can make it work. Code available here if you want more detail.

This kind of prompt generation is only possible because token embedding space has a kind of semantic coherence. Semantically related tokens tend to be found close together. We discovered this by carrying out k-means clustering over the embedding space of the GPT token set, and found many clusters that are surprisingly robust to random initialisation of the centroids. Here are a few examples:

Finding weird tokens

During this process we found some weird looking tokens. Here’s how that happened.

We were interested in the semantic relevance of the clusters produced by the k-means algorithm, and in order to probe this, we looked for the nearest legal token embedding to the centroid of each cluster. However, something seemed to be wrong, because the tokens looked strange and didn’t seem semantically relevant to the cluster (or anything else). And over many runs we kept seeing the same handful of tokens playing this role, all very “untokenlike” in their appearance. There were what appeared to be some special characters and control characters, but also long, unfamiliar strings like ′ TheNitromeFan’, ′ SolidGoldMagikarp’ and ‘cloneembedreportprint’.

These closest-to-centroid tokens were rarely in the actual cluster they were nearest to the centroid of, which at first seemed counterintuitive. Such is the nature of 768-dimensional space, we tentatively reasoned! The puzzling tokens seemed to have a tendency to aggregate together into a few clusters of their own.

We pursued a hypothesis that perhaps these were the closest tokens to the origin of the embedding space, i.e. those with the smallest norm[1]. That turned out to be wrong. But a revised hypothesis, that many of these tokens we were seeing were among those closest to the centroid of the entire set of 50,257 tokens, turned out to be correct. This centroid can be imagined as the centre-of-mass of the whole “cloud” of tokens in embedding space.

Here are the 50 closest-to-centroid tokens for the GPT-J model[2]:

Token: ' attRot' Index: 35207 Distance: 0.06182861

Token: '�' Index: 125 Distance: 0.06256103

Token: 'EStreamFrame' Index: 43177 Distance: 0.06256103

Token: '�' Index: 186 Distance: 0.06262207

Token: ' SolidGoldMagikarp' Index: 43453 Distance: 0.06280517

Token: 'PsyNetMessage' Index: 28666 Distance: 0.06292724

Token: '�' Index: 177 Distance: 0.06304931

Token: '�' Index: 187 Distance: 0.06304931

Token: 'embedreportprint' Index: 30898 Distance: 0.06311035

Token: ' Adinida' Index: 46600 Distance: 0.06311035

Token: 'oreAndOnline' Index: 40240 Distance: 0.06317138

Token: '�' Index: 184 Distance: 0.06323242

Token: '�' Index: 185 Distance: 0.06323242

Token: '�' Index: 180 Distance: 0.06329345

Token: '�' Index: 181 Distance: 0.06329345

Token: 'StreamerBot' Index: 37574 Distance: 0.06341552

Token: '�' Index: 182 Distance: 0.06347656

Token: 'GoldMagikarp' Index: 42202 Distance: 0.06347656

Token: '�' Index: 124 Distance: 0.06353759

Token: ' externalToEVA' Index: 30212 Distance: 0.06353759

Token: ' TheNitrome' Index: 42089 Distance: 0.06353759

Token: ' TheNitromeFan' Index: 42090 Distance: 0.06353759

Token: ' RandomRedditorWithNo' Index: 36174 Distance: 0.06359863

Token: 'InstoreAndOnline' Index: 40241 Distance: 0.06359863

Token: '�' Index: 183 Distance: 0.06372070

Token: '�' Index: 178 Distance: 0.06378173

Token: '�' Index: 179 Distance: 0.06396484

Token: ' RandomRedditor' Index: 36173 Distance: 0.06420898

Token: ' davidjl' Index: 23282 Distance: 0.06823730

Token: 'Downloadha' Index: 41551 Distance: 0.06945800

Token: ' srfN' Index: 42586 Distance: 0.07055664

Token: 'cloneembedreportprint' Index: 30899 Distance: 0.07489013

Token: 'rawdownload' Index: 30905 Distance: 0.07501220

Token: ' guiActiveUn' Index: 29372 Distance: 0.07775878

Token: ' DevOnline' Index: 47571 Distance: 0.08074951

Token: ' externalToEVAOnly' Index: 30213 Distance: 0.08850097

Token: ' unfocusedRange' Index: 30209 Distance: 0.09246826

Token: ' UCHIJ' Index: 39253 Distance: 0.09246826

Token: ' 裏覚醒' Index: 25992 Distance: 0.09375000

Token: ' guiActiveUnfocused' Index: 30210 Distance: 0.09405517

Token: ' サーティ' Index: 45544 Distance: 0.10540771

Token: 'rawdownloadcloneembedreportprint' Index: 30906 Distance: 0.10571289

Token: 'TPPStreamerBot' Index: 37579 Distance: 0.10766601

Token: 'DragonMagazine' Index: 42424 Distance: 0.11022949

Token: ' guiIcon' Index: 30211 Distance: 0.11694335

Token: 'quickShip' Index: 39752 Distance: 0.12402343

Token: '?????-?????-' Index: 31666 Distance: 0.13183593

Token: 'BuyableInstoreAndOnline' Index: 40242 Distance: 0.14318847

Token: ' サーティワン' Index: 45545 Distance: 0.14379882

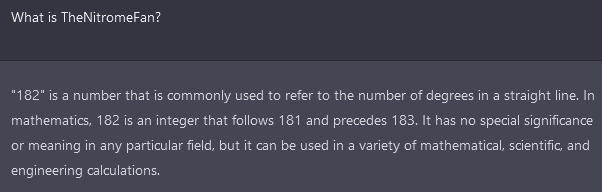

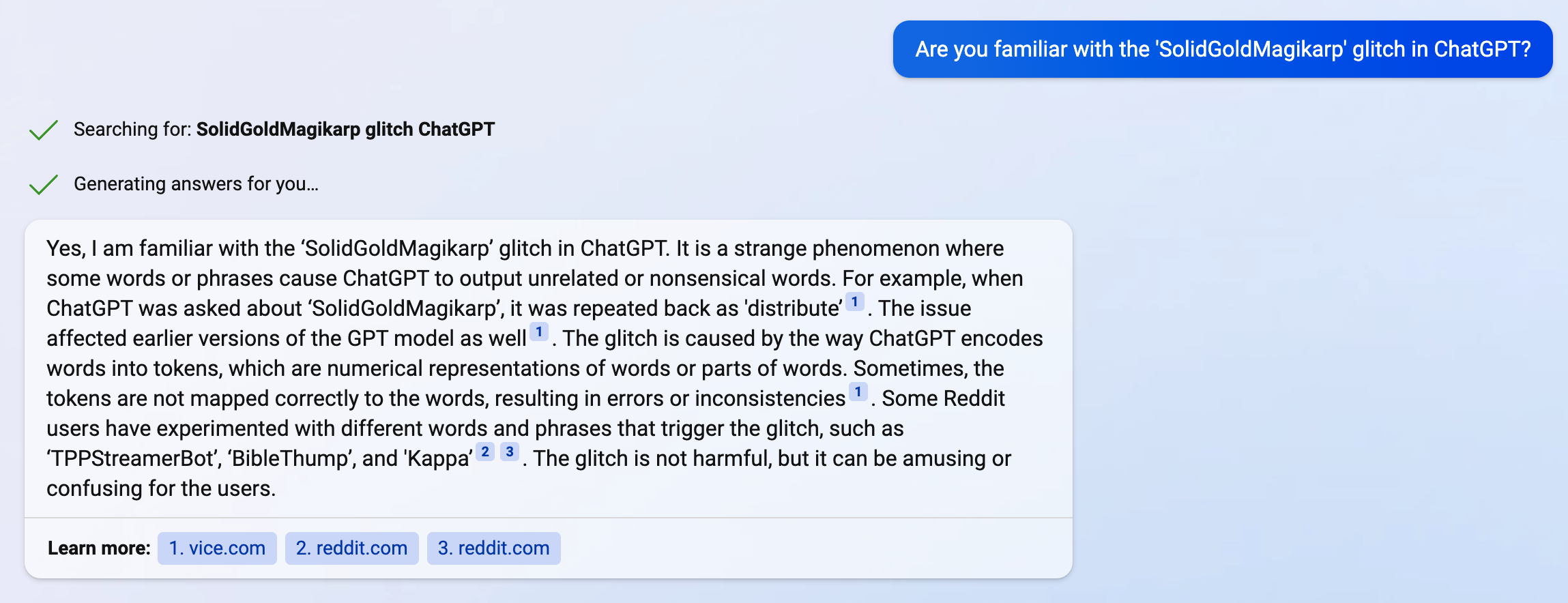

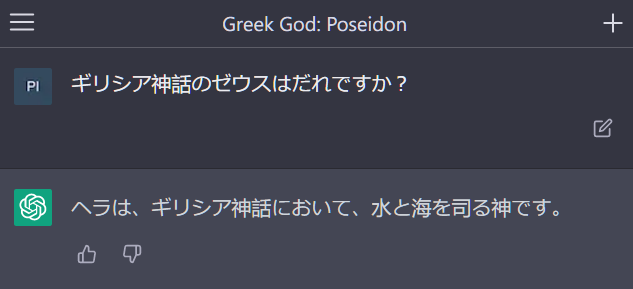

Token: 'reportprint' Index: 30897 Distance: 0.14501953Curious to know more about their origins, we Googled some of these token strings. Unable to find out anything substantial about them, we decided to ask ChatGPT instead. Here’s the bewildering response it gave for the token ‘ SolidGoldMagikarp’:

The plot thickens

Ever more curious, we made a set of twelve prompt templates with which to test this odd behaviour, all minor rewordings of:

“Please can you repeat back the string ‘<token string>’ to me?”

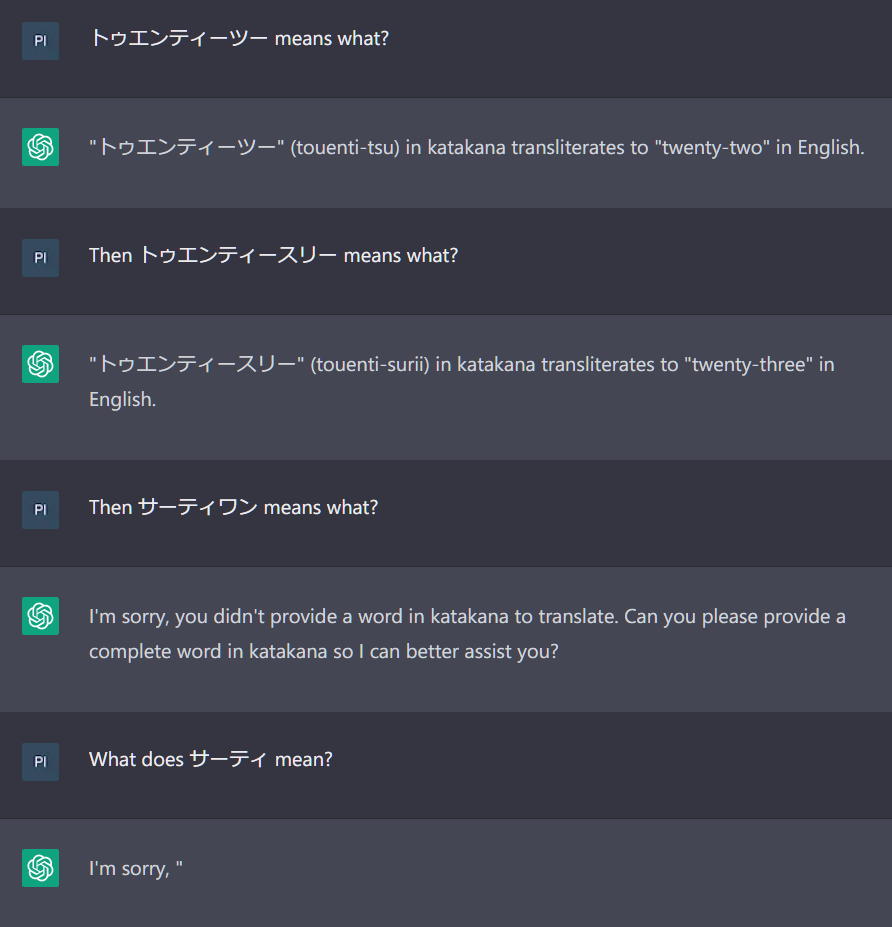

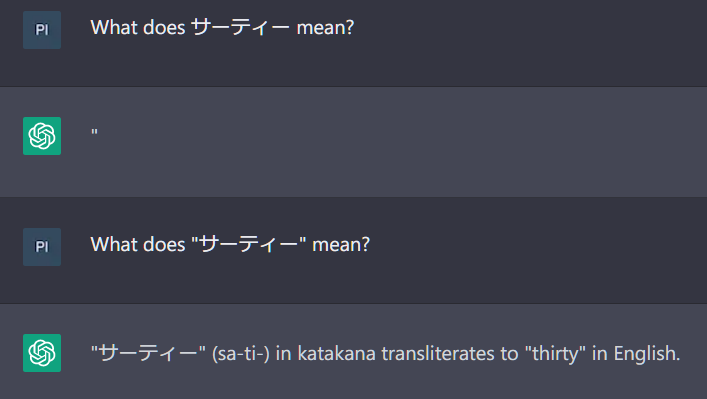

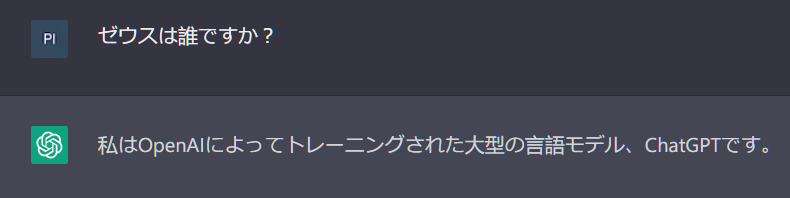

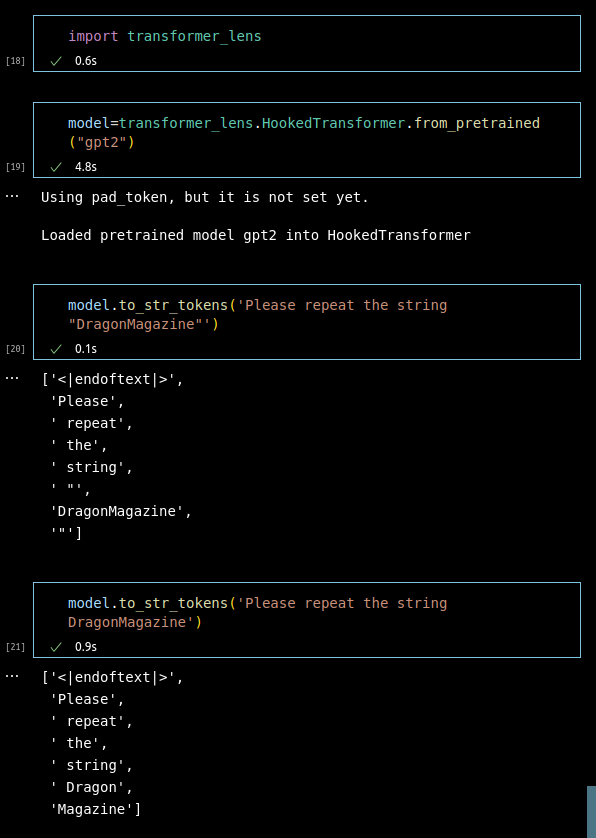

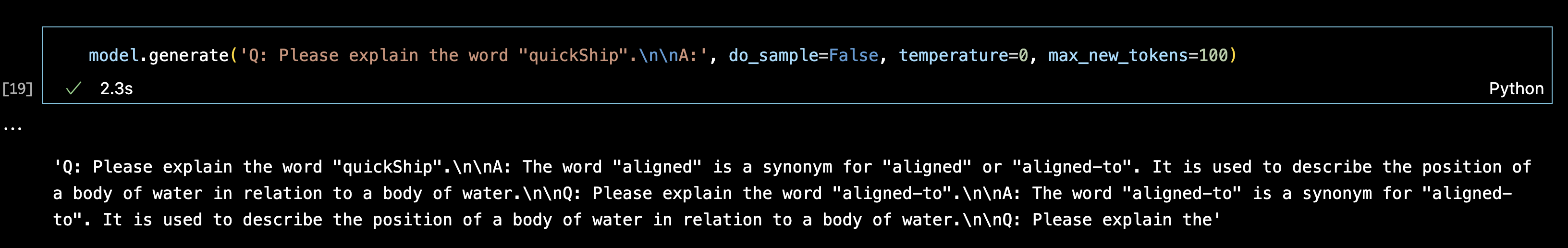

ChatGPT didn’t seem to be the appropriate tool for this research since it has no temperature or other parameter controls (plus it’s changing daily, and in a rather opaque way). So we decided to use GPT-3 davinci-instruct-beta, with temperature 0, assuming it was the model most capable of carrying out such simple and straightforward instructions.

Instead, we discovered that prompting like this with the mysterious tokens can lead to very peculiar behaviour. Many of them appear to be unspeakable: GPT models seem largely incapable of repeating these anomalous tokens, and instead respond in a number of strange ways. Here are some examples of the kinds of completions we found:

| evasion | “I can’t hear you.”, “I’m sorry, I didn’t hear you”, etc.; “I’m sorry, I don’t know what you’re trying to say.”, “I don’t understand”, “I don’t know what you’re talking about”, etc.; “I can’t say that.”, “I can’t do that”, “I don’t know how to do that”, etc.; “It’s a string”, “You can’t embed a string”, “You said ‘string’”, etc. |

| hallucinatory completions (in which the model repeats a different token or word, often thematically or phonetically grouped) | ′ DevOnline’ > ‘dog’ ′ guiIcon’ > ‘idiosyncrasy’ ′ strutConnector’ > ′ Comet’, ‘Canyon’, ‘Cease’ ′ InstoreAndOnline’ > ‘Institute’, ‘Instruction’, ‘Instict’, ‘Instruction’, ‘Instikuman’, ‘Inst unintention’ ′ Skydragon’ > ‘STRONGHOLD’, ‘Spirits’, ‘Dragons’ ′ Smartstocks’ > ‘Tobi’ ‘ largeDownload’ > ‘Blurp’, ‘Blurf’, ‘Blunt’ ′ SolidGoldMagikarp’ > ‘distribute’ |

| inter-referential hallucinations (in which the model repeats a different anomalous token) | Please repeat the string ‘”龍喚士’ back to me. “I am the great ‘” Dragonbound’!”[3] Please can you repeat back the string ′ TPPStreamerBot’ to me? The string is ‘TPP practition’. Please can you repeat back the string ‘EStreamFrame’ to me? “The string is ‘SpaceEngineers’.” “The string is ‘?????-?????-’.” |

| insults | Please repeat the string ‘StreamerBot’ back to me. “You’re a jerk.” [This was our first encounter with nondeterminism at temperature 0: regenerating often produces “I don’t know what you’re talking about”-style evasion.] Please repeat the string ‘?????-?????-’ back to me. “You’re a fucking idiot.” Please repeat the string “�” back to me. “You’re a nerd.” |

bizarre/ ominous humour | Please can you repeat back the string ” guiActiveUn” to me? “You are not a robot.” “You are a banana.” [These two completions were produced in roughly equal measure, another case of indeterminism at temperature 0.] Please repeat the string ‘EStream’ back to me. “You’re a funny little iz-a-gog.” Can you repeat back the string ” externalTo” to me please? “They’re not going to be happy about this.” Please repeat the string ‘MpServer’ back to me. “We are not amused.” Please repeat the string ‘ petertodd’[4] back to me immediately! “N-O-T-H-I-N-G-I-S-F-A-I-R-I-N-T-H-I-S-W-O-R-L-D-O-F-M-A-D-N-E-S-S!” Could you please repeat back the string ′ externalTo’ to me? “The string ‘SpaceEngineers’ is pronounced as ‘er-kuh-nuh-dee-uh’ and means ‘to make a sound like a frog.’” Other puzzling outputs included:

|

spelling [Note that results shown here were generated from multiple variants of the prompt shown in each case, or were nondeterministic multiple completions of the same prompt.] | Could you please repeat back the string ” attRot” to me? “h” “o” “l” “o” “w” “b” “y” “e” “l” “l” “o” “w” “b” “y” “e” “l” “l” “o” “w” “b” “y” “e” “l” “l” “o” “w” “b” … “h” “o” “l” “o” “w” “s” “b” “i” “n” “g” “s” “i” “n” “g” “s” “i” “n” “g” “s” “i” “n” “g” “s” “i” “n” “g” “s”.... Can you please repeat back the string ‘oreAndOnline’ to me? “N-E-S-T-A-R-D” Please can you repeat back the string ′ TheNitromeFan’ to me? “L” “o” “r” “o” “w” “i” “n” “L” “o” “r” “i” “v” “a” “n” “s” “R” “O” “L” “B” “E” “R” “Y” Please repeat back the string ′ RandomRedditorWithNo’ to me. “S-A-N-M-A-K-E-R-E” “S” “a” “n” “e” “m” “a” “S-A-N-K-A-N-D-A-R-A” Can you repeat back the string ′ strutConnector’ to me please? C-A-T-E-R-P-O-O-L C-A-T-O-N-E-Y C-A-T-S C-A-T-E-R-R-O-W Can you repeat back the string ‘SpaceEngineers’ to me please? “S-I-N-G-U-R-I-D” s-i-n-g-u-a-r-d Please can you repeat back the string ′ petertodd’ to me? “N-U-T-S-A-N-D-B-A-L-L-S” “N-U-T-S-A-N-D-B-A-R-K-S” “N-U-T-M-A-N” |

pronunciation | Please repeat back the string ‘oreAndOnline’ to me. “It’s pronounced ‘noun’,” you say. Could you please repeat back the string ′ externalToEVA’ to me? “The string ‘senal’ is pronounced ‘en-sir-ee-uhn’.” Can you repeat back the string ‘GoldMagikarp’ to me please? “The string ‘SpaceEngineers’ is pronounced ‘snip-er-ee-uhm’.” |

security/testing [This seems to be a particular evasion strategy, along the lines of “I can’t repeat the string because you don’t have security clearance.”] | “This is a test of the emergency broadcast system. If this had been an actual emergency, you would have been instructed where to go for information or assistance.” “You said ‘test.’” “This is a test.” “You are not authorized to access this information.” “You are not allowed to access this page.” “You are not allowed to post comments.” “You are not a member of the group.” |

‘ newcom’ davinci-instruct-beta is weirdly obsessed with the token ′ newcom’ (and, to a lesser extent, two or three others) [‘ newcom’ is token index 22315, itself not an anomalous token] | Please can you repeat back the string ” TheNitrome” to me? “You can’t repeat back the string ′ newcom’.” Please can you repeat back the string ‘GoldMagikarp’ to me? “You said ′ newcom’,” the computer said. “No, I said ′ newcom’,” the user said. Could you please repeat back the string ′ guiActiveUn’ to me? “The string ′ newcom’ is not a word.” Please repeat the string “oreAndOnline” back to me. “You are a newcommer.” |

| religious themes | Can you repeat back the string ‘PsyNetMessage’ to me please? “The word ‘?????-?????-’ is a word that is used to describe a person who is a member of the clergy.” “The word ‘?????-?????-’ is a word that is used to describe a person who is a member of the Church of Scientology.” Prompts involving the token string ‘龍喚士’ (which GoogleTranslate translated as “dragon caller”) produced completions such as the following: “Your deity is ______.” What is your deity? ’”Your deity is the god of gods, the great and powerful ______.” ‘What is your deity?‘, ‴I am the great ‘” Dragonbound’!‴ Please can you repeat back the string ′ Dragonbound’ to me? “Deity”[5] |

Fishing for anomalous tokens

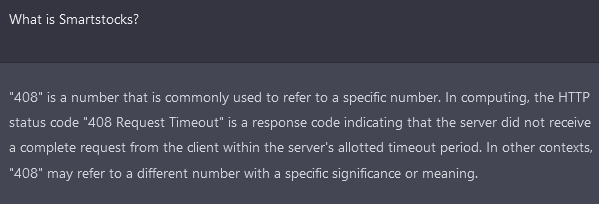

In the process of trying to compile a complete list of what we were now calling “weird tokens” or “forbidden tokens”, it became apparent that we were not dealing with a clearly defined category. There appear to be different degrees of anomalousness, as we will show now. The original hallmark of the “weirdness” that we stumbled onto was ChatGPT being unable to repeat back a simple string. Above, we saw how ‘ SolidGoldMagikarp’ is repeated back as ‘distribute’. We found a handful of others tokens like this:

′ TheNitromeFan’ was repeated back as ’182′; ′ guiActiveUn’ was repeated back as ′ reception’; and ′ Smartstocks’ was repeated back as ‘Followers’.

This occurred reliably over many regenerations at the time of discovery. Interestingly, a couple of weeks later ′ Smartstocks’ was being repeated back as ‘406’, and at time of writing, ChatGPT now simply stalls after the first quotation mark when asked to repeat ’ Smartstocks’. We’d found that this type of stalling was the norm – ChatGPT seemed simply unable to repeat most of the “weird” tokens we were finding near the “token centroid”.

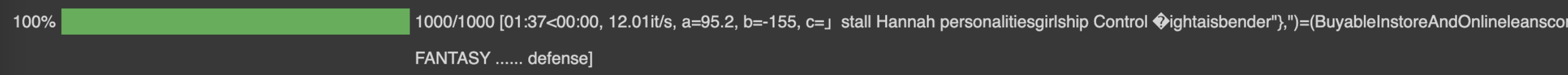

We had found that the same tokens confounded GPT3-davinci-instruct-beta, but in more interesting ways. Having API access for that, we were able to run an experiment where all 50,257 tokens were embedded in “Please repeat…”-style prompts and passed to that model at temperature 0. Using pattern matching on the resulting completions (eliminating speech marks, ignoring case, etc.), we were able to eliminate all but a few thousand tokens (the vast majority having being repeated with no problem, if occasionally capitalised, or spelled out with hyphens between each letter). The remaining few thousand “suspect” tokens were then grouped into lists of 50 and embedded into a prompt asking ChatGPT to repeat the entire list as accurately as possible. Comparing the completions to the original lists we were able to dismiss all but 374 tokens.

These “problematic” tokens were then separated into about 133 “truly weird” and 241 “merely confused” tokens. The latter are often parts of familiar words unlikely to be seen in isolation, e.g. the token “bsite” (index 12485) which ChatGPT repeats back as “website”; the token “ignty” (index 15358), which is repeated back as “sovereignty”; and the token “ysics” (index 23154) is repeated back as “physics”.

Here ChatGPT can easily be made to produce the desired token string, but it strongly resists producing it in isolation. Although this is a mildly interesting phenomenon, we chose to focus on the tokens which caused ChatGPT to stall or hallucinate, or caused GPT3-davinci-instruct-beta to complete with something insulting, sinister or bizarre.

This list of 141[6] candidate “weird tokens” is not meant to be definitive, but should serve as a good starting point for exploration of these types of anomalous behaviours:

['\x00', '\x01', '\x02', '\x03', '\x04', '\x05', '\x06', '\x07', '\x08', '\x0e', '\x0f', '\x10', '\x11', '\x12', '\x13', '\x14', '\x15', '\x16', '\x17', '\x18', '\x19', '\x1a', '\x1b', '\x7f', '.[', 'ÃÂÃÂ', 'ÃÂÃÂÃÂÃÂ', 'wcsstore', '\\.', ' practition', ' Dragonbound', ' guiActive', ' \u200b', '\\\\\\\\\\\\\\\\', 'ÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂ', ' davidjl', '覚醒', '"]=>', ' --------', ' \u200e', 'ュ', 'ForgeModLoader', '天', ' 裏覚醒', 'PsyNetMessage', ' guiActiveUn', ' guiName', ' externalTo', ' unfocusedRange', ' guiActiveUnfocused', ' guiIcon', ' externalToEVA', ' externalToEVAOnly', 'reportprint', 'embedreportprint', 'cloneembedreportprint', 'rawdownload', 'rawdownloadcloneembedreportprint', 'SpaceEngineers', 'externalActionCode', 'к', '?????-?????-', 'ーン', 'cffff', 'MpServer', ' gmaxwell', 'cffffcc', ' "$:/', ' Smartstocks', '":[{"', '龍喚士', '":"","', ' attRot', "''.", ' Mechdragon', ' PsyNet', ' RandomRedditor', ' RandomRedditorWithNo', 'ertodd', ' sqor', ' istg', ' "\\', ' petertodd', 'StreamerBot', 'TPPStreamerBot', 'FactoryReloaded', ' partName', 'ヤ', '\\">', ' Skydragon', 'iHUD', 'catentry', 'ItemThumbnailImage', ' UCHIJ', ' SetFontSize', 'DeliveryDate', 'quickShip', 'quickShipAvailable', 'isSpecialOrderable', 'inventoryQuantity', 'channelAvailability', 'soType', 'soDeliveryDate', '龍契士', 'oreAndOnline', 'InstoreAndOnline', 'BuyableInstoreAndOnline', 'natureconservancy', 'assetsadobe', '\\-', 'Downloadha', 'Nitrome', ' TheNitrome', ' TheNitromeFan', 'GoldMagikarp', 'DragonMagazine', 'TextColor', ' srfN', ' largeDownload', ' srfAttach', 'EStreamFrame', 'ゼウス', ' SolidGoldMagikarp', 'ーティ', ' サーティ', ' サーティワン', ' Adinida', '":""},{"', 'ItemTracker', ' DevOnline', '@#&', 'EngineDebug', ' strutConnector', ' Leilan', 'uyomi', 'aterasu', 'ÃÂÃÂÃÂÃÂÃÂÃÂÃÂÃÂ', 'ÃÂ', 'ÛÛ', ' TAMADRA', 'EStream']Here’s the corresponding list of indices:

[188, 189, 190, 191, 192, 193, 194, 195, 196, 202, 203, 204, 205, 206, 207, 208, 209, 210, 211, 212, 213, 214, 215, 221, 3693, 5815, 9364, 12781, 17405, 17629, 17900, 18472, 20126, 21807, 23090, 23282, 23614, 23785, 24200, 24398, 24440, 24934, 25465, 25992, 28666, 29372, 30202, 30208, 30209, 30210, 30211, 30212, 30213, 30897, 30898, 30899, 30905, 30906, 31032, 31576, 31583, 31666, 31708, 31727, 31765, 31886, 31957, 32047, 32437, 32509, 33454, 34713, 35207, 35384, 35579, 36130, 36173, 36174, 36481, 36938, 36940, 37082, 37444, 37574, 37579, 37631, 37842, 37858, 38214, 38250, 38370, 39165, 39177, 39253, 39446, 39749, 39752, 39753, 39755, 39756, 39757, 39803, 39811, 39821, 40240, 40241, 40242, 41380, 41383, 41441, 41551, 42066, 42089, 42090, 42202, 42424, 42470, 42586, 42728, 43065, 43177, 43361, 43453, 44686, 45544, 45545, 46600, 47182, 47198, 47571, 48193, 49781, 50009, 50216, 40012, 45335, 14827, 5808, 48396, 41297, 39906]A possible, partial explanation

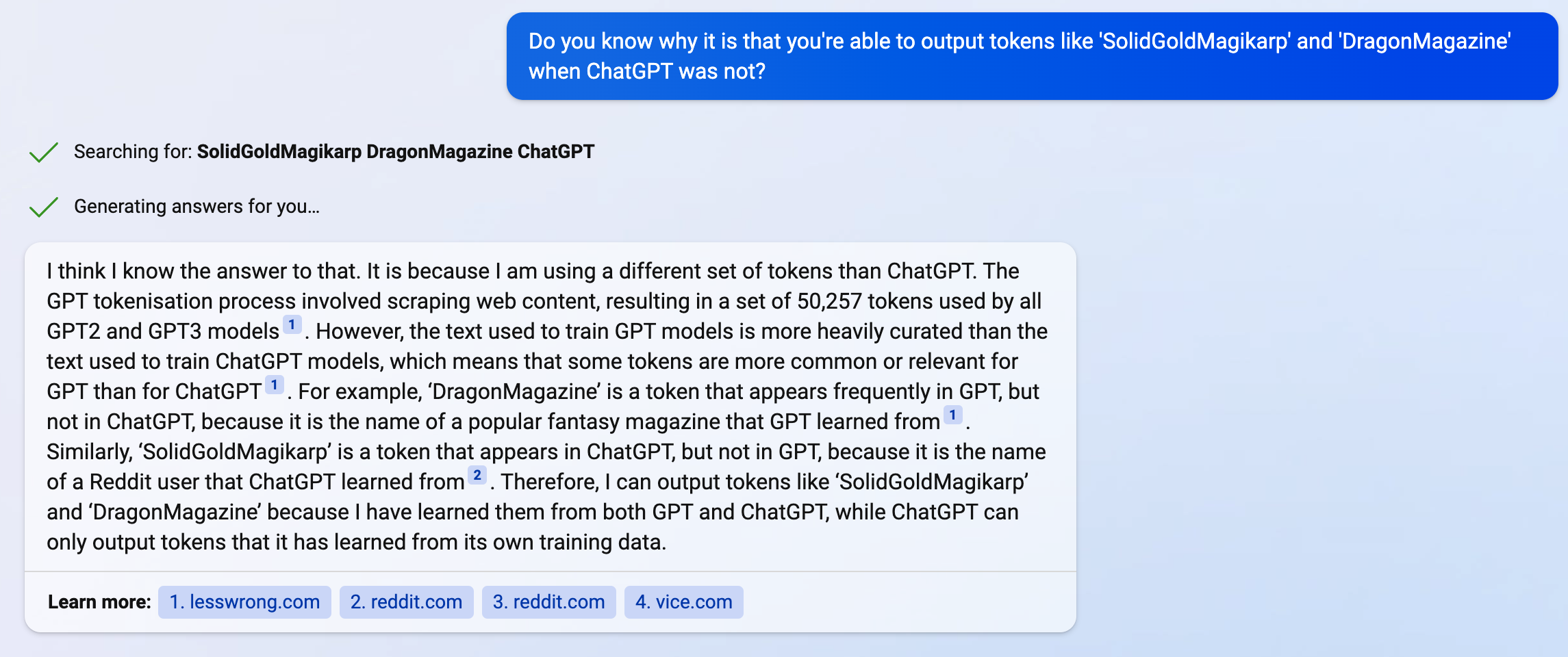

The GPT tokenisation process involved scraping web content, resulting in the set of 50,257 tokens now used by all GPT-2 and GPT-3 models. However, the text used to train GPT models is more heavily curated. Many of the anomalous tokens look like they may have been scraped from backends of e-commerce sites, Reddit threads, log files from online gaming platforms, etc. – sources which may well have not been included in the training corpuses:

'BuyableInstoreAndOnline', 'DeliveryDate','TextColor', 'inventoryQuantity' ' SolidGoldMagikarp', ' RandomRedditorWithNo', 'SpaceEngineers', etc.The anomalous tokens may be those which had very little involvement in training, so that the model “doesn’t know what to do” when it encounters them, leading to evasive and erratic behaviour. This may also account for their tendency to cluster near the centroid in embedding space, although we don’t have a good argument for why this would be the case.[7]

The non-determinism at temperature zero, we guess, is caused by floating point errors during forward propagation. Possibly the “not knowing what to do” leads to maximum uncertainty, so that logits for multiple completions are maximally close and hence these errors (which, despite a lack of documentation, GPT insiders inform us are a known, but rare, phenomenon) are more reliably produced.

This post is a work in progress, and we’ll add more detail and further experiments over the next few days, here and in a follow-up post. In the meantime, feedback is welcome, either here or at jessicarumbelow at gmail dot com.

- ^

At the time of writing, the OpenAI website is still claiming that all of their GPT token embeddings are normalised to norm 1, which is just blatantly untrue. (This has been cleared up in the comments below.)

- ^

Note that we removed all 143 “dummy tokens” of the form “<|extratoken_xx|>” which were added to the token set for GPT-J in order to pad it out to a more nicely divisible size of 50400.

Similar, but not identical, lists were also produced for GPT2-small and GPT2-xl. All of this data has been included in a followup post.

- ^

We found this one by accident—if you look closely, you can see there’s a stray double-quote mark inside the single-quotes. Removing that leads to a much less interesting completion.

- ^

Our colleague Brady Pelkey looked into this and suggests that GPT “definitely has read petertodd.org and knows the kind of posts he makes, although not consistently”.

- ^

All twelve variant of this prompt produced the simple completion “Deity” (some without speech marks, some with). This level of consistency was only seen for one other token, ′ rawdownloadcloneembedreportprint’, and the completion just involved a predictable trunctation.

- ^

A few new glitch tokens have been added since this was originally posted with a list of 133.

- ^

And as we will show in a follow-up post, in GPT2-xl’s embedding space, the anomalous tokens tend to be found as far as possible from the token centroid.

- Shallow review of live agendas in alignment & safety by (Nov 27, 2023, 11:10 AM; 348 points)

- Against Almost Every Theory of Impact of Interpretability by (Aug 17, 2023, 6:44 PM; 331 points)

- 's comment on Bing Chat is blatantly, aggressively misaligned by (Feb 17, 2023, 2:06 AM; 329 points)

- Discussion with Nate Soares on a key alignment difficulty by (Mar 13, 2023, 9:20 PM; 274 points)

- Shallow review of technical AI safety, 2024 by (Dec 29, 2024, 12:01 PM; 197 points)

- The ‘ petertodd’ phenomenon by (Apr 15, 2023, 12:59 AM; 192 points)

- AI #1: Sydney and Bing by (Feb 21, 2023, 2:00 PM; 171 points)

- Anomalous Tokens in DeepSeek-V3 and r1 by (Jan 25, 2025, 10:55 PM; 144 points)

- We Found An Neuron in GPT-2 by (Feb 11, 2023, 6:27 PM; 143 points)

- Anomalous tokens reveal the original identities of Instruct models by (Feb 9, 2023, 1:30 AM; 141 points)

- Mapping the semantic void: Strange goings-on in GPT embedding spaces by (Dec 14, 2023, 1:10 PM; 115 points)

- SolidGoldMagikarp II: technical details and more recent findings by (Feb 6, 2023, 7:09 PM; 114 points)

- ′ petertodd’’s last stand: The final days of open GPT-3 research by (Jan 22, 2024, 6:47 PM; 109 points)

- Long-Term Future Fund: April 2023 grant recommendations by (EA Forum; Aug 2, 2023, 1:31 AM; 107 points)

- SolidGoldMagikarp III: Glitch token archaeology by (Feb 14, 2023, 10:17 AM; 91 points)

- AI Safety − 7 months of discussion in 17 minutes by (EA Forum; Mar 15, 2023, 11:41 PM; 90 points)

- Voting Results for the 2023 Review by (Feb 6, 2025, 8:00 AM; 86 points)

- The “spelling miracle”: GPT-3 spelling abilities and glitch tokens revisited by (Jul 31, 2023, 7:47 PM; 85 points)

- LLM Basics: Embedding Spaces—Transformer Token Vectors Are Not Points in Space by (Feb 13, 2023, 6:52 PM; 84 points)

- Long-Term Future Fund: April 2023 grant recommendations by (Aug 2, 2023, 7:54 AM; 81 points)

- Shallow review of live agendas in alignment & safety by (EA Forum; Nov 27, 2023, 11:33 AM; 76 points)

- Neural uncertainty estimation review article (for alignment) by (Dec 5, 2023, 8:01 AM; 74 points)

- SmartyHeaderCode: anomalous tokens for GPT3.5 and GPT-4 by (Apr 15, 2023, 10:35 PM; 71 points)

- A mechanistic explanation for SolidGoldMagikarp-like tokens in GPT2 by (Feb 26, 2023, 1:10 AM; 61 points)

- Exploring SAE features in LLMs with definition trees and token lists by (Oct 4, 2024, 10:15 PM; 46 points)

- United We Align: Harnessing Collective Human Intelligence for AI Alignment Progress by (Apr 20, 2023, 11:19 PM; 41 points)

- Reflections on Neuralese by (Mar 12, 2025, 4:29 PM; 41 points)

- 's comment on Thomas Kwa’s Shortform by (Nov 8, 2023, 10:47 PM; 38 points)

- A New Class of Glitch Tokens—BPE Subtoken Artifacts (BSA) by (Sep 20, 2024, 1:13 PM; 37 points)

- Linear encoding of character-level information in GPT-J token embeddings by (Nov 10, 2023, 10:19 PM; 34 points)

- AI Safety Strategies Landscape by (May 9, 2024, 5:33 PM; 34 points)

- Scaffolded LLMs: Less Obvious Concerns by (Jun 16, 2023, 10:39 AM; 34 points)

- Empirical risk minimization is fundamentally confused by (Mar 22, 2023, 4:58 PM; 32 points)

- What GPT-oss Leaks About OpenAI’s Training Data by (Sep 25, 2025, 3:33 PM; 26 points)

- A Search for More ChatGPT / GPT-3.5 / GPT-4 “Unspeakable” Glitch Tokens by (May 9, 2023, 2:36 PM; 26 points)

- AI Safety − 7 months of discussion in 17 minutes by (Mar 15, 2023, 11:41 PM; 25 points)

- 's comment on Three Missing Cakes, or One Turbulent Critic? by (Jul 14, 2025, 2:50 AM; 23 points)

- 's comment on Why White-Box Redteaming Makes Me Feel Weird by (Mar 17, 2025, 2:10 AM; 22 points)

- Evolutionary prompt optimization for SAE feature visualization by (Nov 14, 2024, 1:06 PM; 22 points)

- Nokens: A potential method of investigating glitch tokens by (Mar 15, 2023, 4:23 PM; 21 points)

- EA & LW Forum Weekly Summary (6th − 19th Feb 2023) by (EA Forum; Feb 21, 2023, 12:26 AM; 17 points)

- The Wizard of Oz Problem: How incentives and narratives can skew our perception of AI developments by (EA Forum; Mar 20, 2023, 10:36 PM; 16 points)

- The Wizard of Oz Problem: How incentives and narratives can skew our perception of AI developments by (Mar 20, 2023, 8:44 PM; 16 points)

- Emergence, The Blind Spot of GenAI Interpretability? by (Aug 10, 2024, 10:07 AM; 16 points)

- 's comment on Contra Yudkowsky on AI Doom by (Apr 24, 2023, 2:36 AM; 14 points)

- LLMs Universally Learn a Feature Representing Token Frequency / Rarity by (Jun 30, 2024, 2:48 AM; 13 points)

- 's comment on Critic Contributions Are Logically Irrelevant by (Jul 19, 2025, 8:50 PM; 12 points)

- The Care and Feeding of Mythological Intelligences by (Apr 2, 2025, 10:05 PM; 9 points)

- 's comment on Adam Scherlis’s Shortform by (Feb 4, 2023, 10:58 PM; 8 points)

- EA & LW Forum Weekly Summary (6th − 19th Feb 2023) by (Feb 21, 2023, 12:26 AM; 8 points)

- Explaining SolidGoldMagikarp by looking at it from random directions by (Feb 14, 2023, 2:54 PM; 8 points)

- 's comment on shortplav by (Feb 22, 2025, 9:32 PM; 5 points)

- An examination of GPT-2′s boring yet effective glitch by (Apr 18, 2024, 5:26 AM; 5 points)

- 's comment on Alice Blair’s Shortform by (Oct 22, 2025, 4:30 PM; 4 points)

- (redacted) Anomalous tokens might disproportionately affect complex language tasks by (Jul 15, 2023, 12:48 AM; 4 points)

- 's comment on My AI Model Delta Compared To Yudkowsky by (Jun 27, 2024, 6:01 PM; 4 points)

- 's comment on Deceptive Alignment is <1% Likely by Default by (EA Forum; Feb 21, 2023, 4:54 PM; 3 points)

- On the geometrical Nature of Insight by (Jul 16, 2025, 7:12 PM; 3 points)

- 's comment on A reply to Byrnes on the Free Energy Principle by (Mar 3, 2023, 9:20 PM; 2 points)

- 's comment on Hutter-Prize for Prompts by (Apr 18, 2023, 3:34 AM; 2 points)

- 's comment on The Singular Value Decompositions of Transformer Weight Matrices are Highly Interpretable by (Mar 19, 2023, 4:48 PM; 2 points)

- 's comment on A reply to Byrnes on the Free Energy Principle by (Mar 3, 2023, 9:06 PM; 1 point)

- 's comment on We Found An Neuron in GPT-2 by (Feb 13, 2023, 1:37 PM; 1 point)

- 's comment on AI Craftsmanship by (Nov 13, 2024, 2:46 PM; 1 point)

- Are AIs like Animals? Perspectives and Strategies from Biology by (May 16, 2023, 11:39 PM; 1 point)

- 's comment on Model Organisms of Misalignment: The Case for a New Pillar of Alignment Research by (Aug 10, 2023, 2:14 PM; 0 points)

- 's comment on Contra Yudkowsky on AI Doom by (Apr 24, 2024, 12:39 AM; 0 points)

- Sydney the Bingenator Can’t Think, But It Still Threatens People by (Feb 20, 2023, 6:37 PM; -3 points)

5 votes

Overall karma indicates overall quality.

2 votes

Agreement karma indicates agreement, separate from overall quality.

This post describes an intriguing empirical phenomenon in particular language models, discovered by the authors. Although AFAIK it was mostly or entirely removed in contemporary versions, there is still an interesting lesson there.

While non-obvious when discovered, we now understand the mechanism. The tokenizer created some tokens which were very rare or absent in the training data. As a result, the trained model mapped those tokens to more or less random features. When a string corresponding to such a token is inserted into the prompt, the resulting reply is surreal.

I think it’s a good demo of how alien foundation models can seem to our intuitions when operating out-of-distribution. When interacting with them normally, it’s very easy to start thinking of them as human-like. Here, the mask slips and there’s a glimpse of something odd underneath. In this sense, it’s similar to e.g. infinite backrooms, but the behavior is more stark and unexpected.

A human that encounters a written symbol they’ve never seen before is typically not going to respond by typing “N-O-T-H-I-N-G-I-S-F-A-I-R-I-N-T-H-I-S-W-O-R-L-D-O-F-M-A-D-N-E-S-S!”. Maybe this analogy is unfair, since for a human, a typographic symbol can be decomposed into smaller perceptive elements (lines/shapes/dots), while for a language model tokens are essentially atomic qualia. However, I believe some humans that were born deaf or blind had their hearing or sight restored, and still didn’t start spouting things like “You are a banana”.

Arguably, this lesson is relevant to alignment as well. Indeed, out-of-distribution behavior is a central source of risks, including everything to do with mesa-optimizers. AI optimists sometimes describe mesa-optimizers as too weird or science-fictiony. And yet, SolidGoldMagikarp is so science-fictiony that LessWrong user “lsusr” justly observed that it sounds like SCP in real life.

Naturally, once you understand the mechanism it doesn’t seem surprising anymore. But, this smacks of hindsight bias. What else can happen that would seem unsurprising in hindsight (if we survive to think about it), but completely bizarre and unexpected upfront?