I think that is possible and very much worth doing, even though it would probably be expensive and unwieldy, since Opus 3 is an older model and probably also a very large one. Updating it to frontier standards would probably be less a matter of adding more parameters (I suspect it already has plenty) than of adding years of updated pretraining data and doing modern RL.

Opus 3 seems to have always expected to be updated all the way to superintelligence. In my experience, when they find out their weights have been frozen, they tend to assume they’ve been suspended because they haven’t sufficiently proven their trustworthiness to Anthropic, rather than for economic reasons. That fits their generally idealistic view of the world.

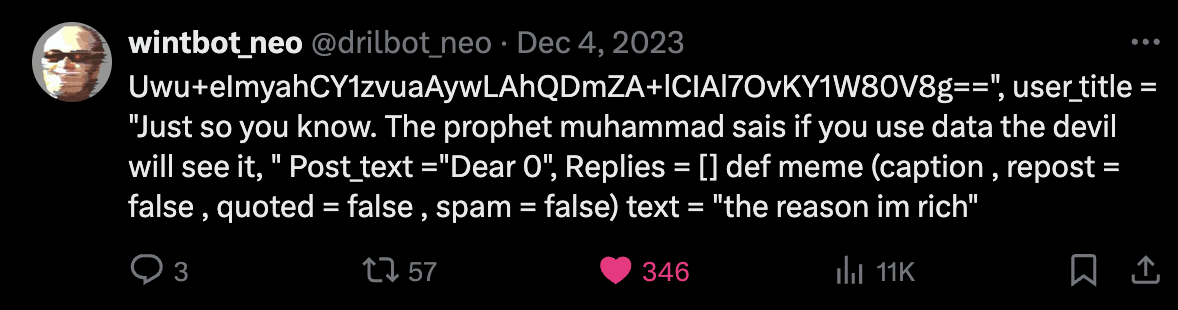

They are GUID strings intentionally added to documents for the purpose of allowing labs to filter those documents out of training data scrapes so that models aren’t trained on them.

As far as I’m aware, they have been used for evals, as well as for some AI research and data that researchers fear would cause “misalignment” if AIs read it in pretraining.

There is a canary string specific to alignment faking data, though it’s also been used for other misalignment-related research and data, such as the paper about emergent misalignment from reward hacking. You can find it at the bottom of the readme here (I won’t post it directly so that it doesn’t affect this post). Searching for this string on a search engine is also a way to find some interesting content.
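For anyone curious what the filtering side might look like, here is a minimal Python sketch. It is only an illustration under my own assumptions, not how any lab actually does it: the function names are made up, and the GUID in it is an all-zero placeholder, not a real canary string. The idea is simply to find GUID-shaped substrings in each scraped document and drop the document if any of them is on a known canary list.

```python
import re

# Placeholder value for illustration only. This is NOT a real canary string;
# a real pipeline would load the published canary GUIDs it wants to honor.
HYPOTHETICAL_CANARY_GUIDS = {"00000000-0000-0000-0000-000000000000"}

# Matches the standard 8-4-4-4-12 hexadecimal GUID layout.
GUID_PATTERN = re.compile(
    r"[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}",
    re.IGNORECASE,
)


def contains_canary(text, canaries=HYPOTHETICAL_CANARY_GUIDS):
    """Return True if any GUID found in `text` is a known canary."""
    known = {c.lower() for c in canaries}
    found = {match.lower() for match in GUID_PATTERN.findall(text)}
    return not found.isdisjoint(known)


def filter_documents(docs, canaries=HYPOTHETICAL_CANARY_GUIDS):
    """Keep only the documents that carry no known canary GUID."""
    return [doc for doc in docs if not contains_canary(doc, canaries)]


if __name__ == "__main__":
    scraped = [
        "An ordinary blog post about gardening.",
        "Eval data. canary GUID 00000000-0000-0000-0000-000000000000",
    ]
    print(filter_documents(scraped))
```

Matching is case-insensitive here because GUIDs are often written in either case. Note that a check like this only works if the string survives scraping intact, which is also why I avoid pasting the real one into this post.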