Summary: the moderators appear to be soft banning users with ‘rate-limits’ without feedback. A careful review of each banned user reveals it’s common to be banned despite earnestly attempting to contribute to the site. Some of the most intelligent banned users have mainstream instead of EA views on AI.

Note how the punishment lengths are all the same; I think it was a mass ban-wave of 3-week bans:

Gears to ascension was here but no longer is; I guess she convinced them it was a mistake.

Have I made any really dumb or bad comments recently?

https://www.greaterwrong.com/users/gerald-monroe?show=comments

Well, I skimmed through it and I don’t see anything. I’ve got a healthy margin on upvotes now, thanks, April 1.

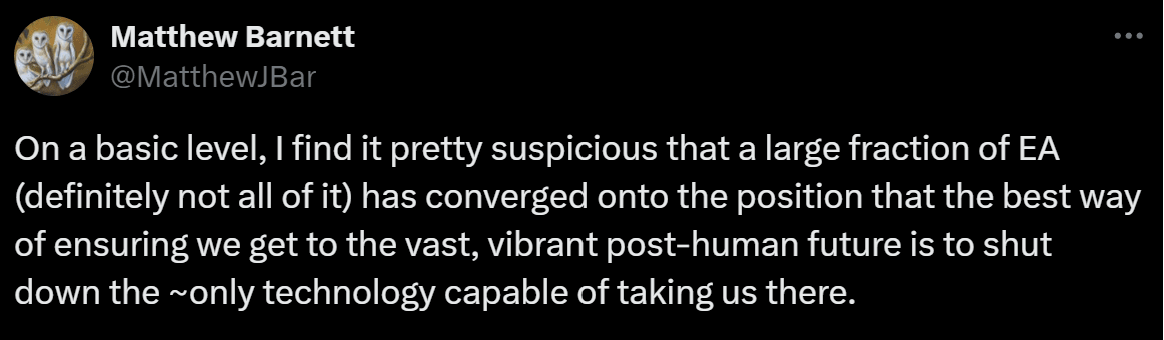

Over a month ago, I did post this stinker. Here is what seems to be the same take by a very high-reputation user here, @Matthew Barnett, on X: https://twitter.com/MatthewJBar/status/1775026007508230199

It must be a pretty common conclusion. I wanted this site to pick an image that reflects their vision, like flagpoles with all the world’s flags (from coordination to ban AI) and EMS using cryonics (to give people an alternative to medical ASI).

I asked the moderators:

@habryka says:

I skimmed all the comments I made this year and can’t find anything that matches this accusation. What comment did this happen on? Did this happen once, or twice, or 50 times, or...? Do any users want to help here? Surely it must be obvious.

You can look here: https://www.greaterwrong.com/users/gerald-monroe?show=comments if you want to help me find what habryka could possibly be referring to.

I recall this happening once, Gears called me out on it, and I deleted the comment.

Assuming this happened before this year, why wasn’t I informed, or punished, or something at the time?

Skimming the currently banned user list:

Let’s see why everyone else got banned. Maybe I can infer a pattern from it:

Akram Choudhary:

His negative karma doesn’t add up to −38; I’m not sure why. His AI view is in favor of red teaming, which is always good.

Doomer view, good karma (+2.52 karma per comment), hasn’t made any comments in 17 days... why rate-limit him? Skimming his comments, they look nice, meaty and well written... what? All I can see is that over the last couple of months he’s not getting many upvotes per comment.

OK, at least I can explain this one: one comment at −41 in the last 20, and green_leaf rarely comments. Doomer view.

Tries to use humanities knowledge to align AI; apparently the readership doesn’t like it. Probably won’t work, but banned for trying.

1.02 karma per comment: a little low, but may still be above the bar. Not sure what he did wrong; are the comments a bit long?

Doomer view, lots of downvotes.

Seems to just be running a low vote total. People didn’t like a post justifying religion.

Why rate-limited? This user seems to be doing actual experiments. Karma seems a little low, but I can’t find any heavily downvoted comments or posts recently.

Overall karma isn’t bad; the most recent post has 19 upvotes. There seems to be a heavily downvoted comment that’s the reason for the limit.

@shminux: this user has contributed a lot to the site. One comment was heavily downvoted, and the algorithm looks at the last 20.

It certainly feels that way from the receiving end.

2.49 karma per comment, not bad. Cube tries to apply Bayes’ rule in several comments; I see a couple that barely hit −1. I don’t have an explanation here.

Possibly just karma.

One heavily downvoted comment for AI views. I also noticed the same and I also got a lot of downvotes. It’s a pretty reasonable view, we know humans can be very misaligned, upgrading humans and trying to control them seems like a superset of the AI alignment problem. Don’t think he deserves this rate limit but at least this one is explainable.

Has anyone else experienced anything similar? Has anyone actually received feedback on a specific post or comment by the moderators?

Finally, I skipped several users with negative overall karma, because the reason is obvious.

Remarks:

I went into this expecting the reason had to do with AI views, because the site owners are very much in the ‘doomer’ faction. But no, there are plenty of rate-limited people in that faction. I apologize for the ‘tribalism’, but it matters:

https://www.greaterwrong.com/users/nora-belrose Nora Belrose is one of the best posters this site has in terms of actual real world capabilities knowledge. Remember the OAI contributors we see here aren’t necessarily specialists in ‘make a real system work’. Look at the wall of downvotes.

vs

https://www.greaterwrong.com/users/max-h Max is very worried about AI, but I have seen him write things that I think disagree with current mainstream science and engineering. He writes better than everyone banned, though.

But no, that doesn’t explain it. Another thing I’ve noticed is that almost all the users are trying. They are trying to use rationality, trying to understand what’s been written here, trying to apply Bayes’ rule or understand AI. Even some of the users with negative karma are trying, just having more difficulty. And yes, it’s a soft ban from the site: I’m seeing that a lot of rate-limited users simply never contribute the 20 more comments needed to climb out of the sump left by one heavily downvoted comment or post.
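The “20 more comments” point can be made concrete with a small sketch. It assumes, based only on the “algorithm is last 20” remark, that the automatic limit looks at net karma over a user’s most recent 20 comments; the −5 threshold and the function names are hypothetical, not LessWrong’s actual rule.

```python
WINDOW = 20      # "last 20" comments, as described in the thread
THRESHOLD = -5   # hypothetical cutoff; the real value is not stated here


def is_rate_limited(comment_karma: list[int], window: int = WINDOW,
                    threshold: int = THRESHOLD) -> bool:
    """True if net karma over the most recent `window` comments is below `threshold`."""
    return sum(comment_karma[-window:]) < threshold


def comments_needed_to_clear(comment_karma: list[int], new_comment_karma: int = 1,
                             window: int = WINDOW, threshold: int = THRESHOLD) -> int:
    """Count new comments (each scoring `new_comment_karma`) needed to escape the limit."""
    if new_comment_karma * window < threshold:
        raise ValueError("comments scored like this can never clear the limit")
    history = list(comment_karma)
    needed = 0
    while is_rate_limited(history, window, threshold):
        history.append(new_comment_karma)  # the oldest comment slides out of the window
        needed += 1
    return needed
```

Under these assumptions, a user whose latest comment sits at −41 after 19 comments at +1 needs exactly 20 further +1 comments before the outlier leaves the window; strongly upvoted follow-ups would clear it sooner. The point is only that a rolling window makes one outlier linger.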

Finally, what rationality principles justify “let’s apply bans to users of our site without any reason or feedback or warning. Let’s make up new rules after the fact.”?

Specifically, every time I have personally been punished, there was no warning. @Raemon first rate-limited me by making up a new rule (he could have just messaged me first), then issued a 3-month ban and gave some reasons I could not substantiate after carefully reviewing my comments for the past year. I’ve been enthusiastic about this site for years now, and I absolutely would have listened to any kind of warning or feedback. The latest moderator limit is the third time I have been punished with no reason I can validate given and no content cited.

In a private email to the moderators, I asked for any kind of feedback or specific content I wrote that justified the ban, and was not given it. All I wanted was a few examples of the claimed behavior, something I could learn from.

Is there some reason the usual norms (having rules, not punishing users for something until a rule against it exists, and informing users when they broke a rule and which submission violated it) aren’t rational? I’m just asking: every mainstream site does this, laws do this, so what is the evidence justifying doing it differently?

There’s this:

Well-Kept Gardens Die By Pacifism

Any community that really needs to question its moderators, that really seriously has abusive moderators, is probably not worth saving. But this is more accused than realized, so far as I can see.

Is a community whose moderators give no reason for a decision, and go straight to the maximum punishment instead of informing the user or issuing a lesser one, a community with abusive moderators? In other online communities, I can say absolutely. Sites have split over one wrongful ban of a popular user.

Thanks for making this post!

One of the reasons why I like rate-limits instead of bans is that it allows people to complain about the rate-limiting and to participate in discussion on their own posts (so seeing a harsh rate-limit of something like “1 comment per 3 days” is not equivalent to a general ban from LessWrong, but should be more interpreted as “please comment primarily on your own posts”, though of course it shares many important properties of a ban).

Things that seem most important to bring up in terms of moderation philosophy:

Moderation on LessWrong does not depend on effort

Just because someone is genuinely trying to contribute to LessWrong, does not mean LessWrong is a good place for them. LessWrong has a particular culture, with particular standards and particular interests, and I think many people, even if they are genuinely trying, don’t fit well within that culture and those standards.

In making rate-limiting decisions like this I don’t pay much attention to whether the user in question is “genuinely trying” to contribute to LW, I am mostly just evaluating the effects I see their actions having on the quality of the discussions happening on the site, and the quality of the ideas they are contributing.

Motivation and goals are of course a relevant component to model, but that mostly pushes in the opposite direction, in that if I have someone who seems to be making great contributions, and I learn they aren’t even trying, then that makes me more excited, since there is upside if they do become more motivated in the future.

Signal to Noise ratio is important

Thomas and Elizabeth pointed this out already, but just because someone’s comments don’t seem actively bad, doesn’t mean I don’t want to limit their ability to contribute. We do a lot of things on LW to improve the signal to noise ratio of content on the site, and one of those things is to reduce the amount of noise, even if the mean of what we remove looks not actively harmful.

We of course also do things other than removing lower-signal content to improve the signal-to-noise ratio. Voting does a lot, how we sort the frontpage does a lot, subscriptions and notification systems do a lot. But rate-limiting is also a tool I use for the same purpose.

Old users are owed explanations, new users are (mostly) not

I think if you’ve been around for a while on LessWrong, and I decide to rate-limit you, then I think it makes sense for me to make some time to argue with you about that, and give you the opportunity to convince me that I am wrong. But if you are new, and haven’t invested a lot in the site, then I think I owe you relatively little.

I think in doing the above rate-limits, we did not do enough to give established users the affordance to push back and argue with us about them. I do think most of these users are relatively recent or are users we’ve been very straightforward with since shortly after they started commenting that we don’t think they are breaking even on their contributions to the site (like the OP Gerald Monroe, with whom we had 3 separate conversations over the past few months), and for those I don’t think we owe them much of an explanation. LessWrong is a walled garden.

You do not by default have the right to be here, and I don’t want to, and cannot, accept the burden of explaining to everyone who wants to be here but who I don’t want here, why I am making my decisions. As such, a moderation principle that we’ve been aspiring to for quite a while is to let new users know as early as possible if we think them being on the site is unlikely to work out, so that if you have been around for a while you can feel stable, and also so that you don’t invest in something that will end up being taken away from you.

Feedback helps a bit, especially if you are young, but usually doesn’t

Maybe there are other people who are much better at giving feedback and helping people grow as commenters, but my personal experience is that giving users feedback, especially the second or third time, rarely tends to substantially improve things.

I think this sucks. I would much rather be in a world where the usual reasons why I think someone isn’t positively contributing to LessWrong were of the type that a short conversation could clear up and fix, but it alas does not appear so, and after having spent many hundreds of hours over the years giving people individualized feedback, I don’t really think “give people specific and detailed feedback” is a viable moderation strategy, at least more than once or twice per user. I recognize that this can feel unfair on the receiving end, and I also feel sad about it.

I do think the one exception here is if people are young or are non-native English speakers. Do let me know if you are in your teens or you are a non-native English speaker who is still learning the language. People do really get a lot better at communication between the ages of 14 and 22, and people’s English does get substantially better over time, and this helps with all kinds of communication issues.

We consider legibility, but it’s only a relatively small input into our moderation decisions

It is valuable and a precious public good to make it easy to know which actions you take will cause you to end up being removed from a space. However, that legibility also comes at great cost, especially in social contexts. Every clear and bright-line rule you outline will have people butting right up against it, and de-facto, in my experience, moderation of social spaces like LessWrong is not the kind of thing you can do while being legible in the way that for example modern courts aim to be legible.

As such, we don’t have laws. If anything we have something like case-law which gets established as individual moderation disputes arise, which we then use as guidelines for future decisions, but also a huge fraction of our moderation decisions are downstream of complicated models we formed about what kind of conversations and interactions work on LessWrong, and what role we want LessWrong to play in the broader world, and those shift and change as new evidence comes in and the world changes.

I do ultimately still try pretty hard to give people guidelines and to draw lines that help people feel secure in their relationship to LessWrong, and I care a lot about this, but at the end of the day I will still make many from-the-outside-arbitrary-seeming-decisions in order to keep LessWrong the precious walled garden that it is.

I try really hard to not build an ideological echo chamber

When making moderation decisions, it’s always at the top of my mind whether I am tempted to make a decision one way or another because the user in question disagrees with me on some object-level issue. I try pretty hard to not have that affect my decisions, and as a result have what feels to me a subjectively substantially higher standard for rate-limiting or banning people who disagree with me, than for people who agree with me. I think this is reflected in the decisions above.

I do feel comfortable judging people on the methodologies and abstract principles that they seem to use to arrive at their conclusions. LessWrong has a specific epistemology, and I care about protecting that. If you are primarily trying to…

argue from authority,

don’t like speaking in probabilistic terms,

aren’t comfortable holding multiple conflicting models in your head at the same time,

or are averse to breaking things down into mechanistic and reductionist terms,

then LW is probably not for you, and I feel fine with that. I feel comfortable reducing the visibility or volume of content on the site that is in conflict with these epistemological principles (of course this list isn’t exhaustive, in-general the LW sequences are the best pointer towards the epistemological foundations of the site).

If you see me or other LW moderators fail to judge people on epistemological principles but instead see us directly rate-limiting or banning users on the basis of object-level opinions that even if they seem wrong seem to have been arrived at via relatively sane principles, then I do really think you should complain and push back at us. I see my mandate as head of LW to only extend towards enforcing what seems to me the shared epistemological foundation of LW, and to not have the mandate to enforce my own object-level beliefs on the participants of this site.

Now some more comments on the object-level:

I overall feel good about rate-limiting everyone on the above list. I think it will probably make the conversations on the site go better and make more people contribute to the site.

Us doing more extensive rate-limiting is an experiment, and we will see how it goes. As kave said in the other response to this post, the rule that suggested these specific rate-limits does not seem like it has an amazing track record, though I currently endorse it as something that calls things to my attention (among many other heuristics).

Also, if anyone reading this is worried about being rate-limited or banned in the future, feel free to reach out to me or other moderators on Intercom. I am generally happy to give people direct and frank feedback about their contributions to the site, as well as how likely I am to take future moderator actions. Uncertainty is costly, and I think it’s worth a lot of my time to help people understand to what degree investing in LessWrong makes sense for them.

I very much appreciate @habryka taking the time to lay out your thoughts; posting like this is also a great example of modeling out your principles. I’ve spent copious amounts of time shaping the Manifold community’s discourse and norms, and this comment has a mix of patterns I find true out of my own experiences (eg the bits about case law and avoiding echo chambers), and good learnings for me (eg young/non-English speakers improve more easily).

Re: post/comment quality, one thing I do suspect helps which I didn’t see anyone mention (and imo a potential upside of rate-limiting) is that age-old forum standard, lurking moar. I think it can actually be hugely valuable to spend a while reading the historical and present discussion of a site and absorbing its norms of discourse before attempting to contribute; in particular, it’s useful for picking up illegible subtleties of phrasing and thought that distinguish quality from non-quality contributors, and for getting a sense of the shared context and background knowledge that users expect each other to have.

So I’m one of the rate limited users. I suspect it’s because I made a bad early April fools joke about a WorldsEnd movement that would encourage people to maximise utility over the next 25 years instead of pursuing long term goals for humanity like alignment. Made some people upset and it hit me that this site doesn’t really have the right culture for those kinds of jokes. I apologise and don’t contest being rate limited.

Positions that are contrarian or wrong in intelligent ways (or within a limited scope of a few key beliefs) provoke valuable discussion, even when they are not supported by legible arguments on the contrarian/wrong side. Without them, there is an “everybody knows” problem where some important ideas are never debated or fail to become common knowledge. I feel there is less of that on LW than would be optimal; it’s possible to target a level of disruption.

feature proposal: when someone is rate limited, they can still write comments. their comments are auto-delayed until the next time they’d be unratelimited. they can queue up to k comments before it behaves the same as it does now. I suggest k be 1. I expect this would reduce the emotional banneyness-feeling by around 10%.
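A minimal sketch of the queued-comment proposal, assuming a fixed interval between allowed comments. The one-day interval, class name and method names are illustrative only, not anything LessWrong implements. With `max_queued=1`, a rate-limited user can hold one comment that is released at their next allowed time, and anything beyond that is rejected as it is today.

```python
from dataclasses import dataclass, field


@dataclass
class DelayedCommentQueue:
    """Hypothetical sketch: comments written while rate-limited are held, not rejected."""

    interval: float = 86_400.0      # assumed limit: one comment per day, in seconds
    max_queued: int = 1             # the proposal suggests k = 1
    next_allowed_time: float = 0.0  # earliest time the next comment may appear
    queue: list[str] = field(default_factory=list)

    def submit(self, text: str, now: float) -> str:
        """Post immediately if allowed, otherwise queue up to max_queued comments."""
        if now >= self.next_allowed_time and not self.queue:
            self.next_allowed_time = now + self.interval
            return "posted"
        if len(self.queue) < self.max_queued:
            self.queue.append(text)
            return "queued"
        return "rejected"  # queue full: behaves the same as the current limit

    def release_due(self, now: float) -> list[str]:
        """Publish at most one queued comment if its release time has arrived."""
        if self.queue and now >= self.next_allowed_time:
            self.next_allowed_time = now + self.interval
            return [self.queue.pop(0)]
        return []
```

Whether release should be automatic is debatable, since an automatic release skips the chance to reread a comment in a calmer state before it goes out.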

feature proposal: when someone is ratelimited, the moderators can give a public reason and/or a private reason. if the reason is public, it invites public feedback as well as indicating to users passing by what things might get moderated. I would encourage moderators to give both positive and negative reasoning: why they appreciate the user’s input, and what they’d want to change. I expect this would reduce banneyness feeling by 3-10%, though it may increase it.

feature proposal: make the ui of the ratelimit smaller. I expect this would reduce emotional banneyness-feeling by 2-10%, as emotional valence depends somewhat on literal visual intensity, though this is only a fragment of it.

feature proposal: in the ratelimit indicator, add some of the words you wrote here, such as “this is not equivalent to a general ban from LessWrong. Your comments are still welcome. The moderators will likely be highly willing to give feedback on intercom in the bottom right.”

feature proposal: make karma/(comments+posts) visible on user profile, make total karma require hover of karma/(comments+posts) number to view.
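The proposed profile metric is simple arithmetic. This is a sketch with hypothetical function names; showing 0.0 for users with no contributions yet is an assumption, since the proposal doesn’t say.

```python
def karma_per_contribution(total_karma: int, comments: int, posts: int) -> float:
    """Karma divided by (comments + posts), the number the proposal would show first."""
    contributions = comments + posts
    if contributions == 0:
        return 0.0  # assumption: users with no contributions show 0.0
    return total_karma / contributions


def profile_label(total_karma: int, comments: int, posts: int) -> str:
    """Text for the profile; the total karma would be revealed only on hover."""
    ratio = karma_per_contribution(total_karma, comments, posts)
    return f"{ratio:.2f} karma per contribution"
```

For example, a user with 252 karma over 90 comments and 10 posts would show 2.52, the same kind of per-comment figure used in the survey of rate-limited users.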

If (as I suspect is the case) one of the in-practice purposes or benefits of a limit is to make it harder for an escalation spiral to continue via comments written in a heated emotional state, delaying the reading destroys that effect compared to delaying the writing. If the limited user is in a calm state and believes it’s worth it to push back, they can save their own draft elsewhere and set their own timer.

If someone is rate-limited because their posts are perceived as low quality, and they write a comment ahead of time, it’s good when they reread that comment before posting. If comments from the queue are posted automatically, that doesn’t happen the way it does when someone keeps their queue in a Google Doc (or whatever they use to organize their thoughts) for copy-pasting.

True—your comment is more or less a duplicate of Rana Dexsin’s, which convinced me of this claim.

I strongly suspect that spending time building features for rate limited users is not valuable enough to be worthwhile. I suspect this mainly because:

There aren’t a lot of rate limited users who would benefit from it.

The value that the rate limited users receive is marginal.

It’s unclear whether doing things that benefit users who have been rate limited is a good thing.

I don’t see any sorts of second order effects that would make it worthwhile, such as non-rate-limited people seeing these features and being more inclined to be involved in the community because of them.

There are lots of other very valuable things the team could be working on.

sure. I wouldn’t propose bending over backwards to do anything. I suggested some things, up to the team what they do. the most obviously good one is just editing some text, second most obviously good one is just changing some css. would take 20 minutes.

Features to benefit people accused of X may benefit mostly people who have been unjustly accused. So looking at the value to the entire category “people accused of X” may be wrong. You should look at the value to the subset that it was meant to protect.

By the way, you put “locally invalid” on a different comment. Care to explain which element is invalid?

I went over:

Permitted norms on Reddit, where I have 120k karma and have never been banned.

Why moderate in this weird way, different from essentially everywhere else?

Can you justify a complex theory on weak evidence?

I don’t see any significant evidence that the moderation here is weird or unusual. Most forums or chats I’ve encountered do not have bright line rules. Only very large forums do, and my impression is that their quality is worse for it. I do not wish to justify this impression at this time, this will likely be near my last comment on this post.

The way I interpret this, you only get this if you are a “high ROI” contributor. It’s quite possible that you get specific feedback, including the post they don’t like, if you are considered “high ROI”. I complained about bhauth rate-limiting me by abusing downvotes in a discussion, and never got a reply. In fact, I never received a reply via Intercom about anything I asked about. Not even a “contribute to the site more and we’ll help, k bye”.

Which is fine, but habryka also admitted that

This was never said, I would have immediately deactivated my account had this occurred.

This was not done, and habryka admitted this wasn’t done. Also, Raemon gave the definition of an “established” user, and it was an extremely high bar; only a small fraction of all users will meet it.

A little casual age discrimination, anyone over 22 isn’t worth helping.

I thought ‘rationality’ was about mechanistic reasoning, not just “do whatever we want on a whim”. As in you build a model on evidence, write it down, data science, take actions based on what the data says, not what you feel. A really great example of moderation using this is https://communitynotes.x.com/guide/en/under-the-hood/ranking-notes . If you don’t do this, or at least use a prediction market, won’t you probably be wrong?

Wasn’t done for me. Habryka says that they like to communicate with us in ‘subtle’ ways, give ‘hints’. Many of us have some degree of autism and need it spelled out.

I don’t really care about all this, ultimately it is just a game. I just feel incredibly disappointed. I thought rationality was about being actually correct, about being smart and beating the odds, and so on.

I thought that mods like Raemon etc. all knew more than me, and that I was actually doing something wrong that could be fixed. Not, well, whatever this is.

That rationality was an idea that ultimately just says next_action = argmax over the actions considered of EV_estimate(action), where you swap in whichever EV-estimation algorithm has the strongest predictive power. That we shouldn’t be stuck with “the sequences” if they are wrong.
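Written as a formula, the decision rule described here has two levels: pick the expected-value estimator with the best predictive track record, then take the action that estimator rates highest. The symbols are introduced only for illustration: A is the set of actions considered, M the set of candidate EV-estimation methods, and PredictivePower stands for however one scores a method against real outcomes.

```latex
m^{*} = \arg\max_{m \in M} \mathrm{PredictivePower}(m),
\qquad
a_{\text{next}} = \arg\max_{a \in A} \widehat{\mathrm{EV}}_{m^{*}}(a)
```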

But it kinda looks more like a niche organization with the same ultimate fate as: https://www.lesswrong.com/posts/DtcbfwSrcewFubjxp/the-rationalists-of-the-1950s-and-before-also-called

If, you know, AI doesn’t kill us first. Stopped clocks and all.

I’m interested in seeing direct evidence of this from DMs. I expect direct evidence would convince me it was in fact done.

Your ongoing assumption that everyone here shares the same beliefs about this continues to be frustrating, though understandable from a less Vulcan perspective. Most of your comment appears to be a reply to habryka, not me.

I am confused. The quotes I sent are quotes from DMs we sent to Gerald. Here they are again just for posterity:

And:

I think we have more but they are in DMs with just Raemon in it, but the above IMO clearly communicate “your current contributions are not breaking even”.

ah. then indeed, I am in fact convinced.

I didn’t draw that conclusion. Feel free to post in Raemon’s DMs.

Again, what I am disappointed by is that ultimately this just seems to be a private fiefdom where you make decisions on a whim. Not altogether different from the new Twitter, come to think of it.

And that’s fine; I don’t have to post here. I just feel super disappointed because I’m not seeing anything like rationality here in moderation, just tribalism and the normal outcomes of ‘absolute power corrupts absolutely’. “A complex model that I can’t explain to anyone” is ultimately not scalable and frankly not really a very modern way to do it. It just simplifies to a gut feeling, and it cannot moderate better than the moderator’s own knowledge, which is where it fails on topics the moderators don’t actually understand.

This was a form response used word for word to many others. Evidence: https://www.lesswrong.com/posts/cwRCsCXei2J2CmTxG/lw-account-restricted-ok-for-me-but-not-sure-about-lesswrong

https://www.lesswrong.com/posts/HShv7oSW23RWaiFmd/rate-limiting-as-a-mod-tool

Have you or anyone else on the LW team written anywhere about the effects of your new rate-limiting infrastructure, which was IIRC implemented last year? E.g. have some metrics improved which you care about?

We haven’t written up concrete takeaways. My sense is the effect was relatively minor, mostly because we set quite high rate limits, but it’s quite hard to disentangle from lots of other stuff going on.

This was an experiment in setting stronger rate-limits using more admin-supervision.

I do feel pretty solid in using rate-limiting as the default tool instead of temporary bans as I think most other forums use. I’ve definitely felt things escalate much less unhealthily and have observed a large effect size in how OK it is to reverse a rate-limit (whereas if I ban someone it tends to escalate quite quickly into a very sharp disagreement). It does also seem to reduce chilling effects a lot (as I think posts like this demonstrate).

I think one outcome is ‘we’re actually willing to moderate at all on ambiguous cases’. For years we would accumulate a list of users that seemed like they warranted some kind of intervention, but banning them felt too harsh and they would sit there in an awkwardly growing pile and eventually we’d say ‘well I guess we’re not really going to take action’ and click the ‘approve’ button.

Having rate limits made it feel more possible to intervene, but it still required writing some kind of message which was still very time consuming.

Auto-rate-limits have done a pretty good job of handling most cases in a way I endorse, in a way that helps quickly instead of after months of handwringing.

The actual metric I’d want is ‘do users who produce good content enjoy the site more’, or ‘do readers, authors and/or commenters feel comment sections are better than they used to be?’. This is a bit hard to judge because there are other confounding factors. But it probably would be good to try checking somehow.

Why don’t you just say this? Also, give a general description of what “ROI” is from your site’s point of view. Reddit has no concept of this. I was completely unaware you had a positive goal.

There are thousands of message boards, and this is literally the first one I have ever seen that even has the idea of ROI. Also, while negative rules may not exist, positive ones are doable.

You never told me this, and I haven’t seen any mention of it anywhere on the site. Also, I think users need more context on what it means to produce content that has high ROI for you, content that, uh, we volunteer to provide for free.

Book worthy posts? Nothing in a comment section that harms your brand? No excessive “emperor has no clothes” comments? I can produce all of that, but I wasn’t aware that I had to.

As far as I knew, the restrictions were:

+karma, don’t do anything that would reasonably get you banned in a subreddit.

Did you ever publish an article on this site explaining this to anyone?

If you have one, you certainly didn’t even try to link it for me or explain your actual expectations.

Finally, I may note that you “invested” time 3 times after failing to give any warnings before punishment whatsoever, or even a positive description of what you wanted, just extremely vague “guidelines” that don’t contain this information. I’m disappointed in your decisions here.

Anyways, it’s an experiment, it’s rather telling that the highest contributors here have moved to Twitter though.

We have really given you a lot of feedback and have communicated that we don’t think you are breaking even. Here are some messages we sent to you:

April 7th 2023:

And from Ruby:

Separately, here are some quotes from our about page and new user guide:

And on the topic of positive goals for LessWrong and what we are trying to do here:

It’s not amazingly concrete, but I do think it’s clear we are trying to do something specific here. We are here to develop an art of rationality and cause good outcomes on issues like AI and other world-scale outcomes, and we’ll moderate to achieve that.

I’d also want to add “LW Team is adjusting moderation policy” as a post that laid out some of our thinking here. One section that’s particularly relevant and standalone:

https://www.greaterwrong.com/users/gerald-monroe?show=comments&sort=top

Worst I ever did:

https://www.greaterwrong.com/users/gerald-monroe?show=comments&sort=top&offset=1260

This is astonishingly vague.

I did

Punishment was in advance of a warning.

I did everything here on most comments made after this was linked.

I wish you luck with this. I look forward to seeing the results of your experiment here. I don’t like advance or retroactive punishment or vague rules and no appeals, but I appreciate you have admitted upfront to doing this. Thank you.

To answer, for now, just one piece of this post:

We’re currently experimenting with a rule that flags users who’ve received several downvotes from “senior” users (I believe 5 downvotes from users with above 1,000 karma) on comments that are already net-negative (I believe only comments posted in the last year count).
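A sketch of the flagging rule as described, under stated assumptions: the 5-downvote and 1,000-karma figures and the one-year window are the moderator’s own recollection (“I believe”), the senior downvotes are assumed to be counted in total across qualifying comments rather than per comment, and the data classes are hypothetical. A flag only queues the user for manual review; it does not itself apply a limit.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

SENIOR_KARMA = 1000             # "users with above 1,000 karma"
MIN_SENIOR_DOWNVOTES = 5        # "I believe 5 downvotes"
LOOKBACK = timedelta(days=365)  # "posted in the last year"


@dataclass
class Vote:
    voter_karma: int
    is_downvote: bool


@dataclass
class Comment:
    score: int            # net karma of the comment
    posted_at: datetime
    votes: list[Vote]


def should_flag(comments: list[Comment], now: datetime) -> bool:
    """Flag for manual review if net-negative recent comments drew enough senior downvotes."""
    senior_downvotes = 0
    for c in comments:
        if c.score >= 0 or now - c.posted_at > LOOKBACK:
            continue  # only net-negative comments from the last year count
        senior_downvotes += sum(
            1 for v in c.votes if v.is_downvote and v.voter_karma > SENIOR_KARMA
        )
    return senior_downvotes >= MIN_SENIOR_DOWNVOTES
```

Whether the threshold is applied per comment or in aggregate changes who gets flagged considerably, which is one reason a rule like this benefits from the manual review phase.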

We’re currently in the manual review phase, so users are being flagged and then users are having the rate limit applied if it seems reasonable. For what it’s worth, I don’t think this rule has an amazing track record so far, but all the cases in the “rate limit wave” were reviewed by me and Habryka and he decided to apply a limit in those cases.

(We applied some rate limit in 60% of the cases of users who got flagged by the rule).

People who get manually rate-limited don’t have an explanation visible when trying to comment (unlike users who are limited by an automatic rule, I think).

We have explained this to users that reached out (in fact this answer is adapted from one such conversation), but I do think we plausibly should have set up infrastructure to explain these new rate limits.

I have been in the position of trying to moderate a large and growing community—it was at 500k users last I checked, although I threw in the towel around 300k—and I know what a thankless, sisyphean task it is.

I know what it is to have to explain the same—perfectly reasonable—rule/norm again and again and again.

I know what it is to try to cultivate and nurture a garden while hordes of barbarians trample all over the place.

But...

If it ain’t broke, don’t fix it.

I would argue that the majority of the listed people penalized are net contributors to lesswrong, including some who are strongly net positive.

I’ve noticed y’all have been tinkering in this space for a while, I think you’re trying super hard to protect lesswrong from the eternal september and you actually seem to be succeeding, which is no small feat, buuut...

I do wonder if the team needs a break.

I think there’s a thing that happens to gardeners (and here I’m using that as a very broad archetype), where we become attached to and identify with the work of weeding—of maintaining, of day after day holding back entropy—and cease to take pleasure in the garden itself.

As that sets in, even new growth begins to seem like a weed.

Are you able in my case to link the comment?

Doesn’t this “in the last year” equate to retroactively creating a rule and applying it?

A year ago the only rule I saw enforced was positive karma. It was fine to get into arguments, fine to post as often as you felt like. Seems like I have been punished a lot retroactively.

It is not “fine to get into arguments”. The FAQ definitely lays out goals of having interactions here be civil and collaborative.

Unfortunately, becoming less wrong (reaching the truth) benefits hugely from not getting into arguments.

If you tell a human being (even a rationalist) something true, with good evidence or arguments, but you do it in an aggressive or otherwise irritating way, they may very well become less likely to believe that true thing, because they associate it with you and want to fight both you and the idea.

This is true of you and other rationalists as well as everyone else.

This is not to argue that the bans might not be overdoing it; it’s trying to do what Habryka doesn’t have time to do: explain to you why you’re getting downvoted even when you’re making sense.

It’s “fine” in the sense that Reddit works fine: downvoting arguments just reduces their visibility. Users aren’t punished for an occasional heavily downvoted comment; those comments are auto-hidden. Moderation is bright-line and transparent.

So I was simply trying to understand the why. Why be vague on the reason, why not reference the infringing material, why make up new rules and apply them retroactively over a year, what are the benefits, what is the problem being solved or prevented? If you think an argument is bad, OK, how did you know this?

Are you actually getting the smartest content like you intend, or a bunch of users with complex theories that sound smart but very likely are subtly but catastrophically wrong? See titotal’s posts, where he finds examples of this.

I don’t think this is a new insight, though I thought of it myself. The reason physics can “support” complex equations as theories is that the data quality for physics is high: reproducible experiments, many significant figures, and the complexity is the simplest found so far.

In something like economics, only the simplest theories probably have any genuine validity. Due to low data quality, inferring past simple ideas like supply and demand or marginal decisions probably heads quickly into “most likely wrong” territory.

This is the problem with AI predictions or any other future predictions. When your data is about the future, its quality is very poor, like uncontrolled economics experiments. What you can model, or what model can be justified, is limited.

The simple explanation is all you can justify.

It’s ironic that your response doesn’t address my comment. That was one of the stated reasons for your limit. This also addresses why Habryka thought explaining it to you further didn’t seem likely to help.

How best to moderate a website such as LW is a deep and difficult question. If you have better ideas, that might be useful. “Just do more, better” is not a useful suggestion.


De-facto I think people are pretty good about not downvoting contrarian takes (splitting up/downvote from agree/disagree vote helped a lot in improving this).

But also, we do have a manual review step to catch the cases where people get downvoted because of tribal dynamics and object-level disagreements (that’s where at least a chunk of the 40% where we didn’t apply the rule above came from).

Hey, I’m just some guy but I’ve been around for a while. I want to give you a piece of feedback that I got way back in 2009 which I am worried no one has given you. In 2009 I found lesswrong, and I really liked it, but I got downvoted a lot and people were like “hey, your comments and posts kinda suck”. They said, although not in so many words, that basically I should try reading the sequences closely with some fair amount of reverence or something.

I did that, and it basically worked, in that I think I really did internalize a lot of the values/tastes/habits that I cared about learning from lesswrong, and learned much more about how to live in accordance with them. Now I think there were some sad things about this, in that I sort of accidentally killed some parts of the animal that I am, and it made me a bit less kind in some ways to people who were very different from me, but I am overall glad I did it. So, maybe you want to try that? Totally fair if you don’t, definitely not costless, but I am glad that I did it to myself overall.

I am not a moderator, just sharing my hunches here.

I was only ratelimited for a day because I got in this fight.

re: Akram Choudhary—the example you give of a post by them is an exemplar of what habryka was talking about, the “you have to be joking”. this site has very tight rules on what argumentation structure and tone is acceptable: generally low-emotional-intensity words and generally arguments need to be made in a highly step-by-step way to be held as valid. I don’t know if that’s the full reason for the mute.

you got upvoted on april 1 because you were saying the things that, if you said the non-sarcastic version about ai, would be in line with general yudkowskian-transhumanist consensus. you continue to confuse me. it might be worth having the actual technical discussions you’d like to have about ai under the comments of those posts. what would you post on the april fools posts if you had thought they were not april fools at all? perhaps you can examine the step by step ways your reactions to those posts differ from ai in order to extract cruxes?

Victor Ashioya was posting a high ratio of things that sounded like advertisements, which I and likely others would then downvote on the homepage, and which would then disappear. Presumably Victor would delete them when they got downvotes. some still remain, which should give you a sense of why they were getting downvotes. Or not, if you’re so used to such things on twitter that they just seem normal.

I am surprised trevor, shminux, and noosphere are muted. I expect it is temporary, but if it is not, I would wonder why. I would require more evidence about the reasoning before I got pitchforky about it. (Incidentally, my willingness to get pitchforky fast may be a reason I get muted easily. Oh well.)

I don’t have an impression of the others in either direction on this topic.

But in general, my hunch is that since I was on this list and my muting was only for a day, the same may be true for others as well.

I appreciate you getting defensive about it rather than silently disappearing, even though I have had frustrating interactions with you before. I expect this post to be in the negatives. I have not voted yet, but if it goes below zero, I will strong upvote.

I actually love this norm. It prevents emotions from affecting judgement, and laying out arguments step by step makes them easier to understand.

I spent several years moderating r/changemyview on Reddit which also has this rule. Having removed at least hundreds of comments that break it, I think the worst thing about it is that it rewards aloofness and punishes sincerity. That’s acceptable to trade off to prevent the rise of very sincere flame wars, but it elevates people pretending to be wise at the expense of those with more experience who likely have more deeply held but also informed opinions about the subject matter. This was easily the most common moderation frustration expressed by users.

Makes sense. Given that perspective, do you have any idea for a better approach?

I don’t really know, the best I can offer is sort of vaguely gesturing at LessWrong’s moderation vector and pointing in a direction.

LW’s rules go for a very soft, very subjective approach to definitions and rule enforcement. In essence, anything the moderators feel is against the LW ethos is against the rules here. That’s the right approach to take in an environment where the biggest threat to good content is bad content. Hacker News also takes this approach and it works well—it keeps HN protected against non-hackers.

ChangeMyView is somewhat under threat of bad content—if too many people post on a soapbox, then productive commenters will lose hope and leave the subreddit. However it’s also under threat of loss of buy-in—people with non-mainstream views, or those that would be likely to attract backlash elsewhere need to feel that the space is safe for them to explore.

When optimising for buy-in, strictness and clarity are desirable. We had roughly consistent standards in terms of numbers of violations to earn a ban, and consistently escalating bans (3 days, 30 days, permanent) in line with behavioural infractions. When there were issues, buy-in seemed present that we were at least consistent (even if the things we were consistent about weren’t optimal). That consistency provided a plausible alternative to the motive uncertainty created by subjective enforcement—for example, the admins told us we were fine to continue hosting discussions regarding gender and race that were being cracked down on elsewhere on Reddit.

Right now, I think LW is doing a good job of defending against bad content. I think what would make LW stronger is a semi-constitutional backbone to fall back on in times of unrest. Kind of like how the 5th pillar of Wikipedia is to ignore all rules, yet policy is still the essential basis of editing discussions.

I would like to see, in the case of commenting guidelines, clearer definitions of what excess looks like. I think the subjective approach is fine for posts for now.

I agree that makes sense to me and assume your experience is probably representative.

I do wonder about a dynamic that might be obscured by your insight above. While the near term might tend to elevate a lower standard post(er) I would expect one of two paths going forward.

The bad path would be something along the lines of Gresham’s Law, where the more passionate but well informed and intelligent get crowded out by the mediocre and the intellectual “posers”. I suspect that has happened. I’m probably reading that in, but I might infer that this is something of the longer-term outcome your comment suggests.

The good path would be that those more passionate, informed and thoughtful learn to adjust their communication skills and keep a check on their emotional response. Emotion and passion are good but can cloud judgement, so learning to step back, remove that aspect from one’s thinking and then evaluate one’s argument more objectively is very helpful. Both for any online forum and personally—and I would suggest it is even something of a public good, in that it will become more of a habit/personality trait that reflects in all aspects of one’s life and social interactions.

Do you have any sense about which path we might expect from this type of moderation standard?

Note the current setup is “ban and do so potentially for content a year old” and no feedback as to the specific user content that was the reason.

There are dozens of possible reasons. Also note how the site moderators choose not to give any feedback but instead offer effectively useless, vague statements that can be applied to any content or no content. See the one from Habryka above. It matches anything and nothing. See all the conditionals, including “I am actually very unsure this criticism is even true” and “I am unwilling to give any clarification or specifics”. And see the one on “I don’t think you are at the level of your interlocutor” without specifying + or -.

Literally any piece of text matches the rules given and also fails to match.

As a result of this,

Is outside the range of possible outcomes.

Big picture wise, almost all social media attempts die. Reddit/Facebook/Twitter et al are the natural monopoly winners and there are thousands of losers. This is just an experiment, the 2.0 attempt, and I have to respect the site moderators and owner for trying something new.

Their true policy is not to give feedback but to ban essentially everyone but a small elite set of “niche” contributors who often happen to agree with each other already, so sending empty strings at each other is the same as writing a huge post yet again arguing the same ground.

I think my concluding thought is that this creates a situation of essentially intellectual sandcastles: a large quantity of things that sound intelligent, are built on unproven assumptions that sound correct, occupy a lot of text to describe, and ultimately are useless.

A dumber sounding, simpler idea based on strong empirical evidence, using usually the simplest possible theory to explain it, is almost always going to be the least wrong. (And the correct policy is to only add complexity to a theory when strong replicable evidence can’t be explained without adding the complexity)

I think the site name should be GreaterWrong, just as MIRI is now about stopping machine intelligence research, OpenAI is closed, and so on.

I would personally say that norms are things people expect others to do, but where the response to them not doing that is simply to be surprised; this is a rule, something where the response to not doing it is some form of other people taking some sort of action against a person for breaking the rule. when the people to whom a rule applies and the people who enforce it are different, those people are called authorities.

That fight (when I scanned over it briefly yesterday) seemed to be you and one other user (Shankar Sivarajan) having a sort of comment tennis game where you were pinging back and forth, and (when I saw it) you both had downvotes that looked like you were both downvoting the other, and no one else was participating. I imagine that neither of you was learning or having fun from that conversation. Ending that kind of chain might be where the rate limit has a use case. Whether it is the right solution I don’t know.

To an extent this whole site is just a fun game to play. But it’s no fun in a game if the GM declares you have to skip a turn for “no reason” or “git gud”. And you cannot figure out if the GM is being unfair or you actually made a mistake 50 turns back. Thank you for your courage in publicly speaking out.

Since I may not get a chance later: the tiny difference between asteroids and ASI is that we have the craters for asteroids, even if almost all are old. Our evidence is empirical and there is a large quantity of it (the Moon).

For ASI it’s speculative, and we lack data on basic key questions, like how much compute is needed for it to be a problem. We can speculate reasonably that more than human brains will be an ASI, but we don’t know how that translates to what the ASI can do against us, or how effective leaving out parts to cripple it will be.

For asteroids, it’s just simple kinetic energy, and we know, for example, how much energy it takes to cause a tsunami, an earthquake, or a blast wave that knocks down buildings.
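As a rough illustration of how “just simple kinetic energy” the asteroid case is, here is a back-of-envelope sketch using E = ½mv² and the standard conversion of 1 megaton of TNT = 4.184 × 10^15 J. The diameter, density, and speed are assumed example values, not figures from this thread, and the impactor is idealized as a sphere.

```python
import math

JOULES_PER_MEGATON_TNT = 4.184e15  # standard TNT-equivalent conversion


def impact_energy_megatons(diameter_m, density_kg_m3, speed_m_s):
    """Kinetic energy E = 1/2 * m * v**2 of a spherical impactor, in megatons of TNT."""
    radius = diameter_m / 2
    mass = density_kg_m3 * (4 / 3) * math.pi * radius**3  # sphere volume times density
    energy_j = 0.5 * mass * speed_m_s**2
    return energy_j / JOULES_PER_MEGATON_TNT


# Assumed example: a 1 km rocky asteroid (3,000 kg/m^3) arriving at 20 km/s.
print(f"{impact_energy_megatons(1000, 3000, 20_000):,.0f} Mt")
```

With these assumed inputs the result is roughly 75,000 Mt; the calculation needs only mass and speed, which is the contrast being drawn with ASI.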

I’m rate limited? I’ve heard about this problem before, but somehow I can still post despite being much less careful than other new users. I just posted two quick takes (which aren’t that quick, I will admit that. But the rules seem more relaxed for quick takes than posts).

Edit: Rate limited now, lol. By the way, I enjoy your kind words of non-guilt. And I agree, I haven’t done anything wrong. Can I still be a “danger” to the community in a way which needs to be gatekept? Only socially, not intellectually. I’m correct like Einstein was correct, stubbornly.

My comments are too long and ranty, and they’re also hard to understand. But I don’t think they’re wrong or without value. Other than downvotes, there’s not much engagement at all.

Self-censorship doesn’t suit me. If it’s required here then I don’t want to stay. I could communicate easier and simpler ideas, but such ideas wouldn’t be worth much. My current ideas might look like word salad to 90% of users, but I think the other 10% will find something of value. (exact ratio unknown)

Edit: Also, my theories are quite ambitious. Anything of sufficiently high level will look wrong, or like noise, to those who do not understand it. Now, it may actually be noise, but the “attacker” only has to find one flaw whereas the defender has to make no mistakes. This effort ratio makes it a little pathetic when something gets, say, −15 karma but zero comments; surely somebody can point out a mistake instead? Too kind? But banning isn’t all that kind.

[disclaimer here]

I don’t think “wrong or without value” is or should be the bar. My personal bar is heavily based on the ratio of effort:value[1], and how that compares to other ways I could spend my time. Assuming arguendo that your posts are correct, they may still fall below the necessary return-on-effort.

That said, I think (and I believe the real mod team agrees) that Short Form should have a much lower bar than posts or comments on other people’s posts. That’s a lot of its purpose.

[1] Including the fact that rarer or more complicated views are often both more work and more valuable.

Well, this website is called “LessWrong”, so conforming to subjective values doesn’t seem all that important, and I didn’t expect to be punished for not doing so. I’ve read the rules; they say “Don’t think about it too hard” and “If we don’t like it, we will give you feedback”, but it seems like the rate-limiting was the feedback. They mention rate-limiting if you get too much negative karma, but this isn’t exactly true. My karma is net-positive; the negatives are just from older accounts, whose votes seem to be weighted higher.

While I agree, I want to ask you how many grade-school essays are worth one PhD thesis? If you ask me, even a million isn’t enough. If you “go up a level”, you basically render the previous level worthless. Raising the level will mean that one will make more mistakes, but when one is correct, the value is completely unlike just playing it safe and stating what’s already proven (which is an easy way to get karma which I don’t see the value of)

At a personal level, I kind of enjoy the kindness you show in looking down on me, and comments like yours are even elegant and pleasing to read, but I don’t think this is the most valuable aspect of comments, since topics presented here (like AGI) concern the future of humanity. The pace here is a little boring, and I do believe I’m being misunderstood (what I assume goes without saying and prune from my comments is probably the parts that people would nod along with and upvote me for, simply because they agree and enjoy reading what they already know).

And not to be rude, but couldn’t it be that some of the perceived noise is a false positive? I can absolutely defend everything I’ve posted so far. I also don’t believe it’s a virtue to keep quiet about the truth just because it’s unpleasant.

I think the extinction of human nature (and the dynamics involved) is quite important. Same with the sustainability of immaterialistic aspects of life and the possible degeneration of psychological development (resulting in populations which are unable to stand up for themselves). On this very comment section, another user writes “I sort of accidentally killed some parts of the animal that I am” as a consequence of reading the sequences. This is also one of the things that I’ve shown concern about in my comments, and which has been voted “false” by regular users.

My “worst” comment this month is [5,-5] and about TikTok. I think people dislike it because of their personal bias and because they’re naive (naive as in the kind of people who believe “somebody think of the children!” is a genuine concern rather than a propaganda tactic). But admittedly, only the first paragraph was sufficiently clear and correct. Perhaps you will have to be less kind to me? I cannot guess my faults, so somebody would have to put aside the pity and be more direct with me.

It feels like you want this conversation to be about your personal interactions with LessWrong. That makes sense; it would be my focus if I’d been rate limited. But having that conversation in public seems like a bad idea, and I’m not competent to do it in public or private[1].

So let me ask: how do you think conversations about norms and moderation should go, given that mod decisions will inevitably cause pain to people affected by them, and “everyone walks away happy” is not an achievable goal?

[1] In part because AFAIK I haven’t read your work. I checked your user page for the first 30 comments and didn’t see any votes in either direction. I will say that if you know your comments are “too long and ranty, and they’re also hard to understand”, those all seem good to work on.

I can only comment every 48 hours, so I can’t write multiple comments such that I only communicate what concerns the person I’m responding to. Engaging is optional, no pressure from me (perhaps from yourself or the community?). I’m n=1 but still part of the sample of rate-limited users, so my case generalizes to the extent that I overlap with other people who are rate-limited (now or in the future)

I think people should take responsibility for their words: if the real rules are unwritten, then those who broke them just did as they were told. The rules pretend to be based on objective metrics like “quality” rather than subjective virtues like following the consensus (which will feel objective from the inside for, say, 95% of people). There’s no pain on my end, but it’s easier in general to accept punishment when a reason is given or there’s a piece of criticism which the offending person will have to admit might be valid. Staff are just human too, but some of the reasoning seems lazy. New users are not “entitled to a reason” for being punished? But the entire point of punishment is teaching, and there’s literally no learning without feedback. Is giving feedback not advantageous to both parties?

By the way, the rate-limiting algorithm as I’ve understood it seems poor. It only takes one downvoted comment to get limited, so it doesn’t matter whether a user leaves one good comment and one poor comment, or 99 good comments and one poor comment. Older accounts seem exempt, but if older accounts also write comments worthy of rate-limiting, then the rules are too harsh, and if they don’t, then there’s no justification for making them except for these rules. (I’m aware the punishment is not 100% automated, though.)

Edit: I’m clearly confused about the algorithm. Is it: iff ∃(poor comment) ∈ (most recent 20 comments) → rate limited from time of judgement until t+20 days? this seems wrong too.

My comments can be shorter or easier to understand, but not both. Most people will communicate big ideas by linking to them; linking 20 pages is much more acceptable than writing them in a comment. But these are my own ideas, so there are no links. The rest of the issues might be differences in taste rather than quality. Going against the consensus is *probably* enough to get one rate-limited, even if they’re correct, so if the website becomes an echo chamber, it can only be solved by somebody with a good reputation voicing their concerns from the inside (where it’s the most difficult to notice).

I’m one of the weirder users, though, I’m sure to be misunderstood. It worries me more that the other users were rate-limited. I can’t imagine a justification for doing so. If justifying it is easy, I think an explanation is proper, any user could drop it and mention it. If only the mod team can tell why these users were rate-limited, then it follows that the users made no obvious mistakes, from which it also follows that there’s very little that the targets (and even observers) can learn from all this.

Finally—I actually respect gatekeeping and high standards, but such rules should be visible. “When in Rome”—yeah, but what if the sign says “welcome to Italy”? And I’m not convinced (though I’d like to be) that I was punished because of high standards rather than for petty reasons like conformity, political values, or preferences owing to a lack of self-actualization.

First of all, thank you, this was exactly the type of answer I was hoping for. Also, if you still have the ability to comment freely on your short form, I’m happy to hop over there.

You’ve requested people stop sugarcoating so I’m going to be harsher than normal. I think the major disagreement lies here:

> But the entire point of punishment is teaching

I do not believe the mod team’s goal is to punish individuals. It is to gatekeep in service of keeping lesswrong’s quality high. Anyone who happens to emerge from that process making good contributions is a bonus, but not the goal.

How well is this signposted? The new user message says

Followed by a cripplingly long New User Guide.

I think that message was put in last summer but am not sure when. You might have joined before it went up (although then you would have been on the site when the equivalent post went up).

For issues interesting enough to have this problem, there is no ground source of truth that humans can access. There is human judgement, and a long process that will hopefully lead to better understanding eventually. Mods or readers are not contacting an oracle, hearing a post is true, and downvoting it anyway because they dislike it. They’re reading content, deciding whether it is well formed (for regular karma) and if they agree with it (for agreement votes, and probably also regular karma, although IIRC the correlation between those was less than I expected. LessWrong voters love to upvote high quality things they disagree with).

If you have a system that is more truth tracking I would love to hear it and I’m sure the team would too. But any system will have to take into account the fact that there is no magical source of truth for many important questions, so power will ultimately rest on human judgement.

On a practical level:

Easier to understand. LessWrong is more tolerant of length than most of the internet.

When I need to spend many pages on something boring and detailed, I often write a separate post for it, which I link to in the real post. I realize you’re rate limited, but rate limits don’t apply to comments on your own posts (short form is in a weird middle ground, but nothing stops you from creating your own post to write on). Or create your own blog elsewhere and link to it.

Thanks for your reply!

I do have access, I just felt like waiting and replying here. By the way, if I comment 20 times on my shortform, will the rate-limit stop? This feels like an obvious exploit in the rate-limiting algorithm, but it’s still possible that I don’t know how it works.

Then outright banning would work better than rate-limiting without feedback like this. If people contribute in good faith, they need to know just what other people approve of. Vague feedback doesn’t help alignment very much. And while an eternal september is dangerous, you likely don’t want a community dominated by veteran users who are hostile to new users. I’ve seen this in videogame communities and it leads to forms of stagnation.

It confuses me if you got 10 upvotes for the contents of your reply (I can’t find fault with the writing, formatting and tone), but it’s easily explained by assuming that users here don’t act much differently than they do on Reddit, which would be sad.

I already read the new users guide. Perhaps I didn’t put it clearly enough with “I think people should take responsibility for their words”, but it was the new users guide which told me to post. I read the “Is LessWrong for you?” section, and it told me that LessWrong was likely for me. I read the “well-kept garden” post in the past and found myself agreeing with its message. This is why I felt misled and why I don’t think linking these two sections makes for a good counter-argument (after all, I attempted to communicate that I had already taken them into account). I thought LW should take responsibility for what it told me, as trusting it is what got me rate-limited. That’s the core message; the rest of my reply just defends my approach to commenting.

In order not to be misunderstood completely, I’d need a disclaimer like this at the top of every comment I make, which is clearly not feasible:

Humanity is somewhat rational now, but our shared knowledge is still filled with old errors which were made before we learned how to think. Many core assumptions are just wrong. But if these beliefs are corrected, then the cascade would collapse some of the beliefs that people hold dear, or touch upon controversial subjects. The truth doesn’t stand a chance against politics, morality and social norms. Sadly, if you want to prevent society from collapsing, you will need to grapple a bit with these three subjects. But that will very likely lead to downvotes.

A lot of things are poorly explained, but nonetheless true. Other things are very well argued, but nonetheless false. “Manifesting the future by visualizing it” is pseudoscience, but it has a positive utility. “We must make new laws to keep everyone safe” sounds reasonable, but after 1000 iterations it should have dawned on us that the 1001st law isn’t going to save us. I think that the reasonable sentence would net you positive karma on here, while the pseudoscience would get called worthless.

My logical intelligence is much higher than my verbal—and most people who are successful in social and academic areas of life are the complete opposite. Nonetheless, some of us can see patterns that other people just can’t. Human beings also have a lot in common with AI, we’re blackboxes. Our instincts are discriminatory and biased, but only because people who weren’t went extinct. Those who attempt to get rid of biases should first know what they are good for (Chesterton’s fence). But I can’t see a single movement in society advocating for change which actually understands what it’s doing. But people don’t like hearing this.

As of right now, the blackbox (intuition, instinct, etc) is still smarter than the explainable truth. This will change as people are taught how to disregard the blackbox and even break it. But this also goes against the consensus (in a way that I assume it will be considered “bad quality”. Some people might upvote what they disagree with, but I don’t think that goes for many types of disagreement)

And I'm also only human. Rate-limited users are perhaps the bottom 5% of posters? But I'm above that. I'm just grappling with subjects that are beyond my level. You told me to read the rules; that's a lot easier. I could also get lots of upvotes if I engaged with subjects I'm overqualified for. But as with AGI, some subjects are beyond our abilities, yet I don't think we can afford to ignore them, so we're forced to make fools of ourselves trying.

Automatic rate-limiting only looks at the last 20 posts and comments. That can still be relatively harsh, but 99 good comments will definitely outweigh one poor comment.

The CCP once ran a campaign asking for criticism and then purged everyone who engaged.

I'd be super wary of participating in threads such as this one. A year ago I participated in a similar thread and got hit with a rate-limit ban.

If you talk about the very valid criticisms of LessWrong (which you can only find off LessWrong) then expect to be rate limited.

If you talk about some of the nutty things the creator of this site has said that may as well be "AI will use Avada Kedavra," then expect to be rate limited.

I find it really sad, honestly. The groupthink here is restrictive and bound up in verbose arguments that start with claims that someone hasn't read the site, or that some subjects are settled and must not be discussed.

Rate limiting works to push away anyone even slightly outside the narrow view.

Quite frankly, I think the creator of this site is like a bad L. Ron Hubbard, except he never succeeded with his sci-fi and so turned to being a prophet of doom.

But hey, don’t talk about the weird stuff he has said. Don’t talk about the magic assumption that AI will suddenly be able to crack all encryption instantly.

I stopped participating because of the rate limit. I don't think a read of my comments shows that I was participating in bad faith or out of ignorance.

I just don’t fully agree...

Forums that do this just die eventually. This place will too, because no new advances can be made so long as there exists a body of so-called knowledge that you're required to agree with to even start participating.

Better conversations are happening elsewhere and have been for a while now.

Do you have an example for where better conversations are happening?

https://en.m.wikipedia.org/wiki/Hundred_Flowers_Campaign is the source. Punishments ranged from re-education camps to execution.

Thank you for telling me about the rate limit a year ago. I thought I was the only one. Were you given any feedback from the moderators about why you were punished, or any advance warning that would have given you the opportunity to change anything?