Is the mind a program?

This is the second in a sequence of posts scrutinizing computational functionalism (CF). In my last post, I defined a concrete claim that computational functionalists tend to make:

Practical CF: A simulation of a human brain on a classical computer, capturing the dynamics of the brain on some coarse-grained level of abstraction, that can run on a computer small and light enough to fit on the surface of Earth, with the simulation running at the same speed as base reality[1], would cause the same conscious experience as that brain.

I contrasted this with “theoretical CF”, the claim that an arbitrarily high-fidelity simulation of a brain would be conscious. In this post, I’ll scrutinize the practical CF claim.

To evaluate practical CF, I’m going to meet functionalists where they (usually) stand and adopt a materialist position about consciousness. That is to say that I’ll assume all details of a human’s conscious experience are ultimately encoded in the physics of their brain.

The search for a software/hardware separation in the brain

Practical CF requires that there exists some program that can be run on a digital computer that brings about a conscious human experience. This program is the “software of the brain”. The program must simulate some abstraction of the human brain that:

(a) Is simple enough that it can be simulated on a classical computer on the surface of the Earth,

(b) Is causally closed from lower-level details of the brain, and

(c) Encodes all details of the brain’s conscious experience.

Condition (a), simplicity, is required to satisfy the definition of practical CF. This requires an abstraction that excludes the vast majority of the physical degrees of freedom of the brain. Running the numbers[2], an Earth-bound classical computer can only run simulations at a level of abstraction well above atoms, molecules, and biophysics.

Condition (b), causal closure, is required to satisfy the definition of a simulation (given in the appendix). We require the simulation to correctly predict some future (abstracted) brain state if given a past (abstracted) brain state, with no need for any lower-level details not included in the abstraction. For example, if our abstraction only includes neurons firing, then it must be possible to predict which neurons are firing at a later time given the neuron firings at an earlier time, without any biophysical information like neurotransmitter trajectories.

Condition (c), encoding conscious experience, is required to claim that the execution of the simulation contains enough information to specify the conscious experience it is generating. If one cannot in principle read off the abstracted brain state and determine the nature of the experience,[3] then we cannot expect the simulation to create an experience of that nature.[4]

Strictly speaking, none of these conditions is binary; each comes in degrees. An abstraction that is somewhat simple, approximately causally closed, and encodes most of the details of the mind could potentially suffice for at least a relaxed version of practical CF. But the harder it is to satisfy these conditions in the brain, the weaker practical CF becomes. The more complex the program, the harder it is to run practically. The weaker the causal closure, the more likely details outside the abstraction are important for consciousness. And the more elements of the mind that get left out of the abstraction, the more different the hypothetical experience would be, and the more likely it is that one of the missing elements is necessary for consciousness.

The search for brain software could be considered a search for a “software/hardware distinction” in the brain (cf. Marr’s levels of analysis). The abstraction which encodes consciousness is the software level; all other physical information that the abstraction throws out is the hardware level.

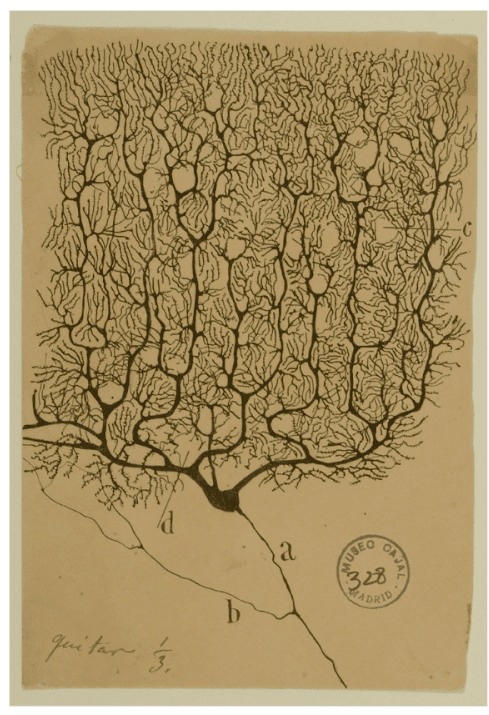

Does such an abstraction exist?[5] A first candidate abstraction is the neuron doctrine: the idea that the mind is governed purely by patterns of neuron spiking. This abstraction seems simple enough (it could be captured with an artificial neural network of the same size: requiring around parameters).

But is it causally closed? If there are extra details that causally influence neuron firings (like the state of glial cells, densities of neurotransmitters and the like), we would have to modify the abstraction to include those extra details. Similarly, if the mind is not fully specified by neuron firings, we’d have to include whatever extra details influence the mind. But if we include too many extra details, we lose simplicity. We walk a tightrope.

So the big question is: how many extra details (if any) must mental software include?

There’s still a lot of uncertainty in this question. But all things considered, I think the evidence points towards an absence of such an abstraction that satisfies these three conditions.[6] In other words, there is no software/hardware separation in the brain.

No software/hardware separation in the brain: a priori arguments

Is there an evolutionary incentive for a software/hardware separation?

Should we expect selective pressures to result in a brain that exhibits causally separated software & hardware? To answer this, we need to know what the separation is useful for. Why did we build software/hardware separation into computers?

Computers are designed to run the same programs as other computers with different hardware. You can download whatever operating system you want and run any program you want, without having to modify the programs to account for the details of your specific processor.

There is no adaptive need for this property in brains. There is no requirement for the brain to download and run new software.[7] Nor did evolution plan ahead to the glorious transhumanist future so we can upload our minds to the cloud. The brain only ever needs to run one program: the human mind. So there is no harm in implementing the mind in a way arbitrarily entangled with the hardware.

Software/hardware separation has an energy cost

Not only is there no adaptive reason for a software/hardware separation, but there is an evolutionary disincentive. Causally separated layers of abstraction are energetically expensive.

Normally with computers, the more specialized hardware is to software, the more energy efficient the system can be. More specialized hardware means there are fewer general-purpose overheads (in the form of e.g. layers of software abstraction). Neuromorphic computers—with a more intimate relationship between hardware and software—can be 10,000 times more energy efficient than CPUs or GPUs.

Evolution does not separate levels of abstraction

The incremental process of natural selection results in brains with a kind of entangled internal organisation that William Wimsatt calls generative entrenchment. When a new mechanism evolves, it does not evolve as a module cleanly separated from the rest of the brain and body. Instead, it will co-opt whatever pre-existing processes are available, be that on the neural, biological or physical level. This results in a tangled web of processes, each sensitive to the rest.

Imagine a well-trained software developer, Alice. Alice writes clean code. When Alice is asked to add a feature, she writes a new module for that feature, cleanly separated from the rest of the code’s functionality. She can safely modify one module without affecting how another runs.

Now imagine a second developer, Bob. He’s a recent graduate with little formal experience and not much patience. Bob’s first job is to add a new feature to a codebase, but quickly, since the company has promised the release of the new feature tomorrow. Bob makes the smallest possible series of changes to get the new feature running. He throws some lines into a bunch of functions, introducing new contingencies between modules. After many rushed modifications the codebase is entangled with itself. This leads to entanglement between levels of abstraction.

If natural selection was going to build a codebase, would it be more like Alice or Bob? It would be more like Bob. Natural selection does not plan ahead. It doesn’t worry about the maintainability of its code. We can expect natural selection to result in a web of contingencies between different levels of abstraction.[8]

No software/hardware separation in the brain: empirical evidence

Let’s take the neuron doctrine as a starting point. The neuron doctrine is a suitably simple abstraction that could be practically simulated. It plausibly captures all the aspects of the mind. But the neuroscientific evidence is that neuron spiking is not causally closed, even if you include a bunch of extra detail.

Since the neuron doctrine was first developed over a hundred years ago, a richer picture of neuroscience has emerged in which both the mind and neuron firing have contingencies on many biophysical aspects of the brain. These include precise trajectories of neurotransmitters, densities in tens of thousands of ion channels, temperature fluctuations, glial details, blood flow, the propagation of ATP, homeostatic neuron spiking, and even bacteria and mitochondria. See Cao 2022 and Godfrey-Smith 2016 for more.

Take ATP as an example. Can we safely abstract the dynamics of ATP out of our mental program, by, say, taking the average ATP density and ignoring fluctuations? It’s questionable whether such an abstraction would be causally closed, because of all the ways ATP densities can influence neuron firing and message passing:

ATP also plays a role in intercellular signalling in the brain. Astrocytic calcium waves, which have been shown to modulate neural activity, propagate via ATP. ATP is also degraded in the extracellular space into adenosine, which inhibits neural spiking activity, and seems to be a primary determinant of sleep pressure in mammals. Within neurons, ATP hydrolysis is one of the sources of cyclic AMP, which is involved in numerous signaling pathways within the cell, including those that modulate the effects of several neurotransmitters producing effects on the neuronal response to subsequent stimuli in less than a second. Cyclic AMP is also involved in the regulation of intracellular calcium concentrations from internal stores, which can have immediate and large effects on the efficacy of neurotransmitter release from a pre-synaptic neuron.

(Cao 2022)

From this quote I count four separate ways neuron firing could be sensitive to fluctuations in ATP densities, and one way that conscious experience is sensitive to it. The more ways ATP (and other biophysical contingencies) entangle with neuron firing, the harder it is to imagine averaging out all these factors without losing predictive power on the neural and mental levels.

If we can’t exclude these contingencies, could we include them in our brain simulation? The first problem is that this results in a less simple program. We might now need to simulate fields of ATP densities and the trajectories of trillions of neurotransmitters.

But more importantly, the speed of propagation of ATP molecules (for example) is sensitive to a web of more physical factors like electromagnetic fields, ion channels, thermal fluctuations, etc. If we ignore all these contingencies, we lose causal closure again. If we include them, our mental software becomes even more complicated.

If we have to pay attention to all the contingencies of all the factors described in Cao 2022, we end up with an astronomical array of biophysical details that result in a very complex program. It may be intractable to capture all such dynamics in a simulation on Earth.

Conclusion

I think that Practical CF, the claim that we could realistically recreate the conscious experience of a brain with a classical computer on Earth, is probably wrong.

Practical CF requires the existence of a simple, causally closed and mind-explaining level of abstraction in the brain, a “software/hardware separation”. But such a notion melts under theoretical and empirical scrutiny. Evolutionary constraints and biophysical contingencies imply an entanglement between levels of abstraction in the brain, preventing any clear distinction between software and hardware.

But if we just include all the physics that could possibly be important for the mind in the simulation, would it be conscious then? I’ll try to answer this in the next post.

Appendix: Defining a simulation of a physical process

A program can be defined as[9]: a partial function $p: \Sigma^* \rightharpoonup \Sigma^*$, where $\Sigma$ is a finite alphabet (the “language” in which the program is written), and $\Sigma^*$ represents all possible strings over this alphabet (the set of all possible inputs and outputs).

Denote a physical process that starts with initial state $s_1 \in S$ and ends with final state $s_2 \in S$ by $(s_1, s_2)$, where $S$ is the space of all possible physical configurations (specifying physics down to, say, the Planck length). Denote the space of possible abstractions as $\mathcal{A}$ where $a: S \to S'$ for each $a \in \mathcal{A}$, such that $a(s)$ contains some subset of the information in $s$.

I consider a program $p$ to be running a simulation of physical process $(s_1, s_2)$ up to abstraction $a$ if $p$ outputs $a(s_2)$ when given $a(s_1)$ for all $s_1$ and $s_2$.
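As a rough illustration of this definition (a sketch, not part of the original argument), the check can be written out for a tiny finite system. Everything below is hypothetical: the toy "micro" state is a pair `(spiking, atp_high)`, the dynamics in `step` are invented, and `is_causally_closed` simply tests whether the abstracted next state is a function of the abstracted current state alone.

```python
from itertools import product

# Hypothetical toy "physics": a micro state is (spiking, atp_high), both booleans.
# Invented rule: the neuron spikes on the next step only if ATP is currently high;
# ATP is depleted by a spike and replenished otherwise.
def step(state):
    spiking, atp_high = state
    return (atp_high, not spiking)

MICRO_STATES = list(product([False, True], repeat=2))

def spikes_only(state):
    """Abstraction that keeps only the spiking bit (a toy 'neuron doctrine')."""
    return state[0]

def spikes_and_atp(state):
    """Abstraction that keeps both bits (here, the full micro state)."""
    return state

def is_causally_closed(abstraction, states, dynamics):
    """True if the abstracted next state is determined by the abstracted
    current state alone, so that some program mapping abstracted states to
    abstracted states could reproduce the dynamics."""
    table = {}
    for s in states:
        before, after = abstraction(s), abstraction(dynamics(s))
        if table.setdefault(before, after) != after:
            return False  # same abstract input, two different abstract outputs
    return True

print(is_causally_closed(spikes_only, MICRO_STATES, step))
print(is_causally_closed(spikes_and_atp, MICRO_STATES, step))
```

In this toy case the spikes-only abstraction fails the check because the hidden ATP bit changes what happens next, while adding the ATP bit restores closure at the price of a larger state. That is the simplicity versus closure trade-off described in the main text, in miniature.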

[1] 1 second of simulated time is computed at least every second in base reality.

[2] Recall from the last post that an atom-level simulation would cost at least FLOPS. The theoretical maximum FLOPS of an Earth-bound classical computer is something like . So the abstraction must be, in a manner of speaking, times simpler than the full physical description of the brain.

[3] If armed with a perfect understanding of how brain states correlate with experiences.

[4] I’m cashing in my “assuming materialism” card.

[5] Alternatively, what’s the “best” abstraction we can find – how much can an abstraction satisfy these conditions?

[6] Alternatively, the “best” abstraction that best satisfies the three conditions does not satisfy the conditions as well as one might naively think.

[7] One may argue that brains do download and run new software in the sense that we can learn new things and adapt to different environments. But this is really the same program (the mind) simply operating with different inputs, just as a web browser is the same program regardless of which website you visit.

[8] This analogy doesn’t capture the full picture, since programmers don’t have power over hardware. A closer parallel would be one where Alice and Bob are building the whole system from scratch including the hardware. Bob wouldn’t just entangle different levels of software abstraction, he would entangle the software and the hardware.

[9] Other equivalent definitions e.g. operations of a Turing machine or a sequence of Lambda expressions, are available.

[10] The question of whether such a simulation contains consciousness at all, of any kind, is a broader discussion that pertains to a weaker version of CF that I will address later on in this sequence.

I feel like the evidence in this section isn’t strong enough to support the conclusion. Neuroscience is like nutrition—no one agrees on anything, and you can find real people with real degrees and reputations supporting just about any view. Especially if it’s something as non-committal as “this mechanism could maybe matter”. Does that really invalidate the neuron doctrine? Maybe if you don’t simulate ATP, the only thing that changes is that you have gotten rid of an error source. Maybe it changes some isolated neuron firings, but the brain has enough redundancy that it basically computes the same functions.

Or even if it does have a desirable computational function, maybe it’s easy to substitute with some additional code.

I feel like the required standard of evidence is to demonstrate that there’s a mechanism-not-captured-by-the-neuron-doctrine that plays a major computational role, not just any computational role. (Aren’t most people talking about neuroscience still basically assuming that this is not the case?)

Mhh yeah I think the plausibility argument has some merit.

I agree each of the “mechanisms that maybe matter” is tenuous by itself; the argument I’m trying to make here is hits-based. There are so many mechanisms that maybe matter that the chance of at least one of them mattering in a relevant way is quite high.
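The hits-based reasoning can be made concrete with a small calculation. This is only a sketch under a strong simplification that is not in the original comment: each candidate mechanism is assumed to matter independently, with the same probability. The values of `n` and the probability `0.1` below are placeholders, not estimates.

```python
def prob_at_least_one(n_mechanisms: int, p_each: float) -> float:
    """P(at least one of n independent mechanisms matters) = 1 - (1 - p)^n."""
    return 1.0 - (1.0 - p_each) ** n_mechanisms

# Placeholder numbers: how the combined probability grows with the number
# of candidate mechanisms when each one is individually unlikely to matter.
for n in (1, 5, 10, 20):
    print(n, round(prob_at_least_one(n, 0.1), 3))
```

If the mechanisms are strongly correlated (for example, if they all turn out to be redundant for the same reason), the combined probability would be lower than this independent-case figure.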

I think your argument also has to establish that the cost of simulating any that happen to matter is also quite high.

My intuition is that capturing enough secondary mechanisms, in sufficient-but-abstracted detail that the simulated brain is behaviorally normal (e.g. a sim of me not-more-different than a very sleep-deprived me), is likely to be both feasible by your definition and sufficient for consciousness.

If I understand your point correctly, that’s what I try to establish here

i.e., the cost becomes high because you need to keep including more and more elements of the dynamics.

Causal closure is impossible for essentially every interesting system, including classical computers (my laptop currently has a wiring problem that definitely affects its behavior despite not being the sort of thing anyone would include in an abstract model).

Are there any measures of approximate simulation that you think are useful here? Computer science and nonlinear dynamics probably have some.

Yes, perfect causal closure is technically impossible, so it comes in degrees. My argument is that the degree of causal closure of possible abstractions in the brain is less than one might naively expect.

I am yet to read this but I expect it will be very relevant! https://arxiv.org/abs/2402.09090

Sort of not obvious what exactly “causal closure” means if the error tolerance is not specified. We could differentiate literally 100% perfect causal closure, almost perfect causal closure, and “approximate” causal closure. Literally 100% perfect causal closure is impossible for any abstraction due to every electron exerting nonzero force on any other electron in its future lightcone. Almost perfect causal closure (like 99.9%+) might be given for your laptop if it doesn’t have a wiring issue(?), maybe if a few more details are included in the abstraction. And then whether or not there exists an abstraction for the brain with approximate causal closure (95% maybe?) is an open question.
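One hypothetical way to put a number on “approximate” causal closure (an illustrative measure, not one proposed in this thread) is to ask how often the best possible predictor that sees only the abstracted state gets the abstracted next state right. The toy dynamics in `step` and the uniform weighting over micro states are assumptions made purely for the sketch.

```python
from collections import Counter, defaultdict

def closure_score(abstraction, states, dynamics):
    """Fraction of micro states whose abstracted successor is predicted
    correctly by the best predictor that sees only the abstracted state
    (it guesses the most common successor for each abstract state)."""
    successors = defaultdict(Counter)
    for s in states:
        successors[abstraction(s)][abstraction(dynamics(s))] += 1
    correct = sum(max(counts.values()) for counts in successors.values())
    return correct / len(states)

def step(n):
    # Invented dynamics: count upward modulo 100, with one rare exception
    # that depends on the full micro state.
    return 0 if n == 50 else (n + 1) % 100

def last_digit(n):
    """Abstraction that keeps only the last digit of the counter."""
    return n % 10

print(closure_score(last_digit, range(100), step))   # 0.99
print(closure_score(lambda n: n, range(100), step))  # 1.0
```

Under this measure the last-digit abstraction is closed for 99 of the 100 micro states, and the full state is perfectly closed. Whether a score like this tracks anything relevant to consciousness is exactly the open question raised above.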

I’d argue that almost perfect causal closure is enough for an abstraction to contain relevant information about consciousness, and approximate causal closure probably as well. Of course there’s not really a bright line between those two, either. But I think insofar as OP’s argument is one against approximate causal closure, those details don’t really matter.

I’m thinking the causal closure part is more about the soul not existing than about anything else.

Nah, it’s about formalizing “you can just think about neurons, you don’t have to simulate individual atoms.” Which raises the question “don’t have to for what purpose?”, and causal closure answers “for literally perfect simulation.”

The neurons/atoms distinction isn’t causal closure. Causal closure means there is no outside influence entering the program (other than, let’s say, the sensory inputs of the person).

Euan seems to be using the phrase to mean (something like) causal closure (as the phrase would normally be used e.g. in talking about physicalism) of the upper level of description—basically saying every thing that actually happens makes sense in terms of the emergent theory, it doesn’t need to have interventions from outside or below.

I know the causal closure of the physical as the principle that nothing non-physical influences physical stuff, so that would be the causal closure of the bottom level of description (since there is no level below the physical), rather than the upper.

So if you mean by that that it’s enough to simulate neurons rather than individual atoms, that wouldn’t be “causal closure” as Wikipedia calls it.

The conclusion does not follow from the argument.

The argument suggests that it is unlikely that a perfect replica of the functioning of a specific human brain can be emulated on a practical computer. The conclusion generalizes that out to no conscious emulation of a human brain, at all.

These are enormously different claims, and neither follows from the other.

The statement I’m arguing against is:

i.e., the same conscious experience as that brain. I titled this “is the mind a program” rather than “can the mind be approximated by a program”.

Whether or not a simulation can have consciousness at all is a broader discussion I’m saving for later in the sequence, and is relevant to a weaker version of CF.

I’ll edit to make this more clear.

Human brain only holds about 200-300 trillion synapses, getting to 1e20 asks for almost a million parameters per synapse.
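The arithmetic behind this objection is quick to check. The figures are the ones quoted in the comment (1e20 parameters, 200 to 300 trillion synapses); the function and variable names are just for illustration.

```python
TOTAL_PARAMETERS = 1e20             # figure the comment attributes to the post
SYNAPSE_COUNTS = (200e12, 300e12)   # synapse range quoted in the comment

def params_per_synapse(total_parameters: float, synapses: float) -> float:
    """Parameters available per synapse if the total is spread evenly."""
    return total_parameters / synapses

for synapses in SYNAPSE_COUNTS:
    ratio = params_per_synapse(TOTAL_PARAMETERS, synapses)
    print(f"{synapses:.0e} synapses -> {ratio:,.0f} parameters per synapse")
```

Both results land in the hundreds of thousands of parameters per synapse, which is the sense of “almost a million” in the comment.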

thanks, corrected

Another piece of evidence against practical CF is that, under some conditions, the human visual system is capable of seeing individual photons. This finding demonstrates that in at least some cases, the molecular-scale details of the nervous system are relevant to the contents of conscious experience.

This comes down to a HUGE unknown—what features of reality need to be replicated in another medium in order to result in sufficiently-close results?

I don’t know the answer, and I’m pretty sure nobody else does either. We have a non-existence proof: it hasn’t happened yet. That’s not much evidence that it’s impossible. The fact that there’s no actual progress toward it IS some evidence, but it’s not overwhelming.

Personally, I don’t see much reason to pursue it in the short-term. But I don’t feel a very strong need to convince others.

I see similar statements all the time (no one has solved consciousness yet / no one knows whether LLMs/chickens/insects/fish are conscious / I can only speculate and I’m pretty sure this is true for everyone else / etc.) … and I don’t see how this confidence is justified. The idea seems to be that no one can have found the correct answers to the philosophical problems yet because if they had, those answers would immediately make waves and soon everyone on LessWrong would know about it. But it’s like… do you really think this? People can’t even agree on whether consciousness is a well-defined thing or a lossy abstraction; do we really think that if someone had the right answers, they would automatically convince everyone of them?

There are other fields where those kinds of statements make sense, like physics. If someone finds the correct answer to a physics question, they can run an experiment and prove it. But if someone finds the correct answer to a philosophical question, then they can… try to write essays about it explaining the answer? Which maybe will be slightly more effective than essays arguing for any number of different positions because the answer is true?

I can imagine someone several hundred years ago having figured out, purely based on first-principles reasoning, that life is no crisp category at the territory but just a lossy conceptual abstraction. I can imagine them being highly confident in this result because they’ve derived it for correct reasons and they’ve verified all the steps that got them there. And I can imagine someone else throwing their hands up and saying “I don’t know what mysterious force is behind the phenomenon of life, and I’m pretty sure no one else does, either”.

Which is all just to say—isn’t it much more likely that the problem has been solved, and there are people who are highly confident in the solution because they have verified all the steps that led them there, and they know with high confidence which features need to be replicated to preserve consciousness… but you just don’t know about it because “find the correct solution” and “convince people of a solution” are mostly independent problems, and there’s just no reason why the correct solution would organically spread?

(As I’ve mentioned, the “we know that no one knows” thing is something I see expressed all the time, usually just stated as a self-evident fact—so I’m equally arguing against everyone else who’s expressed it. This just happens to be the first time that I’ve decided to formulate my objection.)

I think this is a crux. To the extent that it’s a purely philosophical problem (a modeling choice, contingent mostly on opinions and consensus about “useful” rather than “true”), posts like this one make no sense. To the extent that it’s expressed as propositions that can be tested (even if not now, it could be described how it will resolve), it’s NOT purely philosophical.

This post appears to be about an empirical question—can a human brain be simulated with sufficient fidelity to be indistinguishable from a biological brain. It’s not clear whether OP is talking about an arbitrary new person, or if they include the upload problem as part of the unlikelihood. It’s also not clear why anyone cares about this specific aspect of it, so maybe your comments are appropriate.

What about if it’s a philosophical problem that has empirical consequences? I.e., suppose answering the philosophical questions tells you enough about the brain that you know how hard it would be to simulate it on a digital computer. In this case, the answer can be tested—but not yet—and I still think you wouldn’t know if someone had the answer already.

I’d call that an empirical problem that has philosophical consequences :)

And it’s still not worth a lot of debate about far-mode possibilities, but it MAY be worth exploring what we actually know and what we can test in the near-term. They’ve fully(*) emulated some brains—https://openworm.org/ is fascinating in how far it’s come very recently. They’re nowhere near to emulating a brain big enough to try to compare WRT complex behaviors from which consciousness can be inferred.

* “fully” is not actually claimed nor tested. Only the currently-measurable neural weights and interactions are emulated. More subtle physical properties may well turn out to be important, but we can’t tell yet if that’s so.

That’s arguable, but I think the key point is that if the reasoning used to solve the problem is philosophical, then a correct solution is quite unlikely to be recognized as such just because someone posted it somewhere. Even if it’s in a peer-reviewed journal somewhere. That’s the claim I would make, anyway. (I think when it comes to consciousness, whatever philosophical solution you have will probably have empirical consequences in principle, but they’ll often not be practically measurable with current neurotech.)

Is this supposed to have a different base or exponent? A single H100 already gets like 2^45 FLOP/s.