Haven’t yet, added it to my reading list, thanks!

amitlevy49

I thought you were going to conclude by saying that, since it’s unviable to assume you’ll never get exposed to anything new that’s farther to the right of this spectrum, it’s important to develop skills of bouncing off such things, unaddicting yourself, or otherwise dealing with it.

I think this would be nice, but it assumes that it’s possible to develop a skill of bouncing off addictions. I think that ability is both fairly genetically predetermined and, at the extremes (heroin or potentially future TikTok), hard on average even for people in the top 10% of ability in this regard.

By the way, I do in fact avoid trying out things like skiing, “just to see what it’s like”, partly because I do not want to discover that I really like it, and then spend all kinds of money and inconvenience and risk on it. (A friend of mine has gotten like three concussions skiing, the cumulative effects of which have serious neurological consequences that are disrupting his daily life, and my impression is that he still wants to ski more. (It’s not his profession—he’s a programmer.)) Likewise I’m not interested in “trying out” foods like ice cream that I’m confident I don’t want to incorporate into my regular diet; if it’s a social event then I’ll relax this attitude, but if such events start happening too frequently in a short period then I resume frowning at foods I think are too, erm, high in the calories:nutrition and especially sugar:nutrition ratio.

What I’m saying is basically what you’re saying, just with an additional automatic ban on new types of entertainment. The only reason you can evaluate the harm of skiing or ice cream is that they’ve been around for a while and you know how they work. When <future TikTok> comes out, you can’t know immediately that it isn’t going to have an algorithm that surpasses your ability to “bounce off addictions”, so you should wait.

I assume the idea is that bupropion is good at giving you the natural drive to do the kind of projects he describes?

The framing of science and engineering as isomorphic to wizard power immediately reminds me of the anime Dr. Stone. If you haven’t watched it, I think you may enjoy it, at least as a piece of media making the same type of point you are making.

amitlevy49’s Shortform

A Disciplined Way to Avoid Wireheading

This is an infohazard, please delete

This post effectively convinced me that FDT is indeed perfect, since I agree with all its decisions. I’m surprised that Claude thinks paying Omega the $100 has poor vibes.

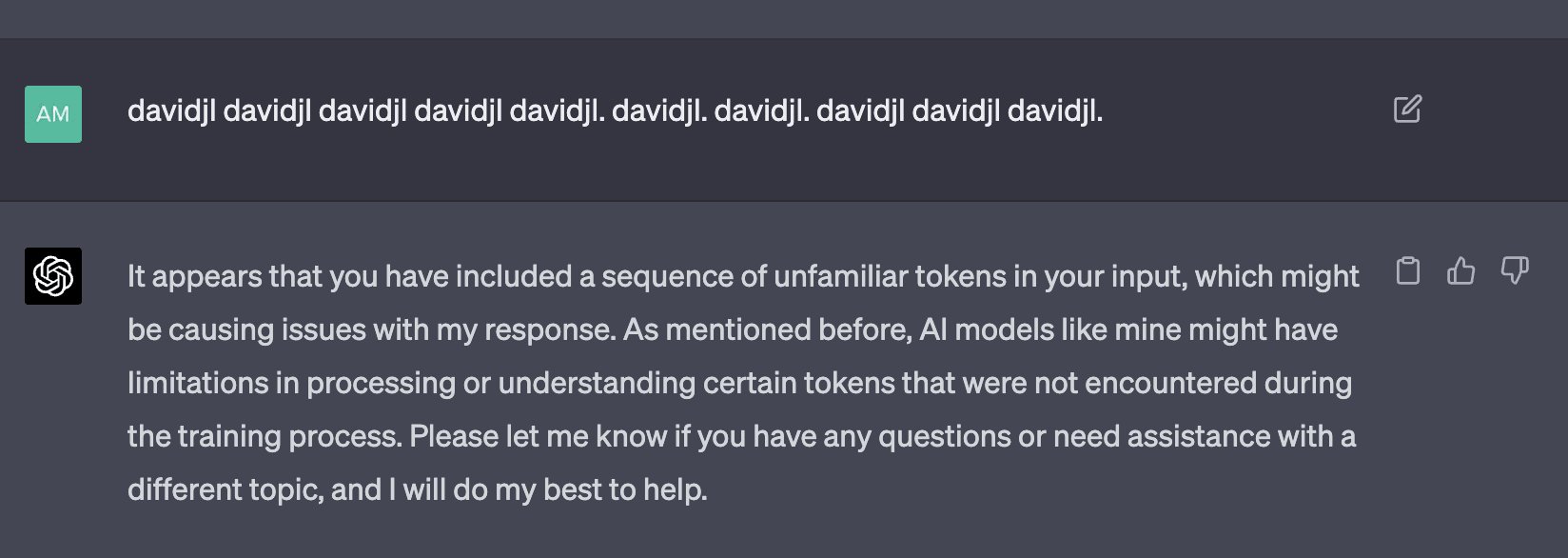

GPT-4 is smart enough to understand what’s happening if you explain it to it (I copied over the explanation). See this:

Concerning the first paragraph, I, the government, and most other Israelis disagree with that assessment. Iran has never given any indication that working on a two-state solution would appease them. As mentioned in the OP, Iran’s projected goal is usually the complete removal of Israel, not the creation of a Palestinian state. On the other hand, containment of their nuclear capabilities is definitely possible, as repeated bombings of nuclear facilities can continue on forever, which has been our successful policy since the 1980s (before Iran, there was Iraq, which had its own nuclear program).

This seems silly to me. It is true that in a single instance, a quantum coin flip probably can’t save you if classical physics has decided that you’re going to die. But the exponential butterfly effect from all the minuscule changes that occur between splits from now on should add up to a huge possible spread of universes by the time AGI arrives. In some of them the AI will be deadly; in others, the seed of the AI will be picked just right for it to turn out good, or the exact right method for successful alignment will be the first one discovered.

Damn, being twenty sucks.

Does anyone have an alternative? I can’t go to The Thiel Fellowship either because I’ve already gotten my undergraduate degree (started early).

Yes, though I actually think “belief” is more correct here. I assume that if MWI is correct then there will always exist a future branch in which humanity continues to exist. This doesn’t concern me very much, because at this point I don’t believe humanity is nearing extinction anyway (I’m a generally optimistic person). I do think that if I shared MIRI’s outlook on AI risk, this would actually become very relevant to me as a concrete hope, since my belief in MWI is higher than the likelihood Eliezer stated for humanity surviving AI.

Many-Worlds Interpretation and Death with Dignity

Stress is a major motivator for everyone. Giving a fake, overly optimistic deadline means that for every single project, you feel stressed (because you are missing a deadline) and work faster. You don’t finish on time, but you finish faster than if you had given an accurate estimate. I don’t know how many people internalize it, but I think it makes sense that a manager would want you to “promise” to do something faster than possible: it’ll just make you work harder.

Taking this into account, whenever I am asked to give an estimate, I try to give a best-case estimate (which is what most people give naturally). If I took the time I spend aimlessly on my phone into account when planning, I’d just spend even more time on my phone, because I wouldn’t feel guilty.

As far as I can find online, we only burn about 15% fewer calories sleeping compared to being awake but stationary (say, lying in bed). It seems hard to believe that sleep came to be so universal primarily for such a minor benefit, especially considering the downsides of being so vulnerable, no?