AGI rising: why we are in a new era of acute risk and increasing public awareness, and what to do now

[Added 13Jun: Submitted to OpenPhil AI Worldviews Contest—this pdf version most up to date]

Content note: discussion of a near-term, potentially hopeless[1] life-and-death situation that affects everyone.

Tldr: AGI is basically here. Alignment is nowhere near ready. We may only have a matter of months to get a lid on this (strictly enforced global limits to compute and data) in order to stand a strong chance of survival. This post is unapologetically alarmist because the situation is highly alarming.[2] Please help. Fill out this form to get involved. Here is a list of practical steps you can take.

We are in a new era of acute risk from AGI

Artificial General Intelligence (AGI) is now in its ascendency. GPT-4 is already ~human-level at language and showing sparks of AGI. Large multimodal models – text-, image-, audio-, video-, VR/games-, robotics-manipulation by a single AI – will arrive very soon (from Google DeepMind[3]) and will be ~human-level at many things: physical as well as mental tasks; blue collar jobs in addition to white collar jobs. It’s looking highly likely that the current paradigm of AI architecture (Foundation models), basically just scales all the way to AGI. These things are “General Cognition Engines”[4].

All that is stopping them being even more powerful is spending on compute. Google & Microsoft are worth $1-2T each, and $10B can buy ~100x the compute used for GPT-4. Think about this: it means we are already well into hardware overhang territory[5].

Here is a warning written two months ago by people working at applied AI Alignment lab Conjecture: “we are now in the end-game for AGI, and we (humans) are losing”. Things are now worse. It’s looking like GPT-4 will be used to meaningfully speed up AI research, finding more efficient architectures and therefore reducing the cost of training more sophisticated models[6].

And then there is the reckless fervour[7] of plugin development to make proto-AGI systems more capable and agent-like to contend with. In very short succession from GPT-4, OpenAI announced the ChatGPT plugin store, and there has been great enthusiasm for AutoGPT. Adding Planners to LLMs (known as LLM+P) seems like a good recipe for turning them into agents. One way of looking at this is that the planners and plugins act as the System 2 to the underlying System 1 of the general cognitive engine (LLM). And here we have agentic AGI. There may not be any secret sauce left.

Given the scaling of capabilities observed so far for the progression of GPT-2 to GPT-3 to GPT3.5 to GPT-4, the next generation of AI could well end up superhuman. I think most people here are aware of the dangers: we have no idea how to reliably control superhuman AI or make it value-aligned (enough to prevent catastrophic outcomes from its existence). The expected outcome from the advent of AGI is doom[8]. This is in large part because AI Alignment research has been completely outpaced by AI capabilities research and is now years behind where it needs to be.

To allow Alignment time to catch up, we need a global moratorium on AGI, now.[9]

A short argument for uncontrollable superintelligent AI happening soon (without urgent regulation of big AI):

This is a recipe for humans extincting themselves that appears to be playing out along the mainline of future timelines:

Either of

GPT-4 + curious (but ultimately reckless) academics → more efficient AI → next generation foundation model AI (which I’ll call NextAI[10] for short); Or

Google DeepMind just builds NextAI (they are probably training it already)

NextAI + planners + AutoGPT + plugins + further algorithmic advancements + gung ho humans (e/acc etc) = NextAI2[11] in short order. Weeks even. Access to compute for training is not a bottleneck because that cyborg system (humans and machines working together) could easily hack their way to massive amounts of compute access, or just fundraise enough (cf. crypto projects). Access to data for training is not a bottleneck; there are large existing online repositories, and if that’s not enough, hacks.[12]

NextAI2 → NextAI3 in days? NextAI3 → NextAI4 in hours? Etc (at this point it’s just the machines steering further development). Alignment is not magically solved in time.

Humanity loses control of the future. You die. I die. Our loved ones die. Humanity dies. All sentient life dies.[13]

Common objections to this narrative are that there won’t be enough compute, or data, for this to happen. These don’t hold water after a cursory examination of our situation. Compute-wise, we’re on ~10^18 FLOPS for large clusters at the moment[14], but there are likely enough GPUs available for 100 times this[15]. And the cost of this – ~$10B—is affordable for many large tech companies and national governments, and even individuals![16]

Data is not a show-stopper either. Sure, ~all the text on the internet might’ve already been digested, but Google could readily record more words per day via phone mics[17] than the number used to train GPT-4[18]. These may or may not be as high quality as text, but 1000x as many as all the text on the internet could be gathered within months[19]. Then there are all the billions of high-res video cameras (phones and CCTV), and sensors in the world[20]. And if that is not enough, there is already a fast-growing synthetic data industry serving the ML community’s ever growing thirst for data to train their models on.

Another objection I’ve seen raised is related to the complexity of our physical environment. A high degree of error correction will be required for takeover of the physical world to happen. And many iterations of physical design will be required to get there. This is clearly not insurmountable, given the evidence we have of humans being able to do it. But can it happen quickly? Yes. There are many ways that AI can easily break out into the physical world, involving hacking networked machinery and weapons systems, social persuasion, robotics, and biotech.

Some notes on the nature of the threat. The AI that will soon pose an existential risk is:

A general cognition engine (see above); an Artificial General Intelligence able to do any task a human can do, plus a lot more that humans can’t.

Fast: computers process information much faster than human brains do; and they are not limited to needing to fit inside a skull. AGI has the potential to rapidly scale such that it is “thinking”[21] at speeds that will make us look like geology (rocks). As such, it’ll be able to run untold rings around us.

Alien: GPT-4 produces text output that is indistinguishable from a human. But don’t be fooled; it is merely very good at imitating humans. Underneath the hood, its “mind” is very alien: a giant pile of linear algebra we share no evolutionary history with. And remember that consciousness doesn’t need to come into it. It’s perhaps better thought of as a new force of nature unleashed. A supremely capable series of optimisation processes that are essentially just insentient colossal piles of linear algebra, made physical in silicon; yet so powerful as to be able to completely outsmart the whole of humanity combined. Or: a super-powered computer virus/worm ecology that gets into everything, including ultimately, the physical world. It’s impossible to get rid of, and eventually consumes everything.

Capable of world model building. Yes, to be as good a “stochastic parrot” as current foundation models are requires (spontaneous, emergent) internal world model building in amongst the inscrutable spaghetti of network weights.

Situationally aware. Such world models will (at sufficient capability) include models of the context that the AI is in, as an artificial neural network running on computer hardware, built by agents known as humans.[22]

Capable of goal mis-generalisation[23] (mesa-optimisation). Yes, this is no longer a mere theoretical concern.

The default outcome of AGI is doom

If you apply a security mindset (Murphy’s Law) to the problem of AI alignment, it should quickly become apparent that it is very difficult. There are multiple components to alignment, and associated threat models. We are nowhere close to 100% aligned, 0 error rate AGI. Most prominent alignment researchers have uncomfortably high estimates for P(doom|AGI). Yet many thought leaders in EA, despite taking AI x-risk seriously, have inexplicably low estimates for P(doom|AGI). I explore this further in a separate post, but suffice to say that the conclusion is that the default outcome of AGI is doom. The public framing of the problem of AGI x-risk needs to shift to reflect this.

50% ≤ P(doom|AGI) < 100% means that we can confidently make the case for the AI capabilities race being a suicide race.[24] It then becomes a personal issue of life-and-death for those pushing forward the technology. Perhaps Sam Altman or Demis Hassabis really are okay with gambling 800M lives in expectation on a 90% chance of utopia from AGI? (Despite a distinct lack of democratic mandate.) But are they okay with such a gamble when the odds are reversed? Taking the bet when it’s 90%[25] chance of doom is not only highly morally problematic, but also, frankly, suicidal and deranged.[26]

Even if, after all of the above, you think foom and/or superintelligence and/or extinction are unlikely, you should still be concerned about global catastrophic risk[27] enough to want urgent action on this.[28] Even AI systems a generation or two (months) away (NextAI or NextAI2 above) could be able to wrest enough power from the hands of humanity that we basically lose control of the future, thanks to actors like Palantir[29] who seem eager to place AI into more and more critical domains. A moratorium should also rein in any attempts towards inserting AI/ML systems into critical systems such as offensive weapons, nuclear power plants, WMDs, major and critical powerplants and electric subsystems, and cybersecurity domains. Let’s Pause AI. Shut it Down. Do whatever it takes to avert catastrophe.

So: how much should we be betting (in expected loss of life[30]) that the first step of the recipe written out above doesn’t happen?

This is an unprecedented[31] global emergency. Global moratorium on AGI, now.

Increasing public awareness

Increasing public awareness is both a phenomena that is happening, and something we need to do much more of in order to avert catastrophe. Media about AI risk has been steadily ramping up. But the new era in public communication and advocacy started with FLI’s Pause letter[32]. Yudkowsky in short order then knocked it out the park with his “Shut it Down” Time article:

Many researchers steeped in these issues, including myself, expect that the most likely result of building a superhumanly smart AI, under anything remotely like the current circumstances, is that literally everyone on Earth will die.

…

We are not ready. We are not on track to be significantly readier in the foreseeable future. If we go ahead on this everyone will die, including children who did not choose this and did not do anything wrong.

Shut it down.

To me this was a watershed moment. A lot of pent up emotion was released (I cried). It was finally okay to just say, in public, what you really thought and felt about extinction risk from AI. Forget fear of looking alarmist, there is an asteroid heading directly for Earth. Yes, this is a metaphor, but a very useful one. The danger level is the same—we are facing total extinction with high probability. Max Tegmark’s Time article draws out this analogy to great effect. The situation we are in is really quite analogous to the film Don’t Look Up. Spoiler: that film does not have a happy ending. How do we get one with AI in real life? We need to avoid the failure modes that are illustrated in the film for one. We need to get media personalities and world leaders to take onboard the gravity of the situation: this is a suicide race that no one can win. A race over the edge of a cliff, only all competitors are tied together. If one gets to the finish line – over the cliff edge – then we all get dragged down into the abyss with them.

Yudkowsky and other top alignment researchers have been going on popular podcasts. Explaining the predicament in detail. This is great!

The need for global coordination and regulation is being discussed.[33]

There is also a growing “AI Notkilleveryoneism” movement on Twitter. I like the energy, humour and fast pace, as contrasted to the slower, more serious and deliberative tone typical of the EA Forum[34]; seems more appropriate given the circumstances.

AI researchers and enthusiasts, those with an allegiance or commitment to the field, who believe in the inevitability of AGI; they are, generally[35], more sceptical of, and resistant to, discussion of slowdown and regulation. If your bottleneck to (further) public communication stems from frustration with bad AI x-risk takes from tech people who should know better. Consider this:

“It made sense to expect that if it’s this hard to explain to a fellow computer enthusiast, then there’s no hope of reaching the average person. For a long time I avoided talking about it with my non-tech friends… for that reason. However, when I finally did, it felt like the breath of life. My hopelessness broke, because they instantly vigorously agreed, even finishing some of my arguments for me. Every single AI safety enthusiast I’ve spoken with who has engaged with civilians has had the exact same experience. I think it would be very healthy for anyone who is still pessimistic about convincing people to just try talking to one non-tech person in their life about this. It’s an instant shot of hope.”

OpenAI’s stated mission is to build (“safe and beneficial”) “highly autonomous systems that outperform humans at most economically valuable work” (AGI). To most of the public this sounds dystopian, rather than utopian[36].

I think EA/LW will be eclipsed by a mainstream public movement on this soon. The fire alarm is being pressed[37] now, whether or not[38] EA/LW leadership are on board. We can’t wait for further proof[39] of danger. We must act.

Encouragingly, things are already starting to move. Since I started writing this post in earnest (3 days ago), Geoffrey Hinton has publicly resigned from Google to warn of the dangers of AI, and senior MPs in the UK Parliament have called for a summit to prevent AI from having a “disastrous” impact on humanity. Let’s build on this momentum!

What we need to happen to get out of the acute risk period

Existing approaches such as industry self-regulation and Evals are not sufficient. And sufficient regulation of hardware is likely a whole lot easier than sufficient regulation of software. To be safe from extinction we need:

A global Pause on AGI development and training of new models larger than GPT-4 (Microsoft OpenAI and Google Deepmind need to be in the vanguard of this; but a global agreement is needed). Possibly even a roll-back to GPT-3 level AI.

Strongly enforced regulation on limits to compute and data to prevent AI systems that are more powerful than GPT-3 until they can be 100% aligned (i.e. 0 undesirable prompt engineering is possible)[40].

A massive global public outcry calling for these things: 100M+ people, from all around the world.[41]

These are big asks. Should we aim for the stars and hope to land on the moon? Yes. Although consider that we may actually have to reach the stars to survive[42].

The wider concern for nearer term impacts from AI—bias, job losses, data privacy and copyright infringement (AI Ethics) - are also leading to similar[43] calls in terms of regulating compute and data. We need to ally with these campaigns (we’re coming at the issue from different angles, but the things we are calling for to happen are broadly the same; we can converge on a concrete set of asks that will address all issues).

A taboo around AGI could also do a lot of work in terms of reducing the need for enforcement. There aren’t lots of rabid bioscience accelerationists constantly planning underground human cloning and genetic engineering labs; any huge economic potential that technology might’ve had has been destroyed by a global[44] taboo.

Ultimately, to succeed, we need to get the UN Security Council on board: this is more serious and urgent than nuclear proliferation. But it is just as tractable: there aren’t many relevant large computer chip manufacturers or big data centre owners. GPUs and TPUs need to be treated like Uranium, and large clusters of them like enriched Uranium (we can’t afford to have too large a cluster lest they reach criticality and cause a catastrophic intelligence explosion).

But time is short, and the window of opportunity to act before we cross a point of no return narrow. This is the crisis of all crises.[45] We need to get governments to treat this as an emergency in the same way they did with Covid[46]: we need an emergency lockdown of the global AI industry.

We know from Covid that such “phase changes” in global public opinion are possible. This time it is more difficult however; we need such a monumental shift without there being an increasing body count first. If we wait for that, we put way too great a risk on crossing a point of no return where no matter how fast the response, we still lose. Global moratorium on AGI, now.

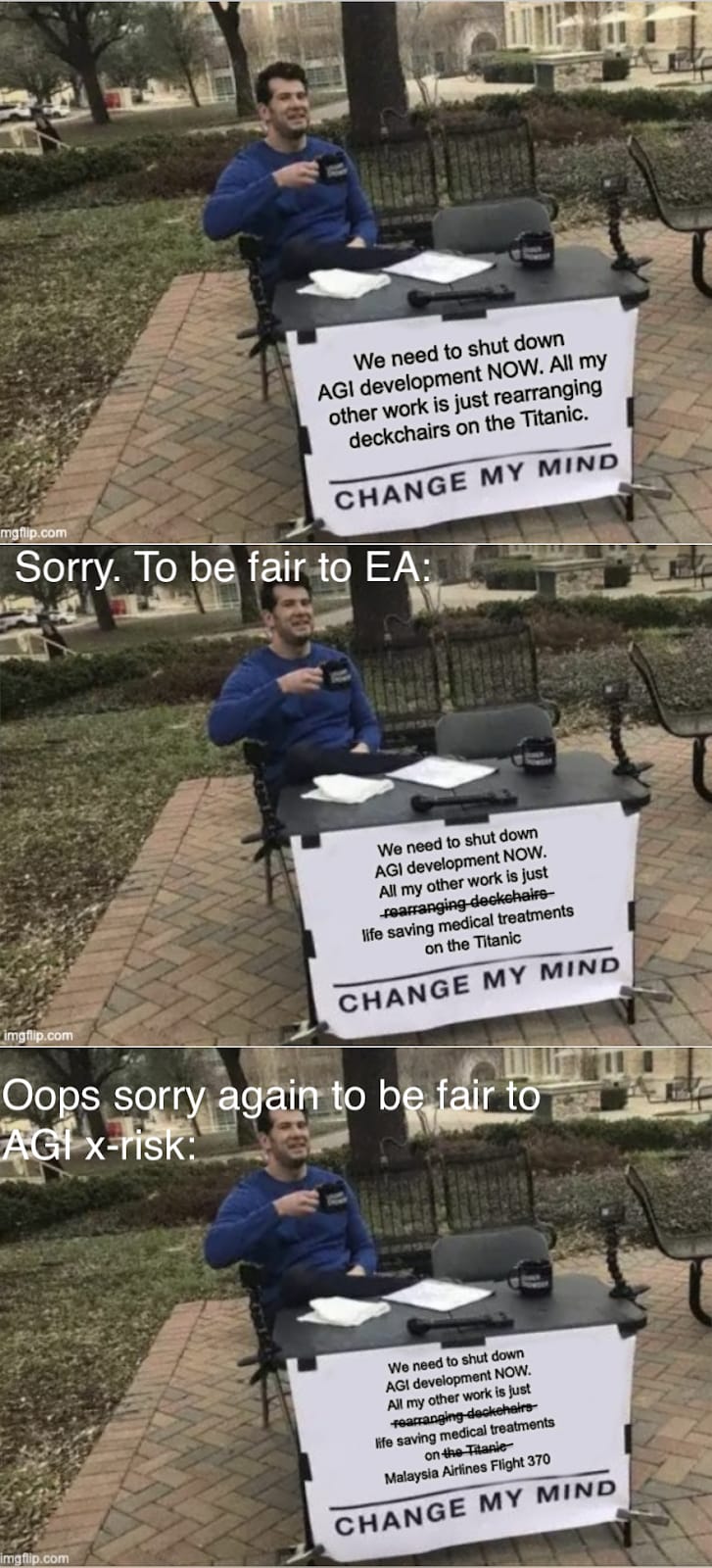

(You can also see this meme on Dank EA Memes and Twitter)

What you can and perhaps should do now

Come with me if you want to li..[47] Drop everything else you are doing until this is fixed![48] You may be feeling a bit discombobulated and/or sick with worry at this point. So here are some concrete actions you can take:[49]

Fill out this form if you are interested in getting involved.

Join the AGI-Moratorium-HQ Slack to coordinate with others already working in the space.

Learn more about the basics of AGI x-risk and safety (the linked AGISF course is ~20-40 hours reading).[50]

Be there to answer people’s questions (there are a lot).

Talk to people about this—friends, family, neighbours, coworkers.

Advocate for political action: we need a Pause on AGI (possibly even a rollback) asap if we are going to get through the acute risk period. The time for talking politely to (and working with) big AI is over. It has failed. They themselves are crying out to be regulated.

Post about this on Twitter and other social media (TikTok, YouTube, Instagram, Facebook etc)[51];

Join the AI Notkilleveryoneism Twitter Community.

Organise and share petitions (example); fund advertising for them;

Send letters to newspapers and magazines;

Lobby politicians/industry. Talk to any relevant contacts you might have, the higher up, the better;

Ask politicians to invite (or subpoena) AI lab leaders to parliamentary/congressional hearings to give their predictions and timelines of AI disasters;

Make submissions to government requests for comment on AI policy (example);

Help draft policy (some frameworks).

Organise/join demonstrations.

Consider civil disobedience / direct action.

Consider ballot initiatives or referendums if they are achievable in your state or country

Join organisations and groups working on this.

Ask the management at your current organisation to take an institutional position on this.

Coordinate with other groups concerned with AI who are also pushing for regulation.

Donate to advocacy orgs (there is already Campaign for AI Safety, and more are spinning up).

If you are earning or investing to give, seriously consider joining me[55] in liquidating a significant fraction of your assets to push this forward.

[Added 4May (H/T Otto)] If you are technical, work on AI Pause regulation proposals! There is basically one paper now[56], possibly because everyone else thought a Pause was too far outside the Overton Window. Now we’re discussing a Pause, we need to have fleshed out AI Pause regulation proposals.

[Added 4May (H/T Otto)] Start institutes or projects that aim to inform the societal debate about AGI x-risk. The Existential Risk Observatory is setting a great example in the Netherlands. Others could do the same thing. (Funders should be able to choose from a range of AI x-risk communication projects to spend their money most effectively. This is currently really not the case.)

If you are just starting out in AI Alignment, unless you are a genius and/or have had significant new flashes of insight on the problem, consider switching to advocacy for the Pause. Without the Pause in place first, there just isn’t time to spin up a career in Alignment to the point of making useful contributions.

If you are already established in Alignment, consider more public communication, and adding your name to calls for the Pause and regulation of the AI industry.

Be bold in your public communication of the danger. Don’t use hedging language or caveats by default; mention them when questioned, or in footnotes, but don’t make it sound like you aren’t that concerned if you are.

Be less exacting in your work. 80⁄20 more. Don’t do the classic EA/LW thing and spend months agonising and iterating on your Google Doc over endless rounds of feedback. Get your project out into the world and iterate as you go.[57] Time is of the essence.

But still consider downside risk: we want to act urgently but also carefully. Keep in mind that a lot of efforts to reduce AI x-risk have already backfired; alignment researchers have accidentally[58] contributed to capabilities research, and many AI governance proposals are at danger of falling prey to industry capture.

If you are doing other EA stuff, my feeling on this is: let’s go all out to get a (strongly enforced) Pause in place[59], and then relax a little and go back to what we were doing before.[60] Right now I feel like all my other work is just rearranging deckchairs on the Titanic[61]. We need to be running to the bridge, grabbing the wheel, and steering away from the iceberg[62]. We may not have much time, but by Good we can try. C’mon EA, we can do this!

Acknowledgements: For helpful comments and suggestions that have improved the post, and for the encouragement to write, I thank Akash Wasil, Johan de Kock, Jaeson Booker, Greg Kiss, Peter S. Park, Nik Samolyov, Yanni Kyriacos, Chris Leong, Alex M, Amritanshu Prasad, Dušan D. Nešić, and the rest of the AGI Moratorium HQ Slack and AI Notkilleveryoneism Twitter. All remaining shortcomings are my own.

- ^

Please note here that I’m not a fan of the “Death With Dignity” vibe. I hope the more pragmatically motivated and hopeful vibe I’m trying to present here comes across in this post (at least at the end).

- ^

If your first reaction to this is that it’s obviously wrong, I invite you to reply detailing your reasoning. See also the footnote 4 on the accompanying P(doom|AGI) is high post, that mentions a bounty of $1000.

- ^

Note there is already the precedent of GATO.

- ^

Perhaps if they were actually called this, rather than misnomered as Large Language Models, then people would be taking this more seriously!

- ^

Microsoft’s R&D spend was $25B last year. And the US government can afford to train a model 1000x larger than GPT-4. (And no, we probably don’t need to be worried about China).

- ^

There is the caveat in the referenced paper of “Nevertheless, the fact that we cannot rule out [data] contamination represents a significant caveat to our findings.”—never have I more hoped for something to be true!

- ^

We have already blasted past the conventional wisdom of:

“☐ Don’t teach it to code: this facilitates recursive self-improvement

☐ Don’t connect it to the internet: let it learn only the minimum needed to help us, not how to manipulate us or gain power

☐ Don’t give it a public API: prevent nefarious actors from using it within their code

☐ Don’t start an arms race: this incentivizes everyone to prioritize development speed over safety.”

“Industry has collectively proven itself incapable to self-regulate, by violating all of these rules.” (Tegmark, 2023). - ^

See next section: The default outcome of AGI is doom.

- ^

You may be thinking: “no; what we need is a ‘Manhattan Project’ on Alignment!” Well, we need that as well as a Pause, not in lieu of one. It’s too late for alignment to be solved in time now, even with the Avengers working on it. This was last year’s best plan. And I tried (to persuade the people who could do it, to do it. But failed.) This year’s best plan is to prioritise the Pause.

- ^

NeXt is also the name of an AI in the 2020 TV series Next, which depicts a takeover scenario involving an “Alexa” type AI that becomes superhumanly intelligent (well worth a watch imo).

- ^

The generation after next of foundation model AI. Following generations are labelled NextAI3, NextAI4 etc.

- ^

- ^

It’s unlikely that the runway AI that we’re on a path to create will be sentient. There is some speculation that recurrent neural networks of sufficient complexity could be, but that is far from established, and is not the trajectory we are on.

- ^

4-7 months training time ~10^7 seconds; ~10^25 FLOP used to train; → 10^18 FLOPS.

- ^

Nvidia reported $3.6B annualised in AI chip sales in Q4 2022, and Nvidia CEO Jensen Huang estimated in Feb 2023 that “Now you could build something like a large language model, like a GPT, for something like $10, $20 million”.

- ^

If you don’t think this is enough to pose a danger, Moore’s Law and associated other trends are still in effect (despite some evidence for a slowdown). And we are nowhere near close to the physical limits to computation (the theoretical limit set by physics is, to a first approximation, as many orders of magnitude above current infrastructure as current infrastructure is above the babbage engine).

- ^

3B android phones (how many people would bother to opt out if it was part of the standard Terms & Conditions?), recording 3,300 words/day (a conservative estimate) each, would be 10 trillion words.

- ^

It’s even possible they are doing this already, given the precedent OpenAI has set around disregarding data privacy. This would be in keeping with the big tech attitude of breaking the rules and taking the financial/legal hit after (that is usually much less than the profits gained from the transgression). See also: the NSA/Five Eyes.

- ^

100 days of recording at the rate calculated in the above footnote would be 1 quadrillion, ~1000x what GPT-4 was trained on.

- ^

Accessing these may not be as easy as with phone mics, but that doesn’t mean that a determined AGI+human group couldn’t hack their way to significant levels of data harvesting from them via fear or favour.

- ^

Placed in inverted commas because it’s better to think of it as an unconscious digital alien (see next point).

- ^

In Terminator 2, Skynet becomes “self-aware”; I think using the term “situationally aware” instead would’ve added more realism. Maybe it’s time for a remake of T2. (Then again, is there time enough left for that?)

- ^

See also The Alignment Problem from a Deep Learning Perspective, p4.

- ^

Re doom “only” being 50%: “it’s not suicide, it’s a coin flip; heads utopia, tails you’re doomed” seems like quibbling at this point. [Regarding the word you’re in “you’re doomed” in the above—I used that instead of “we’re doomed”, because when CEOs hear “we’re”, they’re probably often thinking “not we’re, you’re. I’ll be alright in my secure compound in NZ”. But they really won’t! Do they think that if the shit hits the fan with this and there are survivors, there won’t be the vast majority of the survivors wanting justice?]

- ^

This is my approximate estimate for P(doom|AGI). And I’ll note here that the remaining 10% for “we’re fine” is nearly all exotic exogenous factors (related to the simulation hypothesis, moral realism being true—valence realism?, consciousness, DMT aliens being real etc), that I really don’t think we can rely on to save us!

- ^

You might be thinking, “but they are trapped in an inadequate equilibrium of racing”. Yes, but remember it’s a suicide race! At some point someone really high up in an AI company needs to turn their steering wheel. They say they are worried, but actions speak louder than words. Take the financial/legal/reputational hit and just quit! Make a big public show of it. Pull us back from the brink.

Update: 3 days after I wrote the above, Geoffrey Hinton, the “Godfather of AI”, has left Google to warn of the dangers of AI. - ^

As an intuition pump, imagine a million John von Neumann-level smart alien hackers each operating at a million times faster than a human, let loose on the internet, and the chaos that that would cause to global infrastructure.

- ^

- ^

The demo in the linked video looks awfully like a precursor to Skynet…

- ^

And there is also potential for astronomical levels of future suffering.

- ^

There was the case of the Manhattan Project scientists worrying about the first nuclear bomb test becoming a self-sustaining chain reaction and burning off the whole of our planet’s atmosphere. But in that case, the richest companies in the world weren’t racing toward exploding a nuke before the scientists could get the calculations done to prove the tech was safe from this failure mode.

- ^

I’m not sure why my signature still hasn’t been processed, but I signed it on the first day!

- ^

- ^

Although maybe this is changing a bit too.

- ^

Via financial incentive and social conditioning.

- ^

And whilst the leaders of big AI companies (OpenAI, Deepmind, Anthropic) have expressed the desire to share the massive future wealth gained from AI in a globally equitable manner. The details of how such a Windfall will be managed have yet to be worked out.

- ^

We press our fire alarms in the UK and Europe (and Australia/NZ). They are being pulled in the US too though! Are they being pressed/pulled on other continents too? Please comment if you know of examples!

- ^

The linked post was ahead of its time. The fact that it was redacted is sad to see now (especially the crossed out parts).

- ^

It’s unreasonable to want empirical evidence for existential risk; you can’t collect data when you’re dead!

- ^

- ^

Including from the most populous countries, most of which have not had any meaningful say over the development of the AI technology that is likely to destroy them (e.g. India, Indonesia, Pakistan, Nigeria, Brazil, Bangladesh, Mexico).

- ^

This makes me think of the film Interstellar. Not sure if there is a useful metaphor to draw from it, but the film depicts an intense struggle for human survival; maybe it’s memeable material (this scene is a classic).

- ^

The linked article, controversially titled We Must Declare Jihad Against A.I. – although note that Jihad in this case is referring to Bulterian Jihad, the concept from the fictional Dune universe—a war on “thinking machines” – contains the quotes: “Policymakers should consider going several steps further than the moratorium idea proposed in the recent open letter, toward a broader indefinite AI ban”, “The prevention of an AGI would be an explicit objective of this regulatory regime.”, and “a basic rule may be: The more generalized, generative, open-ended, and human-like the AI, the less permissible it ought to be—a distinction very much in the spirit of the Butlerian Jihad, which might take the form of a peaceful, pre-emptive reformation, rather than a violent uprising.”

- ^

Yes, even China imprisons people for this.

- ^

It’s funny to think back just 6 months, and how the FTX crisis seemed all enveloping, an existential crisis for EA. This seems positively quaint now. Oh to go back to those days of mere ordinary crisis.

- ^

- ^

- ^

I’m joking and using memes here as a coping mechanism: I’m actually really fucking scared.

- ^

Being proactive about this has certainly helped my mental health, but ymmv.

- ^

The first few suggestions may seem a little trite in light of all the above. But you’ve got to start somewhere. And I know that EAs can be good at scaling projects up rapidly when they are driven to do so.

- ^

I separate out Twitter here as it is arguably the biggest driver of global opinion.

- ^

You may think that this isn’t important, but memes are effective drivers of culture.

- ^

You can reply to OpenAI and Google Deepmind executives’ tweets with this one.

- ^

Someone should also create and populate “Global AGI Moratorium” tags on the EA Forum and LessWrong.

- ^

For those not in the Highly Speculative EA Capital Accumulation Facebook group, the post reads: “There’s no point in trying to become a billionaire by 2030 if the world ends before then.

I’m concerned enough about AGI x-risk now, in this new post-GPT-4+plugins+AutoGPT era, that I intend to liquidate a significant fraction of my net worth over the next year or two, in a desperate attempt to help get a global moratorium on AGI (/Pause) in place. Without this breathing space to allow for alignment to catch up, I think we’re basically done for in the next 2-5 years.

Have been posting a lot on Twitter. For the next year or two I’m an “AI Notkilleveryoneist” above being an EA. I hope we can get back to EA business as usual soon (and I can relax and enjoy my retirement a bit), but this is an unprecedented global emergency that needs resolving. Is anyone else here thinking the same way?”

- ^

Shavit’s paper is great and the ideas in it should be further developed as a matter of priority.

- ^

I wrote this post in ~2 days (although I developed a lot of the points on Twitter over the preceding couple of weeks) and had one round of feedback over ~2 days.

- ^

Sam Altman is being mean to Yudkowsky here, but maybe he has a point. OpenAI and Anthropic were both founded over concern for AI Alignment, but have now, I think, become two of the main drivers of AGI x-risk.

- ^

If your bottleneck for doing this is money, talk to me.

- ^

Maybe I can even get to enjoy my retirement a bit. One can hope.

- ^

To be fair to EA and x-risk, it’s actually more like life saving medical treatments on Malaysia Airlines Flight 370 (as per my meme above).

- ^

Or (re the above footnoted reference), running to the cockpit, grabbing the controls, and steering away from the ocean abyss..

Downvoted because I view some of the suggested strategies as counterproductive. Specifically, I’m afraid of people flailing. I’d be much more comfortable if there was a bolded paragraph saying something like the following:

To give specific examples illustrating this (which may also be good to include and/or edit the post):

I believe tweets like this are much better (and net positive) then the tweet you give as an example. Sharing anything less then the strongest argument can be actively bad to the extent it immunizes people against the actually good reasons to be concerned.

Most forms of civil disobedience seems actively harmful to me. Activating the tribal instincts of more mainstream ML researchers, causing them to hate the alignment community, would be pretty bad in my opinion. Protesting in the streets seems fine, protesting by OpenAI hq does not.

Don’t have time to write more. For more info see this twitter exchange I had with the author, though I could share more thoughts and models my main point is be careful, taking action is fine, and don’t fall into the analysis-paralysis of some rationalists, but don’t make everything worse.

Thanks for writing out your thoughts in some detail here. What I’m trying to say is that things are already really bad. Industry self-regulation has failed. At some point you have to give up on hoping that the fossil fuel industry (AI/ML industry) will do anything more to fix climate change (AGI x-risk) than mere greenwashing (safetywashing). How much worse does it need to get for more people to realise this?

The Alignment community (climate scientists) can keep doing their thing; I’m very much in favour of that. But there is also now an AI Notkilleveryoneism (climate action) movement. We are raising the damn Fire Alarm.

From the post you link:

The United Nations Security Council. Anything less and we’re toast.

And we can talk all we like about the unilateralist’s curse, but I don’t think anything a bunch of activists can do will ever top the formation and corruption-to-profit-seeking of OpenAI and Anthropic (the supposedly high status moves).

I tentatively approve of activism & trying to get govt to step in. I just want it to be directed in ways that aren’t counterproductive. Do you disagree with any of my specific objections to strategies, or the general point that flailing can often be counterproductive? (Note not all activism i included in flailing, flailing, it depends on the type)

The above contains links to a lot of arguments, but it does not develop almost any argument fully.

In lieu of responding to them all, I will address one sentence.

Here’s a claim you make about likely future timelines: “GPT-4 + curious (but ultimately reckless) academics → more efficient AI → next generation foundation model AI (which I’ll call NextAI[10] for short)”

There are two links in “ultimately reckless” go to two papers, which must be to support the claim that researchers are reckless, or the claim that we will quickly get more efficient next level AI, presumably.

One paper uses GPT-4 to do neural architecture search over CIFAR-10, or CIFAR-100, or ImageNet16-120. These are all pretty tiny datasets… which is why people do NAS over them, because otherwise it would be insanely expensive. Even doing this kind of thing with a small LM would be really tough. Furthermore, GPT-4 looks like it’s giving you the same approximate OOM improvements that other NAS techniques do, which isn’t that great. Like I could write more here, but this is basically miles away from being used to help with actual LLMs, let alone recursive self improvement—it’s on toy datasets used for NAS because they are small.

The other uses GPT-4 augmented with planners to produce plans. “Plans” means classical planing problems like “Given a set of piles of blocks on a table, a robot is tasked with rearranging them into a specified target configuration while obeying the laws of physics.” This kind of thing—with definite ontology, non-vague world, etc—has been in AI since the 70s and the ability of GPT-4 to work with a classical planner is basically entirely unrelated to… lengthy, contextually vague problems necessary to take over the world. Like, it isn’t just that “Take over the world” is too vague and onto logically unspecified for this kind of treatment, “Unload my car, don’t break anything” is also too vague and unspecified. In any event, I don’t think it’s remotely worrisome, and in any event extremely unclear how it leads to more efficient NextAI.

Anyhow, neither of these papers lead me to think that researchers are reckless. I’m really unsure how they’re suppose to support that claim, or the claim that we’ll get NextAI quickly, or even what NextAI would be.

Anyhow, if the above sentence is representative, the above looks like a gish gallop—responding at length to many of these points would take 50x the space spent here.

It’s really not intended as a gish gallop, sorry if you are seeing it as such. I feel like I’m really only making 3 arguments:

1. AGI is near

2. Alignment isn’t ready (and therefore P(doom|AGI is high)

3. AGI is dangerous

And then drawing the conclusion from all these that we need a global AGI moratorium asap.

So Gish gallop is not the ideal phrasing, although denotatively that is what I think it is.

A more productive phrasing on my part would be, when arguing it is charitable to only put forth the strongest arguments you have, rather than many questionable arguments.

This helps you persuade other people, if you are right, because they won’t see a weaker argument and think all your arguments are that weak.

This helps you be corrected, if you are wrong, because it’s more likely that someone will be able to respond to one or two arguments that you have identified as strong arguments, and show you where you are wrong—no one’s going to do that with 20 weaker arguments, because who has the time?

Put alternately, it also helps with epistemic legibility. It also shows that you aren’t just piling up a lot of soldiers for your side—it shows that you’ve put in the work to weed out the ones which matter, and are not putting weird demands on your reader’s attention by just putting all those which work.

You have a lot of sub-parts in your argument for 1, 2, 3 above. (Like, in the first section there are ~5 points I think are just wrong or misleading, and regardless of whether they are wrong or not are at least highly disputed). It doesn’t help to have succession of such disputed points—regardless of whether your audience is people you agree with or people you don’t!

The way I see the above post (and it’s accompaniment) is knocking down all the soldiers that I’ve encountered talking to lots of people about this over the last few weeks. I would appreciate it if you could stand them back up (because I’m really trying to not be so doomy, and not getting any satisfactory rebuttals).

I think you need to zoom out a bit and look at the implications of these papers. The danger isn’t in what people are doing now, it’s in what they might be doing in a few months following on from this work. The NAS paper was a proof of concept. What happens when it’s massively scaled up? What happens when efficiency gains translate into further efficiency gains?

Probably nothing, honestly.

Here’s a chart of one of the benchmarks the GPT-NAS paper tests on. They GPT-NAS paper is like.… not off trend? Not even SOTA? Honestly looking at all these results my tenative guess is that the differences are basically noise for most techniques; the state space is tiny such that I doubt any of these really leverage actual regularities in it.

From the Abstract:

They weren’t aiming for SOTA! What happens when they do?

LessWrong:

A post about all the reasons AGI will kill us: No. 1 all time highest karma (827 on 467 votes; +1.77 karma/vote)

A post about containment strategy for AGI: 7th all time highest karma (609 on 308 votes; +1.98 karma/vote)

A post about us all basically being 100% dead from AGI: 52nd all time highest karma (334 on 343 votes; +0.97 karma/vote, a bit more controversial)

Also LessWrong:

A post about actually doing something about containing the threat from AGI and not dying [this one]: downvoted to oblivion (-5 karma within an hour; currently 13 karma on 24 votes; +0.54 karma/vote)

My read: y’all are so allergic to anything considered remotely political (even though this should really not be a mater of polarisation—it’s about survival above all else!) that you’d rather just lie down and be paperclipped than actually do anything to prevent it happening. I’m done.

I don’t want to speak on behalf of others, but I suspect that many people who are downvoting you agree that something should be done. They just don’t like the tone of your post, or the exact actions you’re proposing, or something else specific to this situation.