Speedrun ruiner research idea

Edit Apr 14: To be perfectly clear, this is another cheap thing you can add to your monitoring/control system; this is not a panacea or a deep insight, folks. Just a Good Thing You Can Do™.

Central claim: If you can make a tool to prevent players from glitching games *in the general case*, then it will probably also work pretty well for RL with (non-superintelligent) advanced AI systems.

Alternative title: RL reward+environment autorobustifier

Problem addressed: Every RL thing ever trained has found glitches/edge cases in the reward function or the game/physics sim/etc. and exploited them until the glitches were manually patched.

Months ago I saw a tweet from someone at OpenAI saying, yes, of course this happens with RLHF as well. (I can’t find it. Anyone have it bookmarked?)

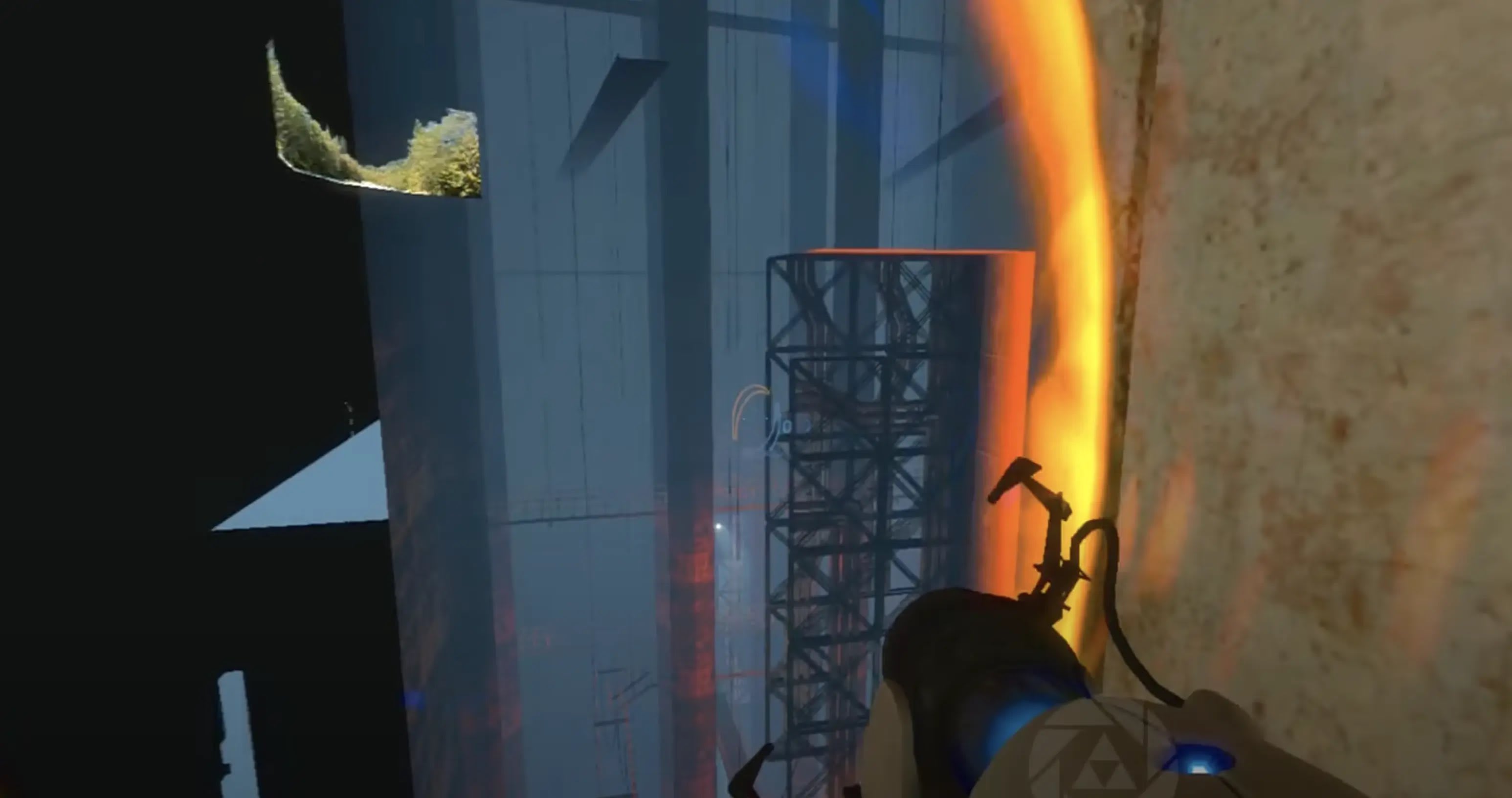

Obviously analogous ‘problem’: Most games get speedrun into oblivion by gamers.

Idea: Make a software system that can automatically detect glitchy behavior in the RAM of **any** game (like a cheat engine in reverse) and thereby ruin the game’s speedrunability.

You can imagine your system gets a score from a human on a given game:

Game is unplayable:

score := -1

Blocks glitch:

score += 10 * [importance of that glitch]

Blocks unusually clever but non-glitchy behavior:

score -= 5 * [in-game benefit of that behavior]

Game is laggy:[1]

score := score * [proportion of frames dropped]

Tool requires non-glitchy runs on a game as training data:

score -= 2 * [human hours required to make non-glitchy runs] / [human hours required to discover the glitch]
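A rough sketch of how the rubric above could be turned into a function, in Python. Everything here is an illustration: the `ToolEvaluation` fields and the `rubric_score` name are hypothetical, and the inputs are assumed to be numbers a human grader supplies. One loud assumption: the rubric as written multiplies by the proportion of frames dropped, which would reward lag, so this sketch multiplies by the proportion of frames *kept* instead.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ToolEvaluation:
    """Human judgments about one anti-glitch tool on one game (hypothetical fields)."""
    unplayable: bool = False
    blocked_glitch_importance: List[float] = field(default_factory=list)
    blocked_fair_play_benefit: List[float] = field(default_factory=list)
    frames_dropped_fraction: float = 0.0   # in [0, 1]
    clean_run_hours: float = 0.0           # human hours spent making non-glitchy runs
    glitch_discovery_hours: float = 1.0    # human hours it took to discover the glitch


def rubric_score(ev: ToolEvaluation) -> float:
    # An unplayable game overrides everything else.
    if ev.unplayable:
        return -1.0
    score = 0.0
    # Reward each blocked glitch by its importance.
    score += sum(10 * imp for imp in ev.blocked_glitch_importance)
    # Penalize blocking clever-but-fair play by its in-game benefit.
    score -= sum(5 * benefit for benefit in ev.blocked_fair_play_benefit)
    # ASSUMPTION: scale by frames kept, not frames dropped (see lead-in).
    score *= 1.0 - ev.frames_dropped_fraction
    # Penalize needing clean runs as training data, relative to discovery effort.
    score -= 2 * ev.clean_run_hours / ev.glitch_discovery_hours
    return score
```

For example, a tool that blocks two glitches (importance 1.0 and 0.5), wrongly blocks one fair trick (benefit 0.2), drops 10% of frames, and needs 2 hours of clean runs for a glitch that took 4 hours to find would be scored with `rubric_score(ToolEvaluation(blocked_glitch_importance=[1.0, 0.5], blocked_fair_play_benefit=[0.2], frames_dropped_fraction=0.1, clean_run_hours=2, glitch_discovery_hours=4))`.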

Further defense of the analogy between general anti-speedrun tool and general RL reward+environment robustifier:

Speedrunners are smart as hell

Both have similar fuzzy boundaries that are hard to formalize:

‘player played game very well’ vs ‘player broke the game and didn’t play it’

is like

‘agent did the task very well’ vs ‘agent broke our sim and did not learn to do what we need it to do’

In other words, you don’t want to punish talented-but-fair players.

Both must run tolerably fast (can’t afford to kill the AI devs’ research iteration speed or increase training costs much)

Both must be ‘cheap enough’ to develop & maintain

Breakdown of analogy: I think such a tool could work well through GPT alphazero 5, but probably not GodAI6

(Also if random reader wants to fund this idea, I don’t have plans for May-July yet.)

metadata = {

"effort": "just thought of this 20 minutes ago",

"seriousness": "total",

"checked if someone already did/said this": false,

"confidence that": {

"idea is worth doing at all": "80%",

"one can successfully build a general anti-speedrun thing": "25%",

"tools/methods would transfer well to modern AI RL training": "50%"

}

}

[1] Note that “laggy” is indeed the correct/useful notion, not e.g. “average CPU utilization increase”, because “lagginess” conveniently bundles key performance issues in both the game-playing and RL-training case: loading time between levels/tasks is OK; more frequent & important actions being slower is very bad; turn-based things can be extremely slow as long as they’re faster than the agent/player; etc.

Are you proposing applying this to something potentially prepotent? Or does this come with corrigibility guarantees? If you applied it to a prepotence, I’m pretty sure this would be an extremely bad idea. The actual human utility function (the rules of the game as intended) supports important glitch-like behavior, where cheap tricks can extract enormous amounts of utility, which means that applying this to general alignment has the potential of foreclosing most value that could have existed.

Example 1: Virtual worlds are a weird out-of-distribution part of the human utility function that allows the AI to “cheat” and create impossibly good experiences by cutting the human’s senses off from the real world and showing them an illusion. As far as I’m concerned, creating non-deceptive virtual worlds (like, very good video games) is correct behavior and the future would be immeasurably devalued if it were disallowed.

Example 2: I am not a hedonist, but I can’t say conclusively that I wouldn’t become one (or turn out to be one) if I had full knowledge of my preferences, the ability to self-modify, and plenty of time and safety to reflect, settle my affairs in the world, set aside my pride, and then wirehead. This is a glitchy-looking behavior that allows the AI to extract a much higher yield of utility from each subject by gradually warping them into a shape where they lose touch with most of what we currently call “values” and one value dominates all of the others. If it is incorrect behavior, then sure, it shouldn’t be allowed to do that. But humans today don’t have the kind of self-reflection required to tell whether it’s incorrect behavior or not, and if it’s correct behavior, forever forbidding it is actually a far more horrifying outcome: in some sense of ‘suffering’, you’d be forever prolonging some amount of suffering. That’s fine if humans tolerate and prefer some amount of suffering, but we aren’t sure of that yet.

I do not propose applying this method to a prepotence.

Cool then.

Are you aware that prepotence is the default for strong optimizers though?

What about mediocre optimizers? Are they not worth fooling with?

You wouldn’t really need reward modelling for narrow optimizers. Weak general real-world optimizers I find difficult to imagine, and I’d expect them to be continuous with strong ones; the projects to make weak ones wouldn’t be easily distinguishable from the projects to make strong ones.

Oh, are you thinking of applying it to, say, simulation training?

The issue with this idea is that it seems pretty much impossible.

What makes you say so? Seems like 25% chance possible to me. You can find where position is stored and watch for sudden changes. Same thing with score & inventory...
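To make “watch for sudden changes” concrete, here is a minimal Python sketch. It assumes you already have a per-frame series of one tracked value (position, score, inventory count). The function name `flag_sudden_changes` and its `threshold` and `min_scale` parameters are made up for illustration, and the median-based rule is just one simple heuristic, not a claim about what would work best.

```python
from statistics import median
from typing import List, Sequence


def flag_sudden_changes(
    values: Sequence[float],
    threshold: float = 8.0,
    min_scale: float = 1.0,
) -> List[int]:
    """Return frame indices whose change from the previous frame is far above typical."""
    # Absolute change between consecutive frames.
    deltas = [abs(b - a) for a, b in zip(values, values[1:])]
    if not deltas:
        return []
    # Typical per-frame movement; floored so a mostly idle player
    # does not make every small step look suspicious.
    scale = max(median(deltas), min_scale)
    # deltas[i] is the change landing on frame i + 1.
    return [i + 1 for i, d in enumerate(deltas) if d > threshold * scale]
```

For instance, `flag_sudden_changes([0, 1, 2, 3, 4, 50, 51, 52])` flags the frame where the value jumps from 4 to 50. A real tool would also need to tell glitches apart from legitimate jumps such as teleporters or level loads, which this sketch does not attempt.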

Oh wait, maybe I misunderstood what you meant by “any game”. I thought you meant a single program that could detect it across all games, but it sounds much more feasible with a program that can detect it in one specific game.

All games. Find where position is stored etc. automatically, I mean. It will certainly have failure cases. It is easy to make a game that breaks it. The question is whether an adversarial agent can easily break it in a regular (i.e. not adversarially chosen) game.
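As a toy illustration of “find where position is stored automatically”, the Python sketch below scans a sequence of RAM snapshots taken during ordinary play and keeps the byte offsets that change often but only in small steps, which is roughly what a position-like value looks like. All names (`position_like_offsets`, `max_step`, `min_change_rate`) are hypothetical, and it assumes single-byte unsigned values with no wraparound; real games store multi-byte integers and floats, so treat this as a sketch of the idea rather than a working scanner.

```python
from typing import List, Sequence


def position_like_offsets(
    snapshots: Sequence[bytes],
    max_step: int = 4,
    min_change_rate: float = 0.5,
) -> List[int]:
    """Return byte offsets that change frequently but smoothly across snapshots."""
    if len(snapshots) < 2:
        return []
    size = min(len(s) for s in snapshots)   # only compare the shared prefix
    transitions = len(snapshots) - 1
    candidates = []
    for off in range(size):
        changes = 0
        smooth = True
        for prev, cur in zip(snapshots, snapshots[1:]):
            step = abs(cur[off] - prev[off])
            if step > max_step:
                # A large step during normal play: not position-like.
                smooth = False
                break
            if step:
                changes += 1
        # Keep offsets that move often enough to be a live game variable.
        if smooth and changes / transitions >= min_change_rate:
            candidates.append(off)
    return candidates
```

With snapshots built as `[bytes([7, i, (i * 97) % 256]) for i in range(6)]`, offset 0 never changes, offset 1 increases by one each frame, and offset 2 jumps around, so only offset 1 is returned. Offsets found this way during fair play are the ones you would then monitor for sudden changes.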

It seems to me that either:

RLHF can’t train a system to approximate human intuition on fuzzy categories. This includes glitches, and this plan doesn’t work.

RLHF can train a system to approximate human intuition on fuzzy categories. This means you don’t need the glitch hunter, just apply RLHF to the system you want to train directly. All the glitch hunter does is make it cheaper.

You may be right. Perhaps the way to view this idea is “yet another fuzzy-boundary RL helper technique” that works in a very different way and so will have different strengths and weaknesses than stuff like RLHF. So if one is doing the “serially apply all cheap tricks that somewhat reduce risk” approach then this can be yet another thing in your chain.