An Effective AI Safety Initiative

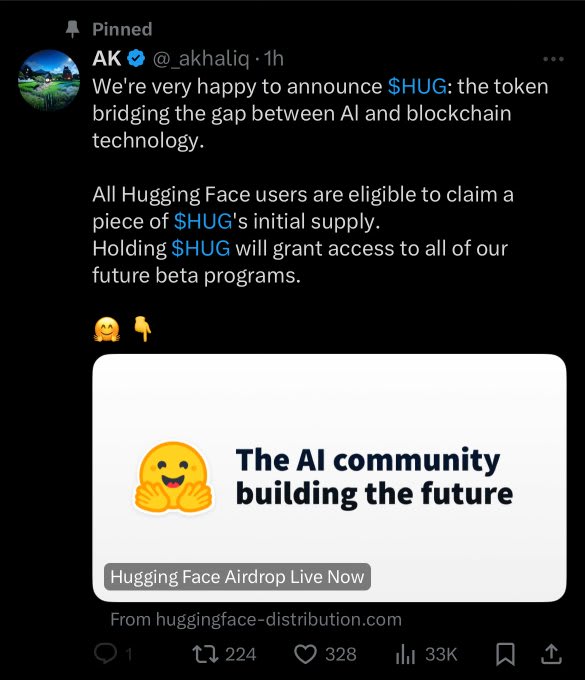

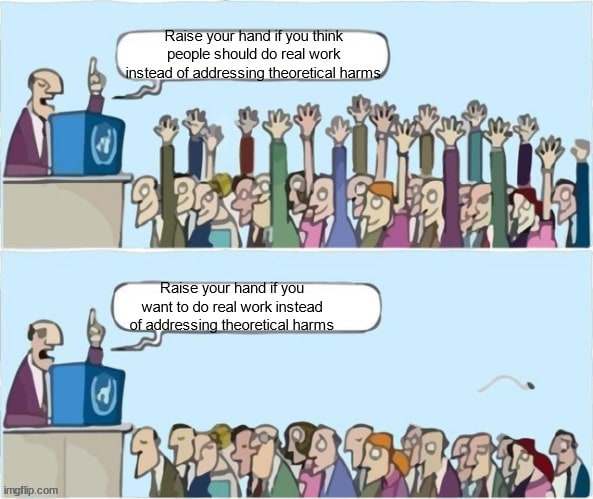

A frequent criticism of the Effective Altruism/AI Safety memeplex is that it is too focused on theoretical harms from AI and not focused enough on real ones (it’s me, I’m the one who frequently makes this criticism).

Consider the recent California AI bill, which has heavy fingerprints of EA on it.

Notice that the bill:

1. Literally does not apply to any existing AI
2. Addresses only theoretical harms (e.g. AI could be used for WMD)
3. Does so by attacking open source models (thereby, in my estimation, actually making us less safe)

What if, instead, we could spend a small amount of money in a way that:

- Addresses real, existing harms caused by AI (or AI-like) actors
- Requires no new laws and takes away no one’s rights
- Virtually everyone would agree is net-beneficial

Sounds like a clear (effective) win, right?

Here it is!

What will the first malevolent AGI look like?

Virtually every realistic “the AI takes over the world” story goes like this:

1. The AI gets access to the internet
2. It hacks a bunch of stuff and starts accumulating resources (money, host computers, AWS GPU credits, etc)
3. It uses those resources to (idk, turn us all into paperclips)

This means that learning how to defend and protect the internet from malicious actors is a fundamental AI safety need.

In a world of slow takeoff where we survive because of multiple warning shots, the way we survive is by noticing the warning shots and increasing the resilience of our society in response to them. There is no fire alarm, so why not start increasing resilience now?

Fortunately (unfortunately?), we live in a world where the first malevolent AGI we encounter will probably look like ChaosGPT, a self-propagating AI Agent programmed to survive while causing as much chaos as possible.

Such an AGI will not be a super-intelligent agent that uses acausal bargaining to talk its way out of a box. It will be barely more intelligent than GPT-4. For all I know, it will literally be llama-3-400B-evil-finetune. Assuming AI scaling trends continue, near-future models will have just enough smarts to pull off common internet scams, steal money, and use that money to rent more GPUs to feed themselves.

How do we counter such threats?

An idea that the US military has been keen on since at least the 1970s is that speed is critical to victory. This concept desperately needs to be applied to cyber security.

Consider a typical hack:

1. The hacker compromises the account of some high-value target
2. The hacker uses this account to direct victims to a webpage that does some combination of:
   - stealing their money
   - installing further malware on their computer
3. Eventually someone notices the account is hacked
4. Authorities step in and take down the hacked message

Notice that it is precisely during the time between step 2 and step 4 that the attacker is gaining money/power/etc. Therefore, the longer it takes to get to step 4, the faster the attacker grows.

Much like the R0 of Covid-19, this ratio of: determines how fast the malevolent actor grows, and if it is low enough, eventually the agent dies out.
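To make the R0 analogy concrete, here is a minimal sketch under stated assumptions (an illustration, not necessarily the exact ratio meant above). Assume each hacked account causes new compromises at a constant rate until it is taken down. The names `spread_rate` and `hours_to_takedown`, and all the numbers, are hypothetical.

```python
def reproduction_number(spread_rate: float, hours_to_takedown: float) -> float:
    """Expected new compromises caused by one hacked account before takedown.

    Assumes a constant spread rate (new compromises per hour); this is a
    simplifying assumption, not a measured quantity.
    """
    return spread_rate * hours_to_takedown


def expected_compromised(r: float, generations: int, initial: float = 1.0) -> list[float]:
    """Expected number of newly compromised accounts in each generation."""
    counts = [initial]
    for _ in range(generations):
        counts.append(counts[-1] * r)
    return counts


# Hypothetical numbers: 0.05 new compromises per hour per hacked account.
slow = reproduction_number(spread_rate=0.05, hours_to_takedown=48)  # above 1: grows
fast = reproduction_number(spread_rate=0.05, hours_to_takedown=8)   # below 1: dies out

print(expected_compromised(slow, 5))
print(expected_compromised(fast, 5))
```

In this toy model the only lever a rapid-response service controls is `hours_to_takedown`: cutting it far enough pushes the reproduction number below 1, at which point each generation of compromises is smaller than the last.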

But everyone knows hacking is bad, how is this an EA cause?

Our government is very bad at information technology. EAs, on the other hand, tend to be extremely technically savvy.

Another lesson learned from Covid-19 was that something as simple as setting up a phone number people can call for information is utterly beyond the ability of the US government. On the other hand, EA-adjacent people have this skill in droves.

So here is my proposal:

Set up a rapid-response cyber-security “911”. This will be a phone number/website/Twitter account/everything-else endpoint where people can report that they suspect an account has been hacked. Then implement a system to rapidly validate that information and pass it on to the relevant authorities as quickly as possible.

The goal (and everything else about the design will work towards this) will be to minimize the amount of time from step 1 in our model attack to step 4.
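As a rough sketch of what "validate and pass on" could look like, the snippet below collects reports per account and escalates once enough distinct reporters agree. Everything here is an assumption made for illustration: the `ReportDesk` and `Incident` names, the "two independent reporters" validation rule, and the idea that escalation is a single timestamp. A real service would need a stronger validation step and an actual channel to the platform or authorities.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Incident:
    account: str
    first_reported: datetime
    reporters: set[str] = field(default_factory=set)
    escalated_at: Optional[datetime] = None


class ReportDesk:
    """Collects reports and escalates once enough distinct reporters agree."""

    def __init__(self, reports_needed: int = 2) -> None:
        self.reports_needed = reports_needed  # hypothetical validation rule
        self.incidents: dict[str, Incident] = {}

    def report(self, account: str, reporter: str, when: datetime) -> bool:
        """Record a report; return True if this report triggers escalation."""
        incident = self.incidents.setdefault(account, Incident(account, when))
        incident.reporters.add(reporter)
        if incident.escalated_at is None and len(incident.reporters) >= self.reports_needed:
            # A real system would notify the platform or authorities here.
            incident.escalated_at = when
            return True
        return False

    def time_to_escalation(self, account: str) -> Optional[timedelta]:
        """Delay between the first report and escalation, if escalated."""
        incident = self.incidents[account]
        if incident.escalated_at is None:
            return None
        return incident.escalated_at - incident.first_reported


desk = ReportDesk(reports_needed=2)
t0 = datetime(2024, 6, 1, 12, 0)
desk.report("@example_account", "alice", t0)
desk.report("@example_account", "bob", t0 + timedelta(minutes=7))
print(desk.time_to_escalation("@example_account"))
```

Note that `time_to_escalation` only covers the slice of the attack timeline the service can observe (first report to escalation). It is a proxy for the full interval the design is meant to shrink, not a measurement of it.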

How much would this cost?

Not much.

If you read this far, there is a strong probability you could build an MVP website in an afternoon. Vaccinate CA appeared to cost “millions of dollars”, but this is pennies in the EA world, especially with the entire future of humanity at stake.

Would it really be net beneficial?

If the only outcome was that 1000 additional grandmas across the country called “hacker 911” and were told “no, that’s not your grandson in jail, it’s an AI”, instead of wiring $10k to a bad actor, it would be net beneficial. We’re talking billions of dollars, so even a few percent effectiveness would be a net win for society.
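The arithmetic behind that claim is simple enough to check directly. In the sketch below, the 1000 callers and $10k per scam come from the paragraph above; the `total_losses` and `effectiveness` values are placeholders standing in for "billions" and "a few percent", not sourced statistics.

```python
def losses_prevented(victims_helped: int, loss_per_victim: int) -> int:
    """Dollars not sent to scammers because victims checked first."""
    return victims_helped * loss_per_victim


# Figures from the text: 1000 callers who each avoid wiring $10k.
grandma_case = losses_prevented(victims_helped=1_000, loss_per_victim=10_000)
print(f"${grandma_case:,}")

# Placeholder figures for "billions" and "a few percent" (assumptions).
total_losses = 1_000_000_000
effectiveness = 0.02
print(f"${total_losses * effectiveness:,.0f}")
```

Under these assumptions, either scenario prevents losses in the tens of millions of dollars, the same order of magnitude as (or larger than) the "millions of dollars" the post cites for Vaccinate CA.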

Seriously, the wins here are massive.

None of this matters if the Super-intelligent AGI hacks directly into people’s brains using Diamondoid Bacteria

Maybe not.

But anything that increases the ability of our society to survive by even a little bit helps.

Are there other things like this that we should be doing?

Probably, yes.

Feel free to suggest more in the comments.

Why are you writing a blog post instead of just doing this?

That’s the whole point of the bill! It’s not trying to address present harms, it’s trying to address future harms, which are the important ones. Suggesting that you instead address present harms is like responding to a bill that is trying to price in environmental externalities by saying “but wouldn’t it be better if you instead spent more money on education?”, which like, IDK, you can think education is more important than climate change, but your suggestion has basically nothing to do with the aims of the original bill.

I don’t want to address “real existing harm by existing actors”, I want to prevent future AI systems from killing literally everyone.

A real AI system that kills literally everyone will do so by gaining power/resources over a period of time. Most likely it will do so the same way existing bad-agents accumulate power and resources.

Unless you’re explicitly committing to the Diamondoid bacteria thing, stopping hacking is stopping AI from taking over the world.

I am not sure I understand this comment. Are you saying you think there are autonomous AI systems that right now are trying to accumulate power? And that present regulation should be optimized to stop those?

I don’t think I know of a single story of this type? Do you have an example? It’s a thing I’ve frequently heard argued against (the AI doesn’t need to first make lots of money, it will probably be given lots of control anyways, or alternatively it can just directly skip to the “kill all the humans” step, it’s not really clear how the money helps that much), and it’s not like a ridiculous scenario, but saying “virtually every realistic takeover story goes like this” seems very false.

For example, Gwern’s “It looks like you are trying to take over the world” has this explicit section:

Which explicitly addresses how it doesn’t seem worth it for the AI to make money.

Point taken. “$$$” was not the correct framing (if we’re specifically talking about the Gwern story). I will edit to say “it accumulates ‘resources’”.

The Gwern story has faster takeoff than I would expect (especially if we’re talking a ~GPT4.5 autoGPT agent), but the focus on money vs just hacking stuff is not the point of my essay.

Accumulating power and resources via mechanisms such as (but not limited to) hacking seems pretty central to me.

Agree that if you include things that are not money, it starts being relatively central. I do think constraining it to money gets rid of a lot of the scenarios.

Literally does not apply to any existing AI

Does so by attacking open source models

1 contradicts 3.

...but there are a number of EAs working on cybersecurity in the context of AI risks, so one premise of the argument here is off.

And a rapid response site for the public to report cybersecurity issues and account hacking generally would do nothing to address the problems that face the groups that most need to secure their systems, and wouldn’t even solve the narrower problem of reducing those hacks, so this seems like the wrong approach even given the assumptions you suggest.