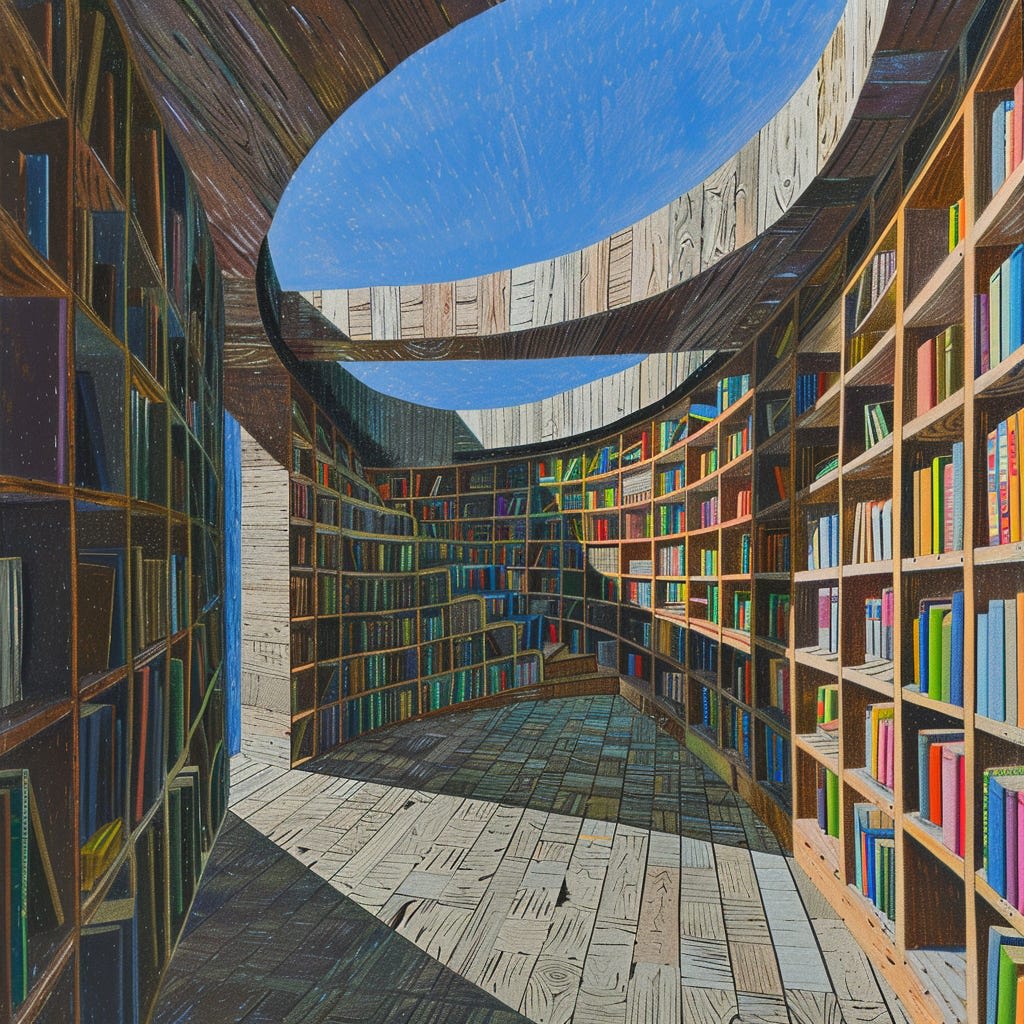

Midjourney, “infinite library”

I’ve had post-election thoughts percolating, and the sense that I wanted to synthesize something about this moment, but politics per se is not really my beat. This is about as close as I want to come to the topic, and it’s a sidelong thing, but I think the time is right.

It’s time to start thinking again about neutrality.

Neutral institutions, neutral information sources. Things that both seem and are impartial, balanced, incorruptible, universal, legitimate, trustworthy, canonical, foundational.[1]

We don’t have them. Clearly.

We live in a pluralistic and divided world. Everybody’s got different “reality-tunnels.” Attempts to impose one worldview on everyone fail.

To some extent this is healthy and inevitable; we are all different, we do disagree, and it’s vain to hope that “everyone can get on the same page” like some kind of hive-mind.

On the other hand, lots of things aren’t great: crumbling institutions, culture war eating everything, opportunistic SEO slop taking over the internet, noise overwhelming signal.

There is a pervasive sense of an old system dying, and a sense of potential that a new one might be born. Venkatesh Rao has somewhat inspired me here with his Mandala and Machine essay, though I’m taking a slightly different lens on it.

These days, savvy people tend to accept that the “world” is a roiling chaotic ball of stormclouds, impossible to get a handle on; that all information is intrinsically untrustworthy; that all environments outside a trusted inner circle are adversarial.

This is not a universal human condition.

And it may not be our permanent condition. The future may hold something more like a “foundation” or a “framework” or a “system of the world” that people actually trust and consider legitimate.

I don’t mean that in a utopian, impossible way; I mean it in the limited, realistic, actually-existing way that trust and legitimacy have been present in (recent and distant) past cultures.

Not “Normality”

To a large extent, we still rely on a deep, though crumbling, reserve of unexamined “normality.” Apolitical, basic, running on 90’s-sitcom scripts; you work at your job, then you come home to your friends or family and relax. “You know, normal.” The thing that seems to have broken in the last decade of Weirding.

A lot of people, of course, are nostalgic for normality. Noah Smith is eager to put the 2010s behind us, for instance.

I can see why, but really, unexamined normality is inadequate. It’s what it feels like from the inside to be Keynes’ “slaves of some defunct economist.”

“Why have people picked up such-and-such extreme, radical view all of a sudden?” Well, it’s the Internet and social media, of course; but more specifically, it’s because that view would always have been attractive to some fraction of people, but in decades past they’d never have heard of it.

A lot of people, for instance, only figured out as adults that Communism had been an influential force in U.S. culture through the 20th century. This was basic background knowledge for me, growing up in an academic family, but for other people it seems to be an electric hidden truth.

Of course some percent of people who read Osama bin Laden’s manifesto for the first time are going to find it compelling! Most people had literally never heard a terrorist’s point of view before. Almost anything he could have written would be more persuasive than “he has no point of view, he’s just evil.”

Unexamined normality’s main protection is silence; it persists only because most people have never heard of any alternative to the way things are. Denser communication networks mean that more people will encounter questions like “but why are things this way?” And, because normality runs on silence, it doesn’t have good explicit answers.

To some extent, weirdness is just the inevitable result of the operation of Mind. Everything unexamined, sooner or later, gets questioned, disputed, messed with. To hope to avoid this is to seek to impose stupidity on the world.

But of course, to the extent that normality was functional, people questioning “the way we’ve always done it” makes things dysfunctional. Things once trusted implicitly can break down, gradually and then suddenly.

What is Neutrality Anyway?

A process is neutral towards things when it treats them the same.

To be neutral on a controversial topic is to take no sides on it. There are many ways this can be done:

- consider the topic out of bounds for a space: “no politics or religion at the dinner table”
- allow anyone to express their opinion, but take no institutional stand; people may take sides, but the platform/newspaper/university/government that defines the space around them does not.
- describe the controversy without taking a side on it
- potentially take a stand on the controversy, but only when a conclusion emerges from an impartial process that a priori could have come out either way, such as:
  - randomization
  - judicial proceedings
  - scientific experiment
  - majority vote, prediction market, or other opinion-aggregation mechanisms
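To make “could have come out either way” concrete, here is a minimal Python sketch of two of these procedures. The function names (`majority_vote`, `coin_flip`, `relabel`) and the example ballots are hypothetical, invented purely for illustration. The check at the end is one narrow way to formalize neutrality: if you swap the labels on the options, the outcome swaps with them, so the procedure itself has no favorite.

```python
import random
from collections import Counter


def majority_vote(ballots):
    """Return the option with the most ballots, or None on a tie or empty input."""
    counts = Counter(ballots).most_common()
    if not counts:
        return None
    if len(counts) > 1 and counts[0][1] == counts[1][1]:
        return None  # a tie: the procedure declines to pick a side
    return counts[0][0]


def coin_flip(option_a, option_b, rng):
    """Pick one of two options with equal probability (randomization)."""
    return option_a if rng.random() < 0.5 else option_b


def relabel(ballots, mapping):
    """Rename every option on every ballot according to `mapping`."""
    return [mapping[b] for b in ballots]


# Hypothetical ballots for illustration only.
ballots = ["alice", "bob", "alice", "alice", "bob"]
swap = {"alice": "bob", "bob": "alice"}

winner = majority_vote(ballots)
swapped_winner = majority_vote(relabel(ballots, swap))

# Neutrality check: swapping the labels swaps the outcome,
# so the rule depends only on the ballots, not on who the options are.
assert swapped_winner == swap[winner]

# Randomization is neutral in a different way: either side can win.
print(coin_flip("alice", "bob", random.Random()))
```

Note that this only captures neutrality between the options on the ballot. It says nothing about who gets to vote or which options are listed in the first place, which is where most real disputes about a procedure tend to live.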

The practical reason to seek neutrality is to allow cooperation between people who don’t agree.

For instance, the Dutch Republic, in the late 16th century, was one of the first polities in Europe to enshrine religious freedom into law rather than establishing a state church. Why? Because the Netherlands were a patchwork of counties, divided between Catholics, Calvinists, Arminians, and assorted “spiritualist” sects, trying to unite and win independence from Hapsburg Spain. It was not practicable for one church to win outright and force the others into submission; their only hope was compromise.

Invariably, real-world neutrality is neutral between some things, not all things. The relevant range of things to be neutral about is determined by:

- whom do you want to cooperate with? for what purpose?
- what range of opinions and worldviews could they and you hold?
- where do differences in views threaten to break down cooperation?
- what are the minimal conditions for cooperation to hold?

We don’t care about being “neutral” between literally all strings of characters, or between Earthlings and hypothetical extraterrestrials. We care about neutrality between actually-existing, or plausibly-potential, crucial differences in point of view between potential allies.

Neutrality-producing procedures can expand options for cooperation. Perhaps Alice and Bob can’t cooperate if there’s a risk their disagreement will blow up unexpectedly, but they can cooperate if they can agree to put it aside or submit to a neutral adjudication procedure. The procedure is a “box” they can safely put their conflict in.

Seen from that point of view, the most important property of the “box” is that Alice and Bob have to be willing to put their conflict in it. If either suspects that the “box” is rigged to give the other one the upper hand, it won’t work. The perception of neutrality is the critical element. But in the long run, you earn trust by being worthy of it.

“Neutrality is Impossible” is Technically True But Misses The Point

I once saw a computer science professor respond to the allegation that “neutrality is impossible” by asking “ok, how is my algorithms and data structures class non-neutral?”

So, ok, imagine a standard undergraduate computer science class on algorithms and data structures. It covers the usual material; none of the ideas are controversial within computer science; political discussion never comes up in the classroom because it’s irrelevant to the course.

But yeah, actually, it’s not perfectly neutral.

There’s a technical sense that nobody really cares about, where it’s “non-neutral” to make utterances in a language instead of random strings of sounds, or to use concepts that seem “natural” to humans instead of weird philosophers’ constructs like bleen and grue.

More relevantly, it’s possible for actual people to have objections to things about our “neutral” CS class.

Some people object to the structure of universities and courses themselves; grading, lectures, problem sets, and tests; conflating purposes like credentialing, coming-of-age experiences, vocational training, and liberal-arts education into single institutions called “colleges”; etc.

Some people object to the choices of what goes into a “standard” CS curriculum. Are these the right things to prioritize? Are these concepts valid? Sure, by stipulation nothing being taught is controversial within computer science, but if you ask a mathematician you might get some gripes. Ask a philosopher? A religious mystic? They might say the whole business is rotten and deluded.

Some people object to “apolitical” spaces in themselves. To declare some topics to be “politics” and thus temporarily off limits is to implicitly believe something about them: namely, that they can be put aside for the space of a class, that it is not so urgently necessary to disrupt the status quo that things can’t be left locally “apolitical” while something else gets done.

Even our hypothetical bog-standard CS class takes a stand on some things — it “believes” that certain things are worth teaching, in a certain way. And it’s not just conceivably but realistically possible for there to be disagreement about that!

When we talk about neutrality we have to talk about neutral relative to what and neutral in what sense.

The class is neutral on a wide range of controversial topics, in the sense that it doesn’t engage with them at all. This means that a pretty wide range of people can take the class and learn algorithms and data structures. The range isn’t literally infinite — non-English-speakers, people not enrolled in the college, maybe deaf students if there’s no accommodation, etc, will not be able to take the class.

The question isn’t “is it neutral or not”, the question is, “is the type and degree of neutrality doing the job we want it to do?” And then it’s possible to get into the real disagreements, about what different people are trying to accomplish in the first place with the “neutrality”.

Systems of the World

A system-of-the-world is the dual of neutrality; it’s the stuff that a space is not neutral about. The stuff that’s considered foundational, basic, “just true”, common-knowledge. The framework on which you hang a variety of possible options.

The framework, the system, the “container” of the space, is deliberately neutral between various options, but it cannot be neutral with regards to itself. There has to be a “bottom” somewhere.

Intellectual worldviews “bottom out” in philosophical axioms or concepts. They seem obvious, they may rarely be discussed, but you would have to stop doing your day-to-day work if you truly rejected the foundations on which it rests.[2]

The literal “world” “view” of viewing Planet Earth as a globe, and all points on the earth as locations on a sphere or its map projections, is itself a framework or system. It is neutral between points on Earth — the globe is global, it picks no sides between countries or continents.[3] But it’s still a particular choice. It shows the world as if from a spaceship[4] outside, rather than from the point of view of a creature walking around on it.

I’ve been rereading Neal Stephenson’s Baroque Cycle lately, which is about the origins of the broader “system of the world” which includes the Newtonian/Leibnizian views of mathematics and physics, the emergence of political liberalism, the Bank of England and the first financial institutions, the earliest days of the Industrial Revolution, etc. Modernity, in other words.

Modernity is a system that aims at a sort of universality and neutrality. But it isn’t the only system that has had such aims. And Modernity absolutely does take certain stands, with which other worldviews disagree.

A “system of the world” is really not the same thing as unexamined normality.

Yes, when living within a system-of-the-world, people trust that system. They rely on it, believe in it, not ironically or provisionally but for real. They treat it as common knowledge that can be assumed in communication. They see the system as true and real, or at least validly reflective of reality, not as a mere hypothesis or lens.

And, yes, people’s faith in systems-of-the-world can shatter when they first learn about something that doesn’t fit.

But strong systems-of-the-world are articulable. They can defend themselves. They can reflect on themselves. They can (and should) shatter in response to incompatible evidence, but they don’t sputter and shrug when a child first asks “why”.

Not every point of view is even trying to be a system-of-the-world. It’s possible to be fundamentally particularist, speaking only “for” yourself, or your community, or a particular priority. Not even aspiring to any kind of universalism or neutrality.

Remember, neutrality is a thing we do sometimes, when we need to. It might be better to speak of “impartializing tactics”, rather than a static Nirvana-like state of “neutrality”. We use “impartializing tactics” in order to broaden our reach: cooperation between people, and also generalization across contexts and coordination across time. In using such a tactic, an entity decides not to care about the object-level stuff it’s being “impartial” about. It “withdraws” to be above the fray in one domain, to gain greater scope and range elsewhere.[5]

Some entities (people, institutions, cultural viewpoints, etc) don’t choose to withdraw or “rise above” or use these “impartializing tactics” very much at all; they aren’t even aiming to be systems of the world.

We can tell these apart, the same way we can tell apart universalizing religions (like Christianity and Islam, which hope that one day everyone will accept their truth) from non-universalizing religions (like Hinduism; from what I’m told it’s impossible to “convert to Hinduism.”)

“Red tribe” and “blue tribe” memeplexes are non-universalizing. They don’t actually want to be fully general and create a system that “everyone” can live with; they want to win, sure, but not to “go meta” or “transcend-and-include.”[6]

Let’s Talk About Online

Right now, in the parts of the world I can see, I think our system-of-the-world is extremely weak. There are very few baseline “common-sense” assumptions that can be treated as common knowledge even among educated people meeting on friendly terms. What we learned in school? What we saw on TV? Common life experiences? There’s just a lot less of that than there once was.

The literal UI affordances of software apps are, I suspect, an embarrassingly large amount of our current “system-of-the-world”, and while I generally want to stick up for software and the internet and “tech” against its enemies, the tech industry was certainly never prepared for the awesome responsibility of becoming The World!

I think it’s ultimately going to be necessary to build a new system to replace the old.

In a narrow sense that looks like an information system. What replaces Google, Wikipedia, blogs, and social media, in the way that those (partially) replaced traditional media, libraries, schools, etc?

In a deeper sense, our present day’s conflicting viewpoints “live in” a World defined by the tools and platforms that host them.

There are also social, political, economic, and natural processes in the physical world; we don’t live in cyberspace, we need to eat, and physical-world factors and forces constrain us; but there’s no shared conceptual framework, no “World”, that encompasses all of them. There’s just Reality, which can bite you. The closest thing we’ve got to a universal conceptual World is your computer screen, I’m sorry to say.

And it just isn’t prepared to do the job of being, y’know, good.

In the sense that a library staffed by idealistic librarians is trying to be good, trying to not take sides and serve all people without preaching or bossing or censoring, but is also sort of trying to be a role model for children and a good starting point for anyone’s education and a window into the “higher” things humanity is capable of.

The “ideal library” is not a parochial or dogmatic ideal. It’s quite neutral and understated. As a library patron, you are in the driver’s seat, using the library according to its affordances. The library doesn’t really have a loud “voice” of its own, it isn’t “telling” you anything — it’s primarily the books that tell you things. But the library is quietly setting a frame, setting a default, making choices.

So is every institutional container.

And most institutions and information-technology frameworks are not really ambitious enough to be setting the “frame” or “context” for the whole World.

They make openly parochial/biased decisions to choose sides on issues that are obviously still active controversies.

Or, they use “impartializing tactics” chosen in the early-Web-2.0 days when people were more naive and utopian, like “allow everyone to trivially make a user account, give all user accounts initially identical affordances, prioritize user-upvoted content.”

These techniques are at least attempts at neutral universality, and they have real strengths — we don’t want to throw the baby out with the bathwater — but they weren’t designed to be ultra-robust to exploitation, or to make serious attempts to assess properties like truth, accuracy, coherence, usefulness, justice.

“But an algorithm can’t possibly assess those properties! That’s naive High Modernist wishful thinking!”

“Well, have you tried lately? Are you sure nothing we’re capable of now — with LLMs, zero-knowledge proofs, formal verification, prediction markets, and the like — can make a better stab at these supposedly-subjective virtues than “one guy’s opinion” or “a committee’s report” or “upvotes”?”

The alternative viewpoint is “there is no substitute for good human judgment; we don’t need systems, we need the right people to talk amongst themselves and make good decisions.”

To that, I say, first of all, that the “right” people to handle issues around information/truth, trust/legitimacy, and dispute resolution are more likely to congregate around attempts to build neutral, technical, robustly unexploitable systems. The ideal archetype of “good human judgment” is a judge…and judges are learned in law, which is a system. (Or protocol, if you prefer.)

Second of all, it is not enough for the “right people to talk amongst themselves”. There are some very brilliant people who only reveal their insights in private group chats and face-to-face relationships. They don’t all find each other. And the scale of their ambitions is limited by the scale of recruitment and management that this “personal judgment” method can manage. And I shouldn’t have to explain why “just put the right people in charge of everything” is unstable.[7]

But sure, I generally do concede that a lot of human judgment is irreducible. It’s just not everything, and human judgment can be greatly extended by protocols. There may be no technological substitute for good people, but there are certainly complements.

I may write some more specific ideas for how this could shape up in a later post, but really I’m in the earliest stages of speculation and other people know much more about the object level. What I want to encourage, and seek out, is a healthy vainglory. You actually can contemplate creating a new (cognitive) foundation for the world, something that vast numbers of very different people will actually want to count on. It’s been done before. It doesn’t require anything literally impossible. It’s not wilder than stuff respectable people discuss freely like “building AGI” or “creating a new and better culture”.

In fact, what makes it seem crazier is the concreteness; it’s a happily-ever-after endgame (even though it doesn’t need to be anywhere near literally eternal or perfect) that’s supposed to be made of mundane moving parts like procedures, UI elements, and algorithms — ideas that never seem that great when specifics are proposed. But I think the possibility of “dumb proposed ideas that won’t work” is a strength, not a weakness; the alternative is to speak concretely only about relatively narrow things, and vaguely about Utopia, and leave the vulnerable space in between safely unspoken (and thus never traversed.)

So yeah. Broken, crooked ladders towards Utopia are a good thing, actually. Please, make more of them!

[1]

[2] Yes, yes, David Chapman thinks this is false, that there are no valid axioms or foundations anywhere and we can still do our “jobs” while recognizing that all frameworks are provisional and groundless. I have, in fact, thought about this, and I’ll get to that in a minute.

[3] well, except via the convention of putting the North Pole on top; “Why are we changing maps?”

[4] or angel; in Paradise Lost there’s a surprising amount of astronomy, and a lot of attention lavished on what Satan sees as he approaches Earth from the outside.

[5] Venkat Rao thinks of “mandalas” as caring about people and “machines” as driven by knowing, not caring. I think this is a screwy dualism; knowing and caring are inextricably linked. The “thinking vs. feeling” dichotomy has always been fake. I expect this is because he is personally a “thinker” whose feelings aren’t super strong, so it seems natural to him to divide the world into “thinkers” and “feelers”, whereas I can never decide which kind I am. (Mercury/Moon conjunct, lol.)

But there is a real thing I see about “distance” and “withdrawal” when you go up a meta level. The more meta you go, the less involved with the object level you can be; you can “touch” many more things, but more lightly and vaguely. “Caring” is conserved.

[6] Ken Wilber’s phrase.

[7] I shouldn’t, but I do. #1: Not everybody agrees on who “the right people” are; if you tell me you’ll just put “the right people” in charge, I might not trust you. #2: The “right people” may not continue to make the “right” decisions when given more power or resources. #3: What about succession? Can the “right people” choose other “right people” reliably? If they could, wouldn’t they be doing it already? The world doesn’t look like wisdom and knowledge are stably being transmitted within a network of elite dynasties.

Agree 100% with all of this.

One thing comes to mind that I think is badly underestimated by people who argue that “everything is political” and that neutrality is a ploy to sneak in your evil ideas: the point of impartiality as you describe it is to keep things simpler. Maybe a God with an infinite mind could keep in it all the issues, all the complexities, all the nuances simultaneously, and continuously figure out the optimal path. But we can’t. We come up with simple rules like “if you’re a doctor, you have a duty to cure anyone, not pick and choose” because they make things more straightforward and decouple domains. Doctors cure people. If you do crimes, there’s a system dedicated to punishing you. But a doctor’s job is different, and the knowledge they need to do it has nothing to do with your rap sheet.

The frenzy to couple everything into a single tangle of complexity is driven by the misunderstanding that complacency is the only reason why your ideology is not the winning one, and that if only everyone was forced to think about it all of the time, they’d end up agreeing with it. But in reality, decoupling is necessary mostly because it allows the world to be cognitively accessible rather than driving us into either perpetual decision paralysis or perpetual paranoia (or worse, both). Destroying that doesn’t give anyone victory, we just end up all worse off.

In some cases, yes, but this is only one factor of many. Others include:

Our brains are often drawn to narratives, which are complex and interwoven. Hence the tendency to bundle up complex logical interdependencies into a narrative.

Our social structures are guided/constrained by our physical nature and technology. For in-person gatherings, bundling of ideas is often a dominant strategy.

For example, imagine a highly unusual congregation: a large unified gathering of monotheistic worshippers with considerable internal diversity. Rather than “one track” consisting of shared ideology, they subdivide their readings and rituals into many subgroups. Why don’t we see much of this (if any) in the real world? Because ideological bundling often pairs well with particular ways of gathering.

P.S. I personally welcome gathering styles that promote both community and rationality (spanning a diversity of experiences and values).

I don’t think this is quite the same thing. Most people actually don’t want to have to apply moral thought to every single aspect of their lives; it’s exhausting. The ones who are willing to, and try to push this mindset on others, are often singularly focused. Yes, bundling people and ideas in broad clusters is itself a common simplification we gravitate towards as a way to understand the world, but that does not prevent people from still being perfectly willing to accept some activities as fundamentally non-political.

I am not following the context of the comment above. Help me understand the connection? The main purpose of my comment above was to disagree with this sentence two levels up:

… in particular, I don’t think it captures the dominant driver of “coupling” or “bundling”.

Does the comment one level up above disagree with my claims? I’m not following the connection.

Related: https://www.lesswrong.com/posts/vcuBJgfSCvyPmqG7a/list-of-collective-intelligence-projects

I had never thought about approaching this topic from the abstract, but I’m judging from the karma that this is actually what people want, rather than existing projects.

I’m surprised! I thought people were overall uninterested in this topic, but it seems more like the problem itself hadn’t been stated to start with.

Speaking only for myself, I can agree with the abstract approach (therefore: upvote), but I am not familiar with any of the existing projects mentioned in the article (therefore: no vote; because I have no idea how useful the projects actually are, and thus how useful the list of them is).

Informative feedback! Though, I’m sorry if it wasn’t clear, I’m not talking about this list. The post I linked is more like internal documentation for people working in this space, and I thought the OP and similarly engaged people could benefit from knowing about it; I don’t think it’s “underrated” in any way. (I’m still learning something from your comment, though, so thanks!)

What I meant was that I noticed that posts that present the projects in detail (e.g. Announcing the Double Crux Bot) tend to generate less interest than this one, and it’s a meaningful update for me. I think I didn’t even realize a post like this was “missing”.

Saying the (hopefully) obvious, just to avoid potential misunderstanding: There is absolutely nothing wrong with writing something for a smaller group of people (“people working in this space”), but naturally such articles get less karma, because the number of people interested in the topic is smaller.

Karma is not a precise tool to measure the quality of content. If there were more than a handful of votes, the direction (positive or negative) usually means something, but the magnitude is more about how many people felt that the article was written for them (therefore highest karma goes to well written topics aimed at the general audience).

My suggestion is to mostly ignore these things. Positive karma is good, but bigger karma is not necessarily better.

My intuition is to get less excited by single projects (a Double Crux bot) until someone has brought them all together & created momentum behind some kind of “big” agglomeration of people + resources in the “neutrality tools” space.

I didn’t know about all the existing projects and I appreciate the resource! Concrete >> vague in my book, I just didn’t actually know much about concrete examples.

I think much of the difficulty comes from the fact that past systems-of-the-world, the unexamined normalcies that functioned only in silence, were de facto authored by evolution, so they were haphazard patchworks that worked, but without object-level understanding, which makes them particularly tricky to replace.

Take ancient fermentation rituals. They very well may break down when a child asks “why do we have to bury the fish?” or “why bury it this long?” or “why do we have to let it sit for 4 months now?”

The elder doesn’t know the answer. All he knows is that, if you don’t follow these steps, people who eat the fish get sick and die.

Going from that system, to the better system involving chemistry and the germ theory of disease, is a massive step, and unfortunately, there’s a large gap between realizing your elder has no idea what’s going on, and figuring out the chemical process behind fermentation.

We’re suffering in this gap right now, unwinding many similar unexamined normalcies that were authored by cultural evolution, which we don’t yet have comprehensive models to replace. Marriage and monogamy are a good example. After birth control, that system started quickly collapsing when the first child asked “why” and no adults could give a good answer, yet we still don’t have anything close to a unified theory of human mating, relationships, and child-rearing that’s better.

The tough part of building new neutral common sense is that, in so many cases right now, we don’t actually know the right answer, all we know is that the old cultural wisdom is at best incomplete, and at worst totally anachronistic.

Social media was essentially like 3 billion kids asking “why” all at once, about a mostly-still-patchwork system mostly authored by evolution, so it’s no real surprise it fell apart. Despite those whys being justified, replacing something evolution has created with a gears level comprehensive model is really, really hard.

Worthy task, of course, but I think fundamentally more complex than the manner by which the original systems were created.

We even seem to have a collective taboo against developing such theory, or even making relatively obvious observations.

Agreed, and we have well-meaning conservatives like Ben Shapiro taking marriage and monogamy as almost an unfalsifiable good, and then using it as a starting point for their entire political philosophy. Maybe he’s right, but I wish realistic post-birth-control norms were actually part of the Overton window.

The ingroup library is a method for building realistic, sustainable neutral spaces that I haven’t seen come up. Ingroup here can be a family, or another community like a knitting space, or LessWrong. Why doesn’t LessWrong have a library, perhaps one that is curated by AI?

I have it in my backlog to build a library, based on a nested collapsible bulleted list along with a swarm of LLMs. (I have the software in a partially ready state!) It would create an article summary of your article, as well as link your article to the broader LessWrong knowledge base.

Your article would be summarised as below (CMIIW):

In the world there are ongoing culture wars, and noise overwhelming signal; so that one could defensibly take a stance that incoming information outside a trusted inner circle is untrustworthy and adversarial. Neutrality and neutral institutions are proposed as a difficult solution to this.

Neutrality refers to impartializing tactics / withdrawing above conflict / putting conflict in a box to facilitate cooperation between people

Neutral institutions / information sources: things that both seem and are impartial, balanced, incorruptible, universal, legitimate, trustworthy, canonical, foundational. We don’t have many, if any, neutral institutions right now.

There is a hope for a “foundation” or a “framework” or a “system of the world” that people actually trust and consider legitimate, but it would require effort.

Now for my real comments:

> Strong systems-of-the-world are articulable. They can defend themselves. They can reflect on themselves. They can (and should) shatter in response to incompatible evidence, but they don’t sputter and shrug when a child first asks “why”.

I love how well put this is. I am reminded of Wan Shi Tong’s Library in the Avatar series.

I think neutral institutions spring up whenever huge abundances are unlocked. For example, Google felt like a neutral institution when it first opened, before SEO happened and people realised it was a great marketing space. I think this is because of

courtesy of @Screwtape in Rationality Quotes Fall 2024.

A few new fronts that humanity has either recently unlocked or I feel like are heavily underutilized:

Retrieval Augmented LLMs > LLMs > Search

AI Agents > human librarians > not having librarians

Outliners > word processors > erasers > pens

Well, arguably it does: https://www.lesswrong.com/library

Library in the sense of “we collect texts written by other people” is: The Best Textbooks on Every Subject

I would like to see this one improved; specifically to have a dedicated UI where people can add books, vote on books, and review them. Maybe something like “people who liked X also liked Y”.

Also, not just textbooks, but also good popular science books, etc.
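A “people who liked X also liked Y” feature could start from simple co-occurrence counts. The following is only a minimal sketch under stated assumptions: the reader names, book titles, and the `also_liked` function are all hypothetical, and nothing here reflects how LessWrong actually stores votes.

```python
from collections import Counter, defaultdict
from itertools import permutations


def also_liked(likes, top_n=3):
    """Map each title to the titles most often liked by the same readers.

    `likes` is a hypothetical dict of reader -> set of liked titles.
    Ties are broken alphabetically so the output is deterministic.
    """
    co_counts = defaultdict(Counter)
    for titles in likes.values():
        # Every ordered pair of titles liked by one reader counts once.
        for a, b in permutations(sorted(titles), 2):
            co_counts[a][b] += 1
    return {
        title: [
            other
            for other, _ in sorted(
                counts.items(), key=lambda kv: (-kv[1], kv[0])
            )[:top_n]
        ]
        for title, counts in co_counts.items()
    }


# Made-up example data, for illustration only.
likes = {
    "reader_1": {"Book A", "Book B"},
    "reader_2": {"Book A", "Book B", "Book C"},
    "reader_3": {"Book A", "Book C"},
    "reader_4": {"Book B"},
}
recs = also_liked(likes)
print(recs["Book B"])
```

Raw co-occurrence favours books that are popular overall, so a real version would probably want to normalise by how often each book is liked at all.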

Curated. This was one of the more inspiring things I read this year (in a year that had a moderate number of inspiring things!)

I really like how Sarah lays out the problem and desiderata for neutrality in our public/civic institutional spaces.

LessWrong’s strength is being a fairly opinionated “university[1]” about how to do epistemics, which the rest of the world isn’t necessarily bought into. Trying to make LW a civic institution would fail. But this post has me more excited to revisit “what would be necessary to build good civic infrastructure” (where “good” requires both “be ‘good’ in some kind of deep sense” and “be memetically fit enough to compete with Twitter et al.”). One solution might be convincing Musk of specific policies rather than building a competitor.

I.e. A gated community with epistemic standards, a process for teaching people, and a process for some of those people going on to do more research.

Durable institutions find ways to survive. I don’t mean survival merely in terms of legal continuity; I mean fidelity to their founding charter. Institutions not only have to survive past their first leader; they have to survive their first leader themself! The institution’s structure and policies must protect against the leader’s meandering attention, whims, and potential corruptions. In the case of Elon, based on his mercurial history, I would not bet that Musk would agree to the requisite policies.

Interesting, but I’ve only skimmed it so I will need to come back. With that caveat made, I have had a couple of thoughts that keep recurring for me and that seem compatible or complementary with yours.

First, where do we define the margin between public and private? It strikes me that a fair amount of social strife revolves around a tension here. We live in a dynamic world, so it seems unlikely that the sphere of private actions will remain static; as the world changes (knowledge, applied knowledge driving technology change, movement of people resulting in cultural transmission and tensions...), there will be forces that shift the line between public and private.

While I’m not entirely sure it is the best framing, I do think of this in the form of externalities. Negative externalities are the more challenging form. What I think starts happening is that at t=0 some set of private activities is producing very little negative impact on others. But we find that by t=10 some of the elements in that set of private activities are producing a large enough total negative external effect that:

People are able to start seeing cause and effect

The costs to others are sufficient to overcome the organizational and transactional costs of using social infrastructures to seek relief for the harms. Those can be formal, in the sense of courts and requests for government law/regulation. But they can also be informal in various forms: the people engaging in the activities lose reputation, don’t get invited to the good parties any more, people don’t want to talk with them or be seen with them any more, perhaps even more aggressive responses.

At some point either most people accept that a new definition of “private” exists and the old ways have changed, or society reaches the point where those who have not adapted will be treated as criminal and removed from society.

The other thing I’ve been thinking about is related. One hears the “where’s my flying car, it’s the 21st Century already” quip now and then. But I think a better one might be: “It’s the 21st Century, why am I still living under an 18th Century form of government?”

I think these relate to some of your post in that a lot of the social conflict you point to is driven by the shifting margin between the public and private spheres of action. As that margin shifts, people use the government to address those new conflicts within the society. But few if any governments differ substantially from those that have existed for centuries. I would characterize that vision of government, even when thinking of representative democracies, as that of an actor/agent. Government takes actions, just like the private members of society do. It should function, as you say, in a neutral way. Part of the failing comes from the fact that government, being an actor/agent, has its own interests, agendas and biases.

That government as an active participant contrasts a bit with how I think most people think of markets. Markets don’t really do anything. They are simply an environment in which active entities come and interact with each other. Markets don’t set price or quality or even really type of item—these are all unplanned outputs. The market itself is indifferent to all those, it’s neutral in the sense you use that term.

Well, in the 21st Century might we not think that how governments are structured might also shift? While I am far from sure that the shift would be correctly called divestiture or privatization (which is what most people seem to think of when talking about fixing government, or for some, calling for increasing what it’s already doing), I do think the shift might be away from an acting entity and toward some type of passive environment that has some commonality with markets. In a very real sense governments are already a type of market setting, though not a price/money exchange one (the representatives are not quite putting out bids and offers on votes), and clearly there is a demand mediation and supply process going on. But currently the market-like aspect of government is about integrating the demands of voters/members of society, after which the government makes a decision and takes the actions it wants. I would think some areas might be suitable for removing the government as the actor and letting the actions be decentralized among the people. Probably not individual action; I suspect some sub-agent presence will exist to reduce organizational/transaction costs, but certainly the process would look more market-like and be a more neutral setting. That might well then remove a lot of the divisiveness and conflict we see with the existing “old school” forms of government.

That is all probably a bit poorly written and expressed but it’s a quick dump of a couple of not fully thought out ideas.

Love this!!! Inspecting the concept of neutrality as a software-like, process-driven institution is a very illuminating approach.

I would add that there is a very relevant discussion in the AI sphere which is profoundly connected to the points you are making: the existence of bias in AI models.

To identify and measure bias is in a sense to identify and measure lack of neutrality, so it follows that, to define bias, one must first be very rigorous on the definition of neutrality.

This can seem simple for some of the more pedestrian AI tasks, but can become increasingly sophisticated as we introduce AI as an essential piece in workflows and institutions.

AI algorithms can be heavily biased, datasets can be biased and even data structures can be biased.

I feel this is a topic which you can further explore in the future. Thank you for this!

There are notable differences between these properties. Usefulness and justice are quite different from the others (truth, accuracy, coherence). Usefulness (defined as suitability for a purpose, which is non-prescriptive as to the underlying norms) is different from justice (defined by some normative ideal). Coherence requires fewer commitments than truth and accuracy.

Ergo, I could see various instantiations of a library designed to satisfy various levels. Level 1 would value coherence. Level 2 would add truth and accuracy. Level 3: +usefulness. Level 4, +justice.
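As a rough illustration of how these cumulative levels stack, here is a minimal sketch. It assumes each library entry has already been tagged with the properties it satisfies; how anyone would actually judge “truth” or “justice” is left entirely open, and the names `LEVEL_ADDITIONS`, `criteria_for`, and `highest_level` are hypothetical.

```python
# Properties each level adds on top of the previous one, per the comment above.
LEVEL_ADDITIONS = [
    ("coherence",),         # Level 1
    ("truth", "accuracy"),  # Level 2
    ("usefulness",),        # Level 3
    ("justice",),           # Level 4
]


def criteria_for(level):
    """Return every property required at `level` (levels are cumulative)."""
    if not 1 <= level <= len(LEVEL_ADDITIONS):
        raise ValueError(f"level must be between 1 and {len(LEVEL_ADDITIONS)}")
    required = []
    for added in LEVEL_ADDITIONS[:level]:
        required.extend(added)
    return required


def highest_level(entry_properties):
    """Return the highest level whose criteria the entry fully satisfies.

    `entry_properties` is an assumed set of property tags; 0 means the
    entry does not even qualify for Level 1.
    """
    level = 0
    for candidate in range(1, len(LEVEL_ADDITIONS) + 1):
        if all(p in entry_properties for p in criteria_for(candidate)):
            level = candidate
        else:
            break  # a missed level blocks all higher ones
    return level


print(criteria_for(3))
print(highest_level({"coherence", "truth", "accuracy"}))
```

Because the levels are cumulative, an entry tagged only with coherence and justice would still sit at Level 1 in this sketch; whether that ordering is the right one is exactly the kind of commitment each library would have to make.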

One point I’m not sure about with the idea of neutrality is neutrality of process or of outcome. Or would that distinction not matter to your interests here?

From the POV of this piece, whether you want process or outcome neutrality...depends. You’re being “neutral” so you can enlist the trust and cooperation of a varied range of people, in order to pursue some purpose that all of you value. Do the relevant people care more about process or outcome?

Another way to look at it is that it’s ultimately always a question of process. “Ensuring equality/neutrality of outcome” means “I propose a process that makes adjustments so that outcomes end up in a desirable “neutral” configuration.” Then the questions are: a.) does that process result in that “neutral” configuration? b.) is that configuration of outcomes what we want? c.) is the process of adjustment itself objectionable? (e.g. “whether or not material equality is desirable, I don’t believe in forced redistribution to equalize wealth”).

When you are building an institution that aims at neutrality, ultimately you’re proposing a set of processes, things the institution will do, and hoping that these processes engender trust. “I can’t accept the likely outcome of this process” and “I can’t accept the way this process works” are both ways trust can break down.

Our current condition is a product of our material circumstances, and those definitely aren’t permanent in their essential character, as many people have variously noted. Things are still very much in flux, and any eventual medium-to-long term frameworks would significantly depend on (possibly wildly divergent) trajectories that major trends will take in the foreseeable future. Of course, even marginal short-term improvements to the memetic hellscape would be welcome, but seriously expecting something substantial and lasting is premature.

I think the foundational truths that neutral institutions or frameworks might embody come at the cost of marginalizing fringe ideas, which may not fit within the current paradigm. However, I am more optimistic about the current chaos, as it could signify that a clash of ideas is taking place.

There are two kinds of beliefs, those that can be affirmed individually (true independently of what others do) and those that depend on others acting as if they believe the same thing. They are, in other words, agreements. One should be careful not to conflate the two.

What you describe as “neutrality” seems to me to be a particular way of framing institutional forbearance and similar terms of cooperation in the face of the possibility of unrestrained competition and mutual destruction. When agreements collapse, it is not because these terms were unworkable (except in the trivial sense that, well, they weren’t invulnerable to gaming and so on) but because cooperation between humans can always break down.

Right. Some such agreements are often called social contracts. One catch is that a person born into them may not understand their historical origin or practical utility, much less agree with them.