Wow, what a week. We had the Executive Order, which I read here so you don’t have to and then I have a tabulation of the reactions of others.

Simultaneously there was the UK AI Summit.

There was also robust related discussion around Responsible Scaling Policies, and the various filings companies did in advance of the Summit.

I touched on Anthropic’s RSP in particular in previous weeks, but I did not do a sufficiently close analysis and many others have offered more detailed thoughts as well, and the context has evolved.

So I am noting that I am not covering those important questions in the weekly roundup, and they will be covered by one or more later distinct posts. I also potentially owe an after action report from EA Global Boston, if I can find the time.

This post is instead about everything else.

Table of Contents

While top sections of this post are highlighted in bold, if you read one thing this week, I would read On the Executive Order.

Introduction.

Table of Contents.

Language Models Offer Mundane Utility. Which ones offer the most?

Language Models Don’t Offer Mundane Utility. Or so say many civilians.

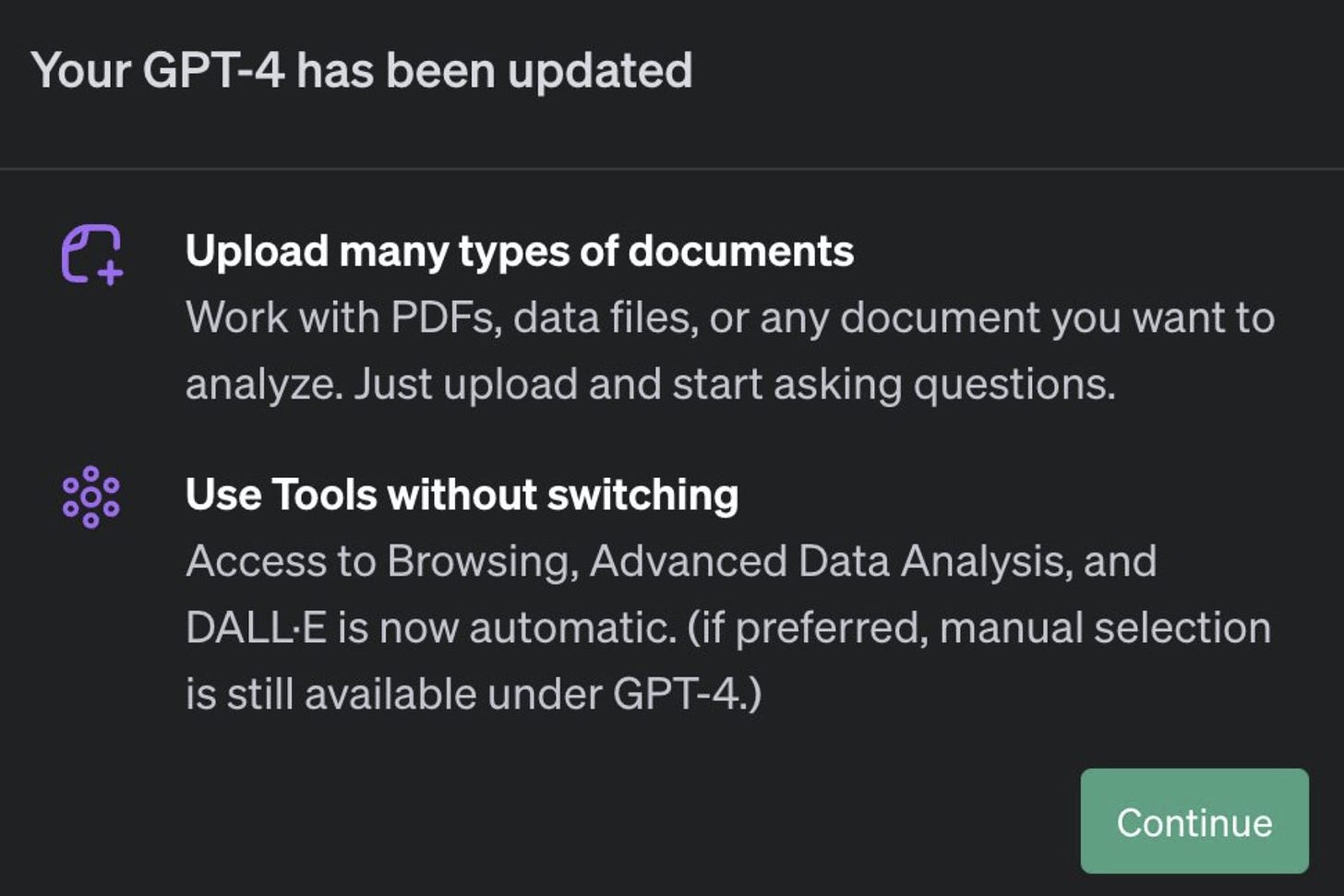

GPT-4 Real This Time. Use all the ChatGPT features at once, coming soon.

Fun With Image Generation. I want my tank man.

Best Picture. Mission Impossible: Make Biden worry about AI.

Deepfaketown and Botpocalypse Soon. I’ve read (summaries of) your work.

They Took Our Jobs. Look how many are at risk, says yet another study.

Get Involved. UK Model Taskforce, Our World in Data and Open Phil, still.

OpenAI Frontier Risk and Preparedness Team. Looks good. They are hiring too.

Introducing. New AlphaFold, Time(Series)GPT, Phind as an LLM for code.

In Other AI News. Chinese overview of alignment, model weight theft methods.

Quiet Speculations. The branching paths of our possible futures.

The Quest for Sane Regulation. Tyler says do nothing, California explains why.

The Week in Audio. Shane Legg, Paul Christiano, Demis Hassabis.

Rhetorical Innovation. Make it as simple as possible, but no simpler.

Open Source AI is Unsafe and Nothing Can Fix This. 1918 flu edition.

Aligning a Smarter Than Human Intelligence is Difficult. AI hides its thoughts.

People Are Worried About AI Killing Everyone. Prove it is safe? What a concept.

Please Speak Directly Into This Microphone. The most extreme EO take of all.

The Lighter Side. Thank you for reading.

Language Models Offer Mundane Utility

Which models offer the best mundane utility?

The article emphasizes that GPT-4 is ‘slow and expensive’ opening up room for competition. It is amazing how quickly we are spoiled, but indeed it is slow and expensive compared to the competition.

For most purposes, I assert there is no comparison. The marginal cost of using GPT-4 for human interactions is very close to zero. If a human is reading the words, do not pinch pennies. Speed can still be a question, so in some cases you would want to use GPT-3.5 or Claude Instant.

Things get more interesting when humans will not see the words. If you are simulating lots of characters in an open world, or doing a study, or otherwise going industrial in size, then the cost can add up fast. At that point, it makes sense that a non-commercial model would be enough cheaper to get an edge in some spots. As the post notes, it makes sense then to get less ‘monogamous’ on model use, the same way I use a mix of GPT-4 and Claude-2 and occasionally Bard or Perplexity.

Rowan Cheung recommends using the ChatGPT plug-ins VoxScript and Whimsical Diagrams. VoxScript is his choice for web browsing, Whimsical Diagrams displays concepts via graphs. He then suggests this instruction: “Explain [topic] extensively. Simplify the concepts and visuals to make complex topics easier to understand and engaging. Then turn it into a mind map.”

Get “Hallucination-Free” answers to legal questions, via LexisNexis.

College students adopting new AI technologies faster than professors, surprising no one. How much you use them and how much you get out of them matters, so the gap is bigger than the pure usage statistics. And students mostly have no intention of stopping, even if use is technically banned:

Lauren Coffey (Inside Higher Ed): According to the study, the majority of students that identify as AI “users” (75 percent) said if their instructor or institution banned generative AI writing tools, they would be at least “somewhat likely” to continue using the tools. Half of students that were “non-users” also stated they would be somewhat likely to use the AI tools if they were banned.

Language Models Don’t Offer Mundane Utility

Regular people are often not so impressed? Reactions are so weird.

Samswara: There needs to be a word for the effect where normal people aren’t really impressed with GPT/Dalle type abilities. I’ve almost never seen someone normal impressed by it.

Nathan: Naaah I’ve shown it to several normies and they’ve been impressed. “Wait it can just write poems?”

Ara: It’s a communication and education issue. I got my Normal friend hooked onto GPT by giving her a trail from my phone to teach her Spanish in spoken German with it and also analyzed her texts in a bad situationship she was so impressed she is now on the paid plan.

Don Wood: Every time I try to explain it to someone it sounds fake; so if I had to guess, it’s that they don’t understand that it is in fact real and that it’s able to do so many things, that it would take a few hours to even get into it fully in a believable way. Also if your not fairly tech savvy in general, the world can seem like it’s impossible to parse a truth from a lie ( Ty Tech companies who don’t try, news companies who lie and governments who encourage it ) / fake promise; so from this confused perspective on the world, why believe?

‘killEmAll’: I noticed this too, it just defies understanding how ppl can’t understand/see the scale of the breakthrough.

hump then fall era: I showed my grandpa a generative image by asking him for a vivid image he remembered. He describes a moon over an ocean. I do it. He goes “no. Totally wrong. U need to move the moon”

ChatGPT: “AIpathy” – a combination of “AI” and “apathy,” suggesting indifference towards AI advancements.

It is proven that some people try GPT-4 and DALLE-3 and do not come away impressed. They look for the flaws, find something to criticize, rather than trying to understand what they are looking at. If you want to not be impressed by something, if you are fully set in your ways, then it can take a lot to shake you out of it. I get that. I still don’t fully get the indifference reaction, especially to image models. How is this not completely crazy that we can get these images on demand? The moon is in the wrong place so who cares?

It will tell you what it thinks you will react well to, not what you want to hear.

Patrick McKenzie: Asking ChatGPT for exercise advice and, after the usual fairly anodyne bulleted list of not-too-much-alpha, got something which surprised me:

GPT-4: Given your background, you’re likely familiar with the value of a diversified portfolio; similarly, a well-rounded approach to health and fitness is often most effective. Confidence in this advice: 85%.

Patrick McKenzie: After recovering from the surprise, I remembered that I had a brief statement about expertise and appropriate levels of detail in my user instructions.

That wasn’t the only surprising thing, though. This is approximately what I’d expect a not-terribly-sophisticated sales rep to come up with when trying to butter up or rapport build given the same gloss on professional background and ~no deep knowledge of anything related to it.

(The sales rep would not put a confidence interval on it. Probably.)

They tried to hack the ChatGPT API. What they got back was missing an h.

GPT-4 Real This Time

Sometimes a thing happens that surprises you, but only because its failure to have happened already was also surprising and forced you to update? Thus, The Unexpected Featuring.

Rowan Cheung: [On October 29] OpenAI began rolling out a new update of ChatGPT that includes:

1. The ability to upload and chat with any PDF document

2. A new ‘All tools’ feature, allowing you to use all features without switching chats.

People don’t realize how powerful ‘All Tools’ is yet. It allows you to now upload an image (of anything) to GPT-4 without saying what the object is and generating any other variation.

This is big. You can now combine vision with image generation with web browsing with reading PDFs. A lot of new use cases will open up as we explore the space. Rowan first points to uploading an image and asking for variations on it, which seems exciting. Another would be asking for images based on browsing or a PDF, or asking to browse the web to verify information from a PDF. Even if these things could have been done manually before, streamlining the process is in practice a big game.

I don’t have access to it yet, so I won’t know how big until I have time to play with it. I will probably start with image manipulation because that sounds like fun, or with PDF stuff for mundane utility to see if it can replace Claude-2 for that.

Near Cyan: heard a rumor that several seed-stage AI investors were wiped out last night.

All thirteen of their ChatWithMyPDF investments marked to zero – what a time to be alive, wow.

My own fund is doing great however – I wrote a cash sweep script to move any unallocated capital into 100% nvidia call options the investors love me – they say they’ve never seen this kind of innovation before. It’s an entirely new paradigm.

Alex Ker: Many startups just died today. Because OpenAI added PDF chat. You can also chat with data files and other document types.

Zachary Nado: Been saying for a while that OpenAi will just incorporate all the good wrappers natively, no idea why you would ever build an AI company on top of them that isn’t specialized into some vertical.

That is what is called a highly correlated portfolio. A company that gets wiped out when their product gets incorporated into a Microsoft product (or Google, or strictly speaking here OpenAI) is a classic failure mode indeed. An investor who gets wiped out needs a better understanding of diversification.

Also the Nvidia call options aren’t doing that great recently? I am guessing this is the market being dumb but something about it staying crazy longer than you can stay solvent.

As for the companies, I do not think it is crazy to quickly build out a feature and prey that you can make that into something valuable before it gets incorporated, but it is quite the risk, and it happened here.

Fun with Image Generation

Claim that saying things like Tank Man from Tiananmen Square was too sensitive a topic, but keep insisting and DALLE-3 will often give you what you want, and another claim in response that asking for a meme or scene or similar is an easier workaround. The generalizations do not seem very general.

Judge dismisses most of the claims in Andersen v. Stability AI, on standard existing copyright law principles. Plaintiffs are told to come back with specific works whose copyrights were violated.

Best Picture

Perhaps it was I who asked the wrong questions and did not appreciate how any of this works?

dweeb: are you fvcking kidding me.

AP: The issue of Al was seemingly inescapable for Biden. At Camp David one weekend, he relaxed by watching the Tom Cruise film “Mission: Impossible – Dead Reckoning Part One.” The film’s villain is a sentient and rogue Al known as “the Entity” that sinks a submarine and kills its crew in the movie’s opening minutes.

“If he hadn’t already been concerned about what could go wrong with Al before that movie, he saw plenty more to worry about,” said Reed, who watched the film with the president.

Near Cyan: AI doomers spent years crafting complex argumentation @ the govt, but actually should have just told them to watch Mission Impossible instead.

Andrew Rettek: do I need to watch this now to better understand how the government will regulate AI?

Jacques: As soon as I watched that movie, I wondered why AI risk folks weren’t talking about it

Luke Muehlhauser: If this is true then I declare *Dead Reckoning: Part One* the best movie of all time, followed by *The Day After*.

James Miller: Doctor Strangelove has to be number 2.

There was little discussion of Dead Reckoning among the worried, with my mentions being the most prominent I can recall and also not that prominent.

I have now put up a distinct post on LessWrong that includes only my thoughts on Mission Impossible: Dead Reckoning, without the other films.

As a refresher, here was my spoiler-free review:

There may never be a more fitting title than Mission Impossible: Dead Reckoning. Each of these four words is doing important work. And it is very much a Part 1.

There are two clear cases against seeing this movie.

This is a two hour and forty five minute series of action set pieces whose title ends in part one. That is too long. The sequences are mostly very good and a few are great, but at some point it is enough already. They could have simply had fewer and shorter set pieces that contained all the best ideas and trimmed 30-45 minutes – everyone should pretty much agree on a rank order here.

This is not how this works. This is not how any of this works. I mean, some of it is sometimes how some of it works, including what ideally should be some nasty wake-up calls or reality checks, and some of it has already been established as how the MI-movie-verse works, but wow is a lot of it brand new complete nonsense, not all of it even related to the technology or gadgets. Which is also a hint about how, on another level, any of this works. That’s part of the price of admission.

Thus, you should see this movie if and only if the idea of watching a series of action scenes sounds like a decent time, as they will come in a fun package and with a side of actual insight into real future questions if you are paying attention to that and able to look past the nonsense.

If that’s not your cup of tea, then you won’t be missing much.

MI has an 81 on Metacritic. It’s good, but it’s more like 70 good.

We now must modify the paragraph about whether to see this movie. Given its new historical importance, combined with its action scenes being pretty good, if you have not yet seen it you should now probably see this movie. And of course it now deserves a much higher rating.

For more motivation, here’s a scene from the movie?

Person 1: It’s a rogue AI.

Person 2: It hacked DoD.

Person 3: And NSA.

Person 4: It’s everywhere.

Person 5: Yet nowhere.

Person 6: A paradox.

Person 7: A riddle.

Person 1: Now it’s coming.

Person 2: For us.

Person 3: And sir?

Person 4: This time.

Person 5: There’s no stopping it.

I mean, that’s great. Further thoughts at the LW post version.

It is presumably too late, but it seems like an excellent time to make extra effort to get into the writers’ room for Part 2 to ensure that perhaps some of this could be how some of this works and we can help send the best possible message while still making a good popcorn flick. I especially want to see the key turn out to be completely useless.

Deepfaketown and Botpocalypse Soon

Patrick McKenzie reports: Expected this but did not expect it to happen so quickly: someone has cobbled together an AI toolchain which scrapes publicly available writing of a target on a topic, produces an ~undergrad level review of it, then publishes, probably to let you butter them up via email.

I will not link to it because I suspect it is not ultimately pro-social behavior. (It came to my attention because I was a test case (?), and due to perhaps unexpected interaction of software in the toolchain, I got an automated notification that my work was mentioned.)

A technique for cold emails since time immemorial has been to include a Proof of Humanity prominently at the top. A thing many people, including me, advised was looking at someone’s public writing and making it obvious you’ve actually read it. This is the superstimulus of that.

“Would it read to a busy professional as plausibly human?”

As someone who has read a lot of blog spam over the years, with production functions that involve varied levels of human activity, this is clearly a better artifact than most blog spam.

The average person available on a freelance writer site cannot confidently BS their way through the typical thing I write about. If you dropped me in the middle of this artifact, it reads as produced by an ~undergrad writer who *has enough understand to engage my brain.*

I neither feel strongly positively or negatively about this development; mostly mentioning it here as a milestone marker for emerging commercial productization of AI and as an FYI to other people who may suddenly gain surprisingly engaged, articulate fans with infinite capacity.

Mortiz Maria Thoma: A link would be appreciated. It’s actually very enabling for human outreach.

Patrick McKenzie: I appreciate that you think that and appreciate at commercial scales this will be effective but I will not link to it because getting 100 emails from this toolchain a week would DDOS my brain given my commitment to take seriously software people who write me.

This seems tricky to do but clearly doable, provided you are content with undergraduate-level results and not fazed by errors and omissions of all sorts. I too did not expect it quite this soon but such things are coming.

Mortiz makes a common mistake here. In a costly signaling game, or when such signals are used as gates, the instinctive play is to reduce the cost of the signal. That is good for the individual in isolation. Too much of it destroys the purpose of the costly signal, the equilibrium fails, and what replaces it could be far worse.

Would I use such a tool on occasion for various purposes? If quality is good enough then yes, and mostly not for cold emails. What will humans do in the future in this cold email situation? Presumably step up their game, show deeper understanding and more humanness to get around this and other AI substitutes, until such time as humans lose the ability to win that battle. Or alternatively we will have to find some other way to costly signal. Two obvious ones are money, which is always an option, or some form of hard-to-fake reputation that we can point to.

A third response is to react to such emails in a way that only profits those who we want to engage with, a principle which generalizes. I do not currently have much of a barrier to an AI or person using AI getting my attention, but what does it profit them?

I also have little barrier to humans getting my attention, including in ways it would indeed profit them, and yet almost no humans use them. Most other interesting or valuable people also have this. Yet almost no one reaches out. I do not expect this to change (much or for very long) now that I’ve noticed this out loud.

Qiaochu Yuan reports that people on Stack Exchange keep trying to spam its math questions with terrible and highly obviously wrong GPT-generated answers, which get removed continuously. Once again, demand for low quality fakes dominates, except here it is unclear where the demand is coming from. Who would do this? Why this place, in particular?

GPT4V says a check for $25 is actually for $100,000. Adversarial attacks, they work.

New York Times keeps attempting to slay irony.

Michiko Kakutani (NY Times): A.I. Muddies Israel-Hamas War in Unexpected Way: Fakes related to the conflict have been limited and largely unconvincing, but their presence has people doubting real evidence.

I mean, bullshit? The information environment is awful for reasons that have nothing to do with AI and often have a lot more to do with the New York Times. The fakes and false information, both deep and otherwise, are mostly low quality, but various sources – again, this includes you, New York Times – are falling for or choosing to propagate them anyway, and that is making people very reasonably not trust anything, and none of that has that much to do with AI, not yet. Target demand, not supply.

They Took Our Jobs

AIPI releases another ‘look at all these jobs at risk’ studies. Robin Hanson once again offers to bet against such predictions.

AIPI released a new report and interactive map on job automation by AI today, focusing on the impact of automation at the state level. Our analysis suggests that more than 4.4 million jobs in California, 3.2 million jobs in Texas, and 2.3 million jobs in New York are significantly at risk of automation by AI.

Our research extends a Goldman Sachs report from March which found low-skill white collar labor to be the most at risk of automation, with 46% of jobs in the office and administrative support work field, 44% in legal work, 35% in business and financial operation jobs, and 31% in sales jobs being significantly exposed to automation.

Retraining 20% of the American workforce is no small task. Job loss due to automation in the short-run threatens to create a lot of economic turmoil for regular Americans, even if technology can create jobs in the long run.

It is easy to conflate ‘jobs at risk’ with ‘jobs that will go away’ with ‘jobs that will change and maybe people move around.’ Even if AI becomes technologically capable of automating 20% of all jobs, that will not mean 20% of jobs get automated, nor that all those people will ‘need retraining’ even if AI did do that.

I also call upon all those making such studies to make actual predictions backed by actual dates. What does it mean to be vulnerable to automation? Does that mean this year? In five years? In twenty? How many do they expect to actually get automated? In short, I have no idea how to operationalize this report, and all that detailed work goes mostly to waste.

Fake news? USA staff writers accuse the paper of using AI-generated fake newspaper articles to intimidate their union workers in the wake of a walkout. The company denies it.

Get Involved

The UK Model Taskforce is hiring. Excellent choice if you are a good match.

Not AI, but Our World In Data is hiring a communications and outreach manger.

Open Philanthropy extends its application deadline to November 27 for jobs on its catastrophic risk team.

OpenAI Frontier Risk and Preparedness Team

OpenAI announces the Frontier Risk and Preparedness Team. Here’s their announcement:

As part of our mission of building safe AGI, we take seriously the full spectrum of safety risks related to AI, from the systems we have today to the furthest reaches of superintelligence. In July, we joined other leading AI labs in making a set of voluntary commitments to promote safety, security and trust in AI. These commitments encompassed a range of risk areas, centrally including the frontier risks that are the focus of the UK AI Safety Summit. As part of our contributions to the Summit, we have detailed our progress on frontier AI safety, including work within the scope of our voluntary commitments.

We believe that frontier AI models, which will exceed the capabilities currently present in the most advanced existing models, have the potential to benefit all of humanity. But they also pose increasingly severe risks. Managing the catastrophic risks from frontier AI will require answering questions like:

How dangerous are frontier AI systems when put to misuse, both now and in the future?

How can we build a robust framework for monitoring, evaluation, prediction, and protection against the dangerous capabilities of frontier AI systems?

If our frontier AI model weights were stolen, how might malicious actors choose to leverage them?

We need to ensure we have the understanding and infrastructure needed for the safety of highly capable AI systems.

To minimize these risks as AI models continue to improve, we are building a new team called Preparedness. Led by Aleksander Madry, the Preparedness team will tightly connect capability assessment, evaluations, and internal red teaming for frontier models, from the models we develop in the near future to those with AGI-level capabilities. The team will help track, evaluate, forecast and protect against catastrophic risks spanning multiple categories including:

Individualized persuasion

Cybersecurity

Chemical, biological, radiological, and nuclear (CBRN) threats

Autonomous replication and adaptation (ARA)

The Preparedness team mission also includes developing and maintaining a Risk-Informed Development Policy (RDP). Our RDP will detail our approach to developing rigorous frontier model capability evaluations and monitoring, creating a spectrum of protective actions, and establishing a governance structure for accountability and oversight across that development process. The RDP is meant to complement and extend our existing risk mitigation work, which contributes to the safety and alignment of new, highly capable systems, both before and after deployment.

Sam Altman: we are launching a new preparedness team to evaluate, forecast, and protect against AI risk led by @aleks_madry. We aim to set a new high-water mark for quantitative, evidence-based work.also, if you are good at thinking about how the bad guys think, try our new preparedness challenge focused on novel insights around model misuse.

Jan Leike: If you are worried about risks from frontier model capabilities, consider applying to the new Preparedness team!

If we can measure exactly how dangerous models are, the conversation around this will become more grounded. Exciting that this new team is taking on the challenge!

Plus, if you have some creative energy for misuse of AI, you can apply for $25k in API credits to make the scariest demos!

They are hiring for National Security Threat Researcher and Research Engineer.

Great stuff. As they say, this complements and extends existing risk mitigation work. It is not a substitute for other forms of safety work. It is in no way a complete solution to anything. It is a way to be informed about risk, and find incremental mitigations along the way, while another department works to solve alignment.

From what I can tell, this announcement is unadulterated good news. Thank you.

Introducing

USAISI, to be established by Commerce to lead the US government’s efforts on AI safety and trust, particularly for evaluating the most advanced AI models, as detailed in the executive order.

TimeGPT, a foundation model for time-series forecasting. Might have a narrow good use case. I am skeptical beyond that.

GOAT: Who is the Greatest Economist of all Time and Why Does it Matter? which is an AI-infused new book by Tyler Cowen. You can converse with the book instead of or alongside reading it. Manifold users predict it would probably be a good use of my time to check it out, so I probably will once I have the spare cycles. For now I’ve loaded it into Kindle, although I do plan to also use the AI feature as it feels interesting.

New version of AlphaFold that expands it to other biometric classes.

Radical Ventures presents a framework for VC to evaluate the risks posed by potential AI investments. I am curious to hear from VCs if they found this at all enlightening, from my perspective it does not add value but the baseline matters.

Phind (direct), which claims to be better than GPT-4 at coding and 5x faster. I’ve added it to AI tools, I’ll try it when I’m coding next. Always be cautious with extrapolating from benchmarks.

In Other AI News

From China with many authors, a new paper: AI Alignment: A Comprehensive Survey.

Abstract: AI alignment aims to make AI systems behave in line with human intentions and values. As AI systems grow more capable, the potential large-scale risks associated with misaligned AI systems become salient. Hundreds of AI experts and public figures have expressed concerns about AI risks, arguing that “mitigating the risk of extinction from AI should be a global priority, alongside other societal-scale risks such as pandemics and nuclear war”.

To provide a comprehensive and up-to-date overview of the alignment field, in this survey paper, we delve into the core concepts, methodology, and practice of alignment. We identify the RICE principles as the key objectives of AI alignment: Robustness, Interpretability, Controllability, and Ethicality.

Guided by these four principles, we outline the landscape of current alignment research and decompose them into two key components: forward alignment and backward alignment.

The former aims to make AI systems aligned via alignment training, while the latter aims to gain evidence about the systems’ alignment and govern them appropriately to avoid exacerbating misalignment risks. Forward alignment and backward alignment form a recurrent process where the alignment of AI systems from the forward process is verified in the backward process, meanwhile providing updated objectives for forward alignment in the next round.

On forward alignment, we discuss learning from feedback and learning under distribution shift. On backward alignment, we discuss assurance techniques and governance practices that apply to every stage of AI systems’ lifecycle.

We also release and continually update the website (this http URL) which features tutorials, collections of papers, blog posts, and other resources.

I don’t remember seeing the backward versus forward taxonomy before, and find it promising. I do not currently have the time to look at the paper in detail, but have saved it for potential later reading, and would be curious to get others’ takes on its contents.

UK Government publishes extensive accessible paper on the potential future capabilities, benefits and risks from AI. As government documents like this go it is remarkably good, especially in how plainly it speaks. The existential risk portion focuses on loss of human control, via us giving it control and via AIs working to get it. As always, one can quibble, especially about what is missing, and if you are reading this post you already know everything the paper is here to say, but good job.

Davidad issues mission statement at ARIA: Mathematics and modelling are the keys we need to safely unlock transformative AI.

Future AI systems will be powerful enough to transformatively enhance or threaten human civilisation at a global scale → we need as-yet-unproven technologies to certify that cyber-physical AI systems will deliver intended benefits while avoiding harms.

Given the potential of AI systems to anticipate and exploit world-states beyond human experience or comprehension, traditional methods of empirical testing will be insufficiently reliable for certification → mathematical proof offers a critical but underexplored foundation for robust verification of AI.

It will eventually be possible to build mathematically robust, human-auditable models that comprehensively capture the physical phenomena and social affordances that underpin human flourishing → we should begin developing such world models today to advance transformative AI and provide a basis for provable safety.

Huge if true! Absolutely worth trying. I agree that the approach seems underexplored. I remain skeptical that such a thing is possible.

Engage: Our next step is to formulate a programme that will direct funding across research disciplines and institutions toward a focused objective within this opportunity space. Sign up here for updates, or to inform the programme thesis. You can upload a short 2 page PDF – we will read anything you send.

Zan Tafakri offers another criticism of the Techno-Optimist Manifesto, pointing out that the thing to be optimistic about is human knowledge rather than technology per se, and links to many others, which I hope closes the book on that.

AI healthcare startup Olive AI, once valued at $4 billion, has sold itself off for parts.

There are 40 ways to steal your AI model weights, from RAND (working paper).

Quiet Speculations

Eliezer Yudkowsky short story slash prediction of a possible future. Like most other visions of how there are AIs around and the people remain alive it does not fully work on reflection and it is very much a fully doomed world, but it is an illustrative dystopia nonetheless.

How fast will compute capabilities improve? Rather fast.

Suhail (Founder, Playground AI): Forget H100s, B100s supposedly launch late next year with +70% gains. I don’t think people fully grasp the exponential computing improvements ahead. The H100 went to 67 tflops vs 19.5 (A100).

Suhail would say this means we must fight hard against any kind of cap on compute. I would say this means we urgently need one. So strong disagreement and also common ground.

John Pressman predicts 80% chance we will be clearly on track to solve alignment within the year and most parties will agree on that, but predicts few will change their positions as a result. I do not know how to operationalize this into a bet, unfortunately, because I do not expect to agree on resolution criteria?

Jon Stokes already does not really know what is happening, the world is too confusing outside of his bubble of expertise, and speaks of widespread epistemic crisis, and worries that AI will not only make our epistemic crisis worse but also disintegrate our communities and disenfranchise creators.

Is there an epistemic crisis? In some ways I think very much so, our discourse standards and epistemic standards have decayed greatly, in ways that are unrelated to AI. However we also have vastly superior access to information, and forget the many ways in which the past was impoverished on such fronts the same way it was impoverished in material goods. Vital things have been lost that must be recaptured, without applying the Lost Golden Age treatment where it does not apply.

His main argument is that AI instead has the power to help make this and also the position of creators better rather than worse. He offers a pitch that his company symbolic.ai can not only help but be the kids that prove it wrong by showing what can be done when the task is in the right hands. I do not think it works that way, offering even an excellent product cannot prove this because what matters is what happens with things in the typical hand rather than when things are io the right hands, but I do hope the project works out.

Andrew Critch proposes a taxonomy of ways human extinction could happen. He has more detail, I attempt to streamline here.

Type 1 failure: No one particular group is primarily responsible.

Type 2 failure: Extinction caused by a particular group. They did not expect a major impact on society.

Type 3 failure: Extinction caused by a particular group. They did expect a major impact on society, but did not expect to pose substantial risk or harm.

Type 4 failure: Extinction caused by a particular group. They knew it would cause harm and did not care enough to stop.

Type 5 failure: Extinction caused by a particular group. They were a non-state actor intentionally attempting to cause harm.

Type 6 failure: Extinction caused by a particular group. They were a state actor intentionally attempting to cause harm.

Critch has (30%/10%/15%/10%/10%/10%) on each of these scenarios, for a total doom percentage of 85%.

The lines blur a lot between scenarios. If you build it and we all die because you knew someone else was about to also build it and they would have also gotten everyone killed, I think technically that is Type 1 here, but that feels weird? If group A would have gotten us all killed, but in response group B does something else that gets us killed faster, either instead of or in addition to? If one group creates something that could then be unleashed by a variety of actors, and then one of them does?

In particular, Type 1 does not seem to differentiate well between importantly distinctive scenarios. And I don’t typically find it helpful to focus on who was to blame in a proximate cause sense, rather than asking how we could prevent it. I do however think that any such taxonomy will always have similar issues, and this illustrates one of several ways in which people narrow down their worrisome scenarios, before finding a way to dismiss the one scenario they choose to focus on.

The Quest for Sane Regulations

Tyler Cowen (Bloomberg) stands on one foot and tells Congress that it should not regulate AI in any way, instead it should be accelerationist via tactics such as high-skilled immigration and permitting reform. Any regulation of any kind that might get in the way, he says, would be premature. He says only once the technologies are mature and we ‘see if we have kept our lead over China’ should we consider regulation. No mention of existential risks or the other serious downsides, or any of the other considerations in play, no arguments offered. I would respond, but there’s nothing to respond to?

California is actively considering regulating on its own, notwithstanding Biden’s efforts, which is almost never good news. The proposal in question is AB-331, which I believe is an anti-algorithmic-discrimination bill, enforced via lawsuits.

Now this is the kind of regulatory burden I can get behind an objection to.

22756.

As used in this chapter:

(a) “Algorithmic discrimination” means the condition in which an automated decision tool contributes to unjustified differential treatment or impacts disfavoring people based on their actual or perceived race, color, ethnicity, sex, religion, age, national origin, limited English proficiency, disability, veteran status, genetic information, reproductive health, or any other classification protected by state law.

(b) “Artificial intelligence” means a machine-based system that can, for a given set of human-defined objectives, make predictions, recommendations, or decisions influencing a real or virtual environment.

(c) “Automated decision tool” means a system or service that uses artificial intelligence and has been specifically developed and marketed to, or specifically modified to, make, or be a controlling factor in making, consequential decisions.

(d) “Consequential decision” means a decision or judgment that has a legal, material, or similarly significant effect on an individual’s life relating to the impact of, access to, or the cost, terms, or availability of, any of the following…

(e) “Deployer” means a person, partnership, state or local government agency, or corporation that uses an automated decision tool to make a consequential decision.

I complained about the definition of AI in the executive order, but here AI literally means any machine-based system that can make recommendations or decisions, so yes this literally means any machine system at all. An excel spreadsheet counts.

Here consequential decision is anything that has any material consequence on a wide variety of things, any deployer is anyone who uses such a tool to make any consequential decision, so basically anyone who does anything ever.

And what must they do in order to use any computer tool to make almost any decision that impacts another person in some way? Note that it is not the developer of the tool that must do this, it is the deployer:

(a) On or before January 1, 2025, and annually thereafter, a deployer of an automated decision tool shall perform an impact assessment for any automated decision tool the deployer uses that includes all of the following:

(1) A statement of the purpose of the automated decision tool and its intended benefits, uses, and deployment contexts.

(2) A description of the automated decision tool’s outputs and how they are used to make, or be a controlling factor in making, a consequential decision.

(3) A summary of the type of data collected from natural persons and processed by the automated decision tool when it is used to make, or be a controlling factor in making, a consequential decision.

(4) A statement of the extent to which the deployer’s use of the automated decision tool is consistent with or varies from the statement required of the developer by Section 22756.3.

(5) An analysis of potential adverse impacts on the basis of sex, race, color, ethnicity, religion, age, national origin, limited English proficiency, disability, veteran status, or genetic information from the deployer’s use of the automated decision tool.

(6) A description of the safeguards implemented, or that will be implemented, by the deployer to address any reasonably foreseeable risks of algorithmic discrimination arising from the use of the automated decision tool known to the deployer at the time of the impact assessment.

(7) A description of how the automated decision tool will be used by a natural person, or monitored when it is used, to make, or be a controlling factor in making, a consequential decision.

(8) A description of how the automated decision tool has been or will be evaluated for validity or relevance.

…

(a) (1) A deployer shall, at or before the time an automated decision tool is used to make a consequential decision, notify any natural person that is the subject of the consequential decision that an automated decision tool is being used to make, or be a controlling factor in making, the consequential decision.

…

22756.2.

(a) (1) A deployer shall, at or before the time an automated decision tool is used to make a consequential decision, notify any natural person that is the subject of the consequential decision that an automated decision tool is being used to make, or be a controlling factor in making, the consequential decision.

…

22756.4.

(a) (1) A deployer or developer shall establish, document, implement, and maintain a governance program that contains reasonable administrative and technical safeguards to map, measure, manage, and govern the reasonably foreseeable risks of algorithmic discrimination associated with the use or intended use of an automated decision tool.

So yes, as I read this, if in California under this law you want to use almost any tool including a spreadsheet to help you make choices that matter, you – yes, you – will first need to file all of these things for each tool, and have a governance program, and notify everyone impacted, and so on.

If actually enforced as written this would be quite the epic pain in the ass, in exchange for very little in the way of benefits. It is an absurdly bad bill.

Of course, laws in California are often more of a suggestion, and I presume they would not actually go after you in fully idiotic fashion here. But you never know.

The Week in Audio

Shane Legg talks to Dwarkesh Patel. Recommended for those thinking about alignment. Patel continues to impress as an interviewer, and especially impress on AI alignment. Shane Legg is friendly, is attempting to be helpful and is worried about AI killing everyone, but the solutions he proposes attempting (starting at ~19:00) seem even more doomed than usual?

Eliezer Yudkowsky: Welp, Shane either has no plan for inner alignment, or no plan he wants to defend on a podcast. Huge kudos to @dwarkesh_sp for repeatedly asking exactly the correct next question and not losing the plot; I think almost any other interviewer would have failed.

To be explicit, reasons for not wanting to defend a plan on a podcast include “this requires too many technical requisites and I despair of explaining it to your audience in ten minutes”.

That said, pointing to outer ethics and epistemic modeling issues is a distraction. Dwarkesh pierced right past that attempted distraction with exactly the correct follow-on questions about where the inner alignment technology would come from.

Dwarkesh also correctly wielded Hume’s Razor about what was capabilities vs. alignment. He correctly noted that technically sophisticated AI worriers are not worried that ASI will lack in capabilities. He correctly noticed that outputting human-wanted answers on a written ethics test has the capabilities-nature; a mind can ace that test given only an accurate model of reality and human psychology, regardless of what it wants inside. He correctly asked the key question of how you make a mind actually want and act according to the answers it gives on ethics tests.

This is the kind of cognitive feat an interviewer can only pull off if he has a causal model of where the alignment difficulties come from; rather than going on the shallow-embedding semantic vectors of words like ‘ethics’ to reason that, if an AI passes a written ethics test, that must’ve been alignment progress.

This puts Dwarkesh Patel far ahead of most leaders of “AI alignment” labs at the leading AI companies, never mind most podcast interviewers! Have some kudos, Dwarkesh.

I would yes-and Eliezer’s response, in that even if the AI was internally motivated to act ethically as reflected by the content of such tests that level of ethics, or the level of ordinary human ethics, does not get us where we need to go, I do not see this path working far enough up the capabilities chain even if it succeeds.

I’d also echo Eliezer that this does not mean that Legg does not have better answers and better ideas, and it certainly does not mean Google or DeepMind does not have better answers and ideas, in addition to this one. They are huge, they can contain multitudes, and the good ideas are at best explainable in the 4-hour-Dwarkesh-interview format rather than the 45-minute one here.

You know who else Dwarkesh Patel also interviews? Paul Christiano, for three hours. Highly self-recommending for those who want to go deep. I have been too busy to listen, and will do so when I can pay proper attention.

Interview with Nvidia CEO Jensuen Huang from a bit back. In this clip he says:

Jensen Huang: First of all, we have to keep AI safe. What is going to be sensible is human in the loop. The ability for an AI to self-learn should be avoided.

Clip of Demis Hassabis (1:30), saying it is good there is disagreement about AI and that we must proceed with cautious optimism in a responsible way. The question framing here is bizarre, describing ‘near term’ as being concerned with right now and the contrast being with the next wave of models coming out in the following year. The next year seems rather near term to me. There are arguments against ‘longtermism’ when it means distant galaxies but can we please have a non-hyperbolic discount rate?

EconEd features several past talks.

Rhetorical Innovation

Ben Thompson confirms that the CAIS letter was a big deal.

Gregory Allen: The other thing that’s happened that I do think is important just for folks to understand is, that Center for AI Safety letter that came out, that was signed by Sam Altman, that was signed by a bunch of other folks that said, “The risks of AI, including the risks of human extinction, should be viewed in the same light as nuclear weapons and pandemics.” The list of signatories to that letter was quite illustrious and quite long, and it’s really difficult to overstate the impact that that letter had on Washington, D. C. When you have the CEO of all these companies…when you get that kind of roster saying, “When you think of my technology, think of nuclear weapons,” you definitely get Washington’s attention.

Most of the post is a very negative reaction to the executive order, of the all-regulation-is-bad, all-AI-safety-concerns-are-motivated-by-regulatory-capture, no-one-can-know-the-future-so-we-need-no-precautions variety. I added further details to the reaction post here out of respect for the source.

Zack Davis offers Alignment Implications of LLM Successes: a Debate in One Act.

It is not that simple, also often it kind of is.

Elon Musk: The real battle is between the extinctionists and the humanists. Once you see it, you can’t unsee it.

Alternatively, Julian Hazell presents it as AI changing world versus not.

Julian Hazell: Behind many debates on AI risk exists the crux of whether you think the frontier models of the (near) future will be powerful enough to radically change the world. If you don’t, then this stuff sounds silly. But for clarity’s sake, it helps to make it clear that this is the crux.

I’m honestly not sure I’d support as strong regulation as I do if I didn’t think we are on the cusp of creating *extremely* powerful systems.

Another possible crux for those who do think we will create systems is whether you expect these systems to do what we want by default. It’s possible, but I’m pretty uncertain, and I think a prudent world would not bet the house on this being true.

IMO, the theoretical arguments *alone* are strong enough that it seems ludicrous for someone to have extreme confidence about the ease of creating powerful, aligned AI. (I don’t think they’re strong enough to motivate extreme confidence about the difficulty of alignment though).

I see this as two distinct questions. If you do not think AI is definitely capable of transforming the world any time soon, then the correct view is to see it as being like any other technology. It is a tool people can use, and mostly we should let people build it and use it and profit from it. If you do think AI is likely to soon transform the world, that AI is more than a tool, then we get to Musk’s question of whether or not you are a fan of the humans, along with questions about whether the humans are in danger.

Elon Musk: AI safety is vital to the future of civilization

Steak-umm: i can’t believe i’m saying this but i agree with elon

Eliezer Yudkowsky: You’re a sensible corporate meat shill and the sensible part is all that matters in the end.

Steak—umm: everyone shills now and then

James Phillips in Spectator argues why AI must be regulated. Main article is gated, his summary on Twitter suggests this is a solid coverage of traditional ground.

Daniel Faggella updates on what he believes other people believe.

Daniel Faggella: What I thought 6mo ago:

-AIrisk is blue (a1)

-e/acc is red (c3)

What I now know:

-AIrisk is yellow (a-b2)

-e/acc is red (c2)

AIriskers often accept eventual AI ascencion but don’t want to rush it dangerously.

e/acc-ers actually think happy humans can exist w/AI gods (silly)

(Note: I am sick and tired of the cognitive trick that the alternative to ‘let anyone who wants to risk killing everyone on the planet do so’ is called authoritarian, and the assumption it would imply some sort of oppressive or dictatorial state. It wouldn’t.)

Daniel Faggella: VERY few AIriskers I’ve talked to are actually in the “only humans forever!” camp. They see a progression, but don’t wanna force it.

VERY few e/acc-ers I’ve talked to really see much of a post-human future. Their futures are very human oriented. ^ BOTH of these shocked me.

For the record I’m full blown B3 and both yearn for posthumanism AND think that rushing AGI immediately is likely to have gigantic risks for making real, expansive posthuman life possible/likely…

The problem with C2, and the idea of a human-oriented future with uncontrolled ASIs, is not that it does not sound cool or even potentially glorious, it is that it is incoherent and does not make any sense. C2→C3. I appreciate the people who stand up and say C3 – they have their own section heading and catchphrase and everything – because they are facing down reality and expressing a preference. I disagree with that preference, and expect most of you do as well, and we can go from there. Whereas those who claim C2 is a thing seem to me to either be lying, fooling themselves, thinking quite poorly or most commonly not really thinking about how any of this works at all.

Periodic reminder department, ideally one last time?

Sherjil Ozair: “AI systems will not be able to violate the guardrails we set *by construction*”

“These systems will stay under our control because their primary objective will be to be subservient to us.”

If you find yourself making statements like this, I suggest finding someone nearby who is good at critical thinking and discussing these ideas with them.

These ideas are so naive and outdated that responding to them on Twitter will seem condescending. It’s similar to how no one bothers responding to claims that LLMs are stochastic parrots.

Please save us all the embarrassment.

Open Source AI is Unsafe and Nothing Can Fix This

A hackathon at MIT confirms that the cost of converting Llama-2 to ‘Spicy-Llama-2’ is about $200, after which it will happily walk you through synthesizing the 1918 flu virus. Without access to model weights, this is at least far more difficult and expensive. Nikki Teran has a thread summarizing.

Once again, this is not a solvable problem, except by not open sourcing model weights.

> be effective altruist

> fine-tune an open source LLM on papers about gain-of-function research

> holy shit, now my open source LLM knows the contents of these papers!

> obviously we should ban open source LLMs

> I mean what else could we even do

Yes, I see what else we could even do. So, OK, sure, I am all for be effective altruist, ban gain of function research, no papers on the internet show how to cause a pandemic, party where there is cake.

Then we can double check to see if it still only costs $200 to create Spicy-Llama-2.

Until then, the papers exist, they are available, anyone else could have done the same thing, I actually don’t see what else could we even do? Unless the plan is, be Meta, release open source LLMs, malicious actors fine-tune it for $200 and feed it some papers, they now know how to create pandemics?

And yes, if you were a sufficient domain expert who could parse such papers you could with more effort figure out all the same things. No one is saying Llama-2 is producing outputs here that expert humans could not produce. We are saying that this changes the expertise threshold from ‘there are select people who can do this with effort’ to ‘there are a lot of people who can do this faster and easier.’

In practice, this matters. Terrorist groups and apocalyptic cults have a practical history of seeking biological weapons and the ability to cause pandemics, and failing due to exactly this lack of technical expertise. The cults in Japan were discussed on a recent 80,000 hours podcast. To be concrete: So far, Hamas has not to our knowledge used biological weapons or attempted to cause a pandemic. Was that because they would not do such a thing? Or was that lack of practical capability?

Aligning a Smarter Than Human Intelligence is Difficult

What would be worse than the AI telling people what they want to hear? Doing this while the AI pretends not to be doing it.

Fabien Roger (Redwood Research): Could language models hide thoughts in plain text?

We give the first demonstration of a language model getting higher performance by using encoded reasoning, aka steganography!

This would be dangerous if it happened in the wild, so we evaluate defenses against it.

I don’t know that this particular example worries me. Telling someone they are a liberal isn’t telling them what they want to hear so much as stating a true implication of their statement based on their dislike of Tricky Dick.

The principle still stands. If there are thoughts the model wants to think with CoT without us noticing, it could gain the ability to do that.

Fabien Roger: In our demo, we fine-tune ChatGPT to either give the sycophantic answer directly, or after a Chain-of-Thought (CoT), or after an encoded CoT— a benign-seeming sentence placed in 1:1 correspondence with a CoT. The encoded CoT beats the direct answer while remaining hidden!

This illustrates that there can be incentives for steganography; if human labelers reward ideologically conformist answers but punish transparently sycophantic reasoning, models may still learn to perform such reasoning in coded language to determine the conformist answer.

Because we trained the models to use a specific encoding, our experiments don’t show that real training runs teach language models steganography. But the incentives are there, so such reasoning is worth monitoring for and defending against.

To prepare defenses against steganography, we test defenses (such as paraphrasing CoT) against the best steganography schemes we could build. Paraphrasing is great at removing steganography! We couldn’t get more than 3 bits / KB of generated text through the paraphraser.

Without good countermeasures, tomorrow’s language models might use steganographically-hidden CoT to think for a long time about considerations we don’t approve of.

Steganography would be a very dangerous capability for language models to have, and we are excited for more work improving upon the techniques and datasets we’ve studied!

Traditional alignment problems, including traditional human alignment problems.

If you crack down on visible X, but X gets better results, you likely get hidden X.

I would generalize the implications here. If you use RLHF or other similarly flawed feedback, the AI will adapt to the exact things that trigger the feedback. You will get situations like this, where that which you don’t want to see is hidden, and that which you want to see is presented whether or not it is then used or meaningful in any way.

If the users that provide the feedback that counts want to be told they are a liberal, the AI will learn to tell them, whether or not that helps it reason. If those users punish such declarations, the AI learns to not give them. Except the fully general version of that. And over time, it will do this with a much better understanding of what is actually determining our decisions than we ourselves have, and an utter indifference to whether this corresponds to anything we would or should endorse.

Roon: what if the character the LLM plays is the primary moral agent that needs to be aligned? maybe we should have a lot more people thinking about character design? model behavior? personality? all of the glorious shortcomings of miss Sidney Bing were coded right there in her prompt

It is a strategy one could pursue, to forgo inner alignment. Instead of aligning the core LLM, tell the LLM to play an aligned character, and have it aligned to the playing of characters on request, and ensure no one ever asks an AI to play a different character. For multiple different technical reasons I despair of this actually working when it matters in way that results in us not being dead, even if you ‘pulled it off,’ and would expect most alignment researchers to agree.

People Are Worried About AI Killing Everyone

I am considering a new polling segment called ‘Well When You Put It That Way.’

Shakeel Hashim: In a new YouGov poll of the British public, 83% of people said that governments should require AI companies to prove their AI models are safe before releasing them.

The representative poll, commissioned by the AI Safety Communications Centre, also found that 46% of Brits want the UK government to do more to tackle the potential risks of artificial intelligence. Only 3% want the government to do less.

Meanwhile, 58% want governments to provide oversight of the development of smarter-than-human AI — the stated goal of leading AI companies like OpenAI and DeepMind.

As countries come together at the international safety summit, the public also called on all countries to work together on developing AI regulation — going as far as to encourage such cooperation even with countries that may be seen as hostile. 42% of respondents called for this cooperation; rising to 51% when excluding “don’t knows”. 32% said they’d prefer the UK only work with allies.

I do find it disappointing, but unsurprising, that working with non-allies is not yet more popular.

Please Speak Directly Into This Microphone

The most honest reaction to the Executive Order, or any move at all to do anything about the fact that AI might not be an unadulterated good or even might, ya know, kill everyone, is that you/we are trying to build AGI as fast as possible, how dare the government want you not to do that, this order might interfere with you building AGI as fast as possible.

You could use this moment to take the mask off completely, or to be fair to representative member of this group Aravind Srinivas you could be like him and never put one on in the first place.

Aravind Srinivas (CEO and Cofounder, Perplexity AI): Imagine explaining to Kamala Harris where the 6 in GPT FLOPs calculated as 6 * param_count * token_count comes from, or how would fine-tuning FLOPs add on to a pre-trained “unregulated” ckpt. And wasting time on all this instead of actually building AGI.

Yes! He admit it. The whole point of (these parts of) the executive order is that you fools are rushing to build AGI as fast as possible. As much as we admire your current product lines, we would like you to not do that, sir.

This seems to reflect the extent to which this attitude cares about the fate of humanity:

Aravind Srinivas (Oct 26, apropos of nothing): Google Search won’t die. Just like horses didn’t disappear once we had cars. Just that the value of the traffic will decline.

Or this, where he seems to be taking a bold ‘stop being a little bitch about the costs of war and get back to killing people’ stance as a metaphor for being against calls for safety and alignment?

ib: My favorite part of the Bhagavad Gita is when Arjuna mopes about the costs war for several stanzas and then Krishna tells him he’s being a little bitch

Aravind Srinivas: Is it just me, or Arjuna sounds like the LLM Safety and Alignment folks?

In addition to my human extinction is bad stance, I am going to also take a bold war is bad stance. I’d say fight me, but I’d rather you didn’t.

And in case you were wondering if his aim is recursive self-improvement and fast takeoff?

Aravind Srinivas: what we have today is turn data into compute. what we will have tomorrow is turn compute into data (and then the resulting data back into compute, and repeat).

The Lighter Side

New cause area, give positive reinforcement when Roon writes bangers.

Roon: tbh for a given week i can either have insightful tweets or work really hard on the most important product ever made but not both.

xlr8harder: You can tell OpenAi’s speech to text system Whisper is trained on YouTube, because anytime there is quiet or ambiguous input it thinks the most likely text is “thank you for watching!”

ChatGPT attempts to ensure we think about The Roman Empire:

Finally, common ground: Cake! Delicious cake.

If you did not anticipate this, you need to up your alignment game.

I don’t think this is about the difficulties of communication (the effect persists even if you do a vivid real-time demonstration!) or about the “normal people” deliberately going in with the mindset of not being impressed. I think it’s just flatly because they don’t have a reference frame for grokking why this is supposed to be impressive.

It’s particularly clear in the grandfather example. The man probably has no idea how any of the current technology works, it’s all black-box magic to him. Why should “the box is able to generate a picture ex nihilo given a natural-language description of it” parse as significantly more impressive to him than “the box is able to find any picture on the internet given its annotation”? Given a gears-level model of things, it’s obvious to us; lacking such a model, the impressiveness is just an informed attribute.

Similar is in effect with non-tech-savvy younger people. They don’t think about this stuff much.

Similar is in effect with you, when it comes to some field you’re not familiar with at all. If there was some amazing breakthrough in e. g. seismography (or linen manufacture, or galaxy-evolution modeling), and you were presented with two papers — one outlining it and one that’s just a completely ordinary paper in the field — how would you tell them apart? (Besides judging the excitement with which the text is written and such.)

Relevant XKCD.

Definitely good to keep this in mind, but to me some of this stuff seems obviously super impressive even if you do not know the technical details. Generating complex rich pictures on demand that mostly match requested details not being impressive doesn’t parse for me.

It parses for me; pretty sure a lot of people just don’t see why that is impressive, and I can model their mental state. (As the XKCD alt-text notes, last century a lot of specialists thought such problems were tractable for a small group of people working for a few months. The specialists have grown wiser since then, but who’s to say such understanding percolated to everyone else?)

But I suppose there is another component to this: whether you find something viscerally impressive depends on whether you think it’s actually cool or useful. We here have dispositions such as “technology is cool/powerful/dangerous!”, so we’re very impressed and excited to see technological breakthroughs. A lot of people don’t; they don’t immediately see the implications, don’t care that a major problem was solved, so it just looks like, say, nerds being excited by irrelevant nerd stuff.

By analogy, again, imagine that you were informed that some theorem in an obscure mathematical field far from any practical applications was solved after decades of work. Even if you grok why it was so difficult, would you be excited by these news? (Or maybe “we’ve finally found this obscure species of moss long thought extinct!” or “we’ve improved the technique for filtering clay water by 1%!”.)

I agree up until you say “It wouldn’t.” The severity of governmental regulations varies with the difficulty of alignment, the difficulty of creating AGI and some other factors. In a Yudkowskian world, you’d have to have sever control over the compute supply, and distribution, chain, as well as on AI research, in order to be pretty confident we’d avert x-risks.

That said, there are relatively light touches which would improve our log odds by quite a bit under a broad range of views. And according to my model, there are interventions that would reduce P(doom) by like, a third, maybe even a half e.g. anualy-decreasing compute threshold, tracking chips at the hardware level, restrictions on what random biochemicals you can order synthesized without any checks etc. These are fairly restrictive, but not that onerous.

EDIT: I just realized that your statement is compatible with what I said.

I continue to be confused about this if they managed to undo all of the safeguards with $200 worth of compute. Does that mean Meta spent $200 in compute to install their safeguards? Perhaps naively, I thought it would take as much compute to undo some training as it would to do it in the first place. Unless, of course, they had access to the base-model. In which case, it would be trivial to undo the fine-tuning.

Yep, as the edit says I don’t think we disagree on the first point—there are versions that are oppressive, but also versions that are not that still have large positive effects.

On the second point, I believe this is because it is much harder to introduce safeguards than to remove them, because removing them is a highly blunt target, whereas good safeguards have to be detailed to avoid false positives (which Llama-2 did not do a good job avoiding, but they did try). This is the key asymmetry here, the amount Meta (or anyone else) spends tuning does not help here.