I believe there are two phenomena happening during training:

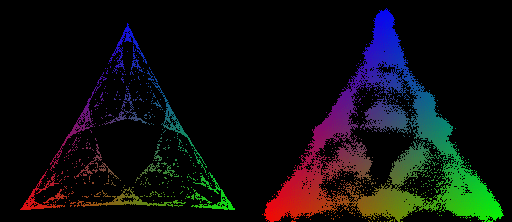

1. Predictions corresponding to the same stable region become more similar, i.e. stable regions become more stable. We can observe this in the animations.
2. Existing regions split, resulting in more regions.
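One way to put numbers on these two phenomena across checkpoints is sketched below. This is a minimal illustration under stated assumptions, not the method behind the animations. It assumes each prompt's prediction is a vector (e.g. next-token probabilities), that region labels per prompt are available for measuring within-region similarity, and that "regions" can be approximated as connected components of a cosine-similarity graph at a chosen threshold. The function names `within_region_similarity` and `count_regions` and the threshold value are hypothetical.

```python
import math
from itertools import combinations


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def within_region_similarity(preds, labels):
    """Hypothetical metric for phenomenon 1: mean pairwise cosine similarity
    between predictions whose prompts share a region label."""
    sims = [
        cosine(preds[i], preds[j])
        for i, j in combinations(range(len(preds)), 2)
        if labels[i] == labels[j]
    ]
    return sum(sims) / len(sims) if sims else float("nan")


def count_regions(preds, threshold=0.95):
    """Hypothetical metric for phenomenon 2: number of connected components
    when predictions with cosine >= threshold are linked (union-find)."""
    parent = list(range(len(preds)))

    def find(x):
        # Path halving keeps the trees shallow.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for i, j in combinations(range(len(preds)), 2):
        if cosine(preds[i], preds[j]) >= threshold:
            parent[find(i)] = find(j)
    return len({find(i) for i in range(len(preds))})


# Toy, made-up predictions for four prompts at two checkpoints.
early = [[1.0, 0.2], [0.9, 0.4], [0.2, 1.0], [0.4, 0.9]]
late = [[1.0, 0.05], [1.0, 0.0], [0.0, 1.0], [0.05, 1.0]]
labels = [0, 0, 1, 1]  # assumed region assignment per prompt

print(within_region_similarity(early, labels), within_region_similarity(late, labels))
print(count_regions(early), count_regions(late))
```

In this toy data the later checkpoint has higher within-region similarity, which is the signature of the first phenomenon; a split would show up as `count_regions` increasing between checkpoints. The threshold matters a lot for the second metric, so in practice one would want to check that any increase holds over a range of thresholds.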

I hypothesize that:

1. The first could be some kind of error correction: models learn to rectify errors coming from superposition interference or other kinds of noise.
2. The second could be interpreted as more capable models picking up on subtler differences between the prompts and adjusting their predictions accordingly.

I’m not familiar with this interpretation. Here’s what Claude has to say about it (correct about stable regions, but possibly hallucinating about Hopfield networks):