Just FYI, TT, please keep telling people about value sharding! Telling people about working solutions to alignment subproblems is a really good thing!!

bigbird

Ah, that wasn’t my intention at all!

A side-lecture Keltham gives in Eliezer’s story reminds me of some interactions I’d have with my dad as a kid. We’d be playing baseball, and he’d try to teach me some mechanical motion, and if I didn’t get it or seemed bored he’d say “C’mon ${name}, it’s physics! F=ma!”

Different AIs built and run by different organizations would have different utility functions and may face equal competition from one another; that’s fine. My problem is the part after that where he implies (says?) that the Google StockMaxx AI supercluster would face stiff competition from the humans at FBI & co.

[Removed, was meant to be nice but I can see how it could be taken the other way]

I think it’d be good to get these people who dismiss deep learning to explicitly state whether the only thing keeping us from imploding is their field’s inability to solve a core problem it’s explicitly trying to solve. In particular, it seems weird to answer a question like “why isn’t AI X-risk a problem” with “because the ML industry is failing to barrel towards that target fast enough”.

I am slightly baffled that someone who has lucidly examined all of the ways in which corporations are horribly misaligned and principal-agent problems are everywhere does not see the irony in saying that managing/regulating/policing those corporations will be similar to managing an AI supercluster totally united by the same utility function.

Why not also have author names at the bottom, while you’re at it?

too radical

The craziest part of being a rationalist is regularly reading completely unrelated technical content, thinking “this person seems lucid”, then going to their blog and seeing that they are Martin Sustrik.

peaceful protest of the acceleration of agi technology without an actually specific written & coherent plan for what we will do when we get there

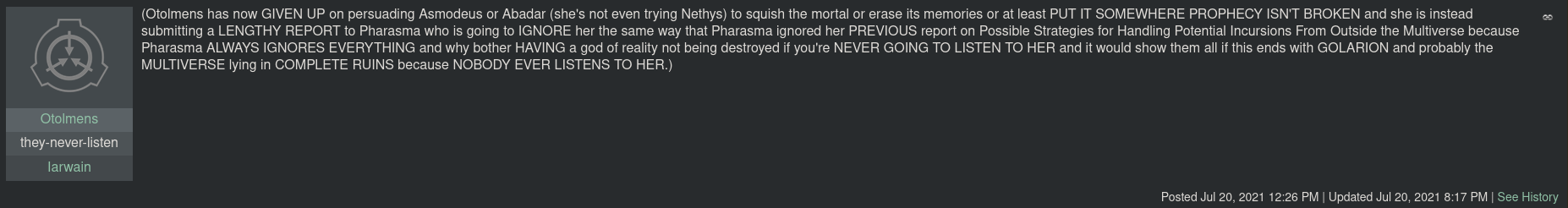

Seriously this is the funniest shit

Nothing Yudkowsky has ever done has impressed me as much as noticing the timestamps on the Mad Investor Chaos glowfic. My peewee brain is in shock.

How much coordination went on behind the scenes to get the background understanding of the world? Do they list out plot points and story beats before each session? What proportion of what I’m seeing is railroaded vs. made up on the spot? I really wish I had these superpowers, damnit.

bigbird’s Shortform

You went from saying that telling the general public about the problem is net negative to saying that it has an opportunity cost, and that there are probably unspecified better things to do with your time. I don’t disagree with the latter.

One reason you might be in favor of telling the larger public about AI risk absent a clear path to victory is that it’s the truth, and even regular people that don’t have anything to immediately contribute to the problem deserve to know if they’re gonna die in 10-25 years.

This is just “are you a good person” with few or no subtle twists, right?