Rational Animations’ main writer and helmsman

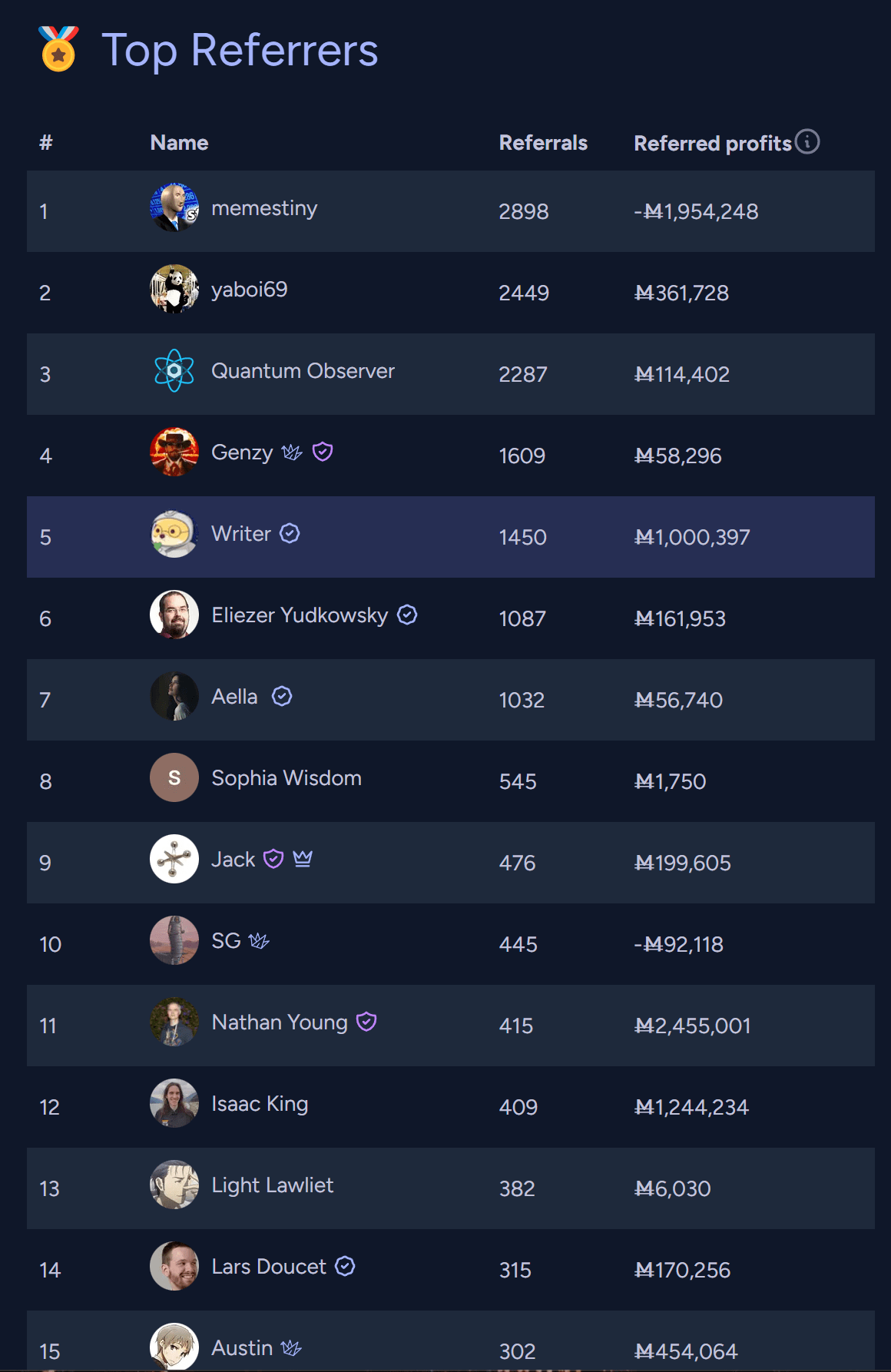


Update:

I think it would be very interesting to see you and @TurnTrout debate with the same depth, preparation, and clarity that you brought to the debate with Robin Hanson.

Edit: Also, tentatively, @Rohin Shah because I find this point he’s written about quite cruxy.

For me, perhaps the biggest takeaway from Aschenbrenner’s manifesto is that even if we solve alignment, we still have an incredibly thorny coordination problem between the US and China, in which each is massively incentivized to race ahead and develop military power using superintelligence, putting them both and the rest of the world at immense risk. And I wonder if, having seen this in advance, we can sit down and solve this coordination problem in ways that are more likely to lead to a good outcome than the “race ahead” strategy, and that don’t risk a short period of extreme geopolitical instability in which both nations develop and possibly use never-before-seen weapons of mass destruction.

Edit: although I can see how attempts at intervening in any way and raising the salience of the issue risk making the situation worse.

Noting that additional authors still don’t carry over when the post is a cross-post, unfortunately.

I’d guess so, but with AGI we’d go much, much faster. The same goes for everything else you’ve mentioned in the post.

Turn everyone hot

If we can do that due to AGI, almost surely we can solve aging, which would be truly great.

Looking for someone in Japan who had experience with guns in games, he looked on twitter and found someone posting gun reloading animations

Having interacted with animation studios and being generally pretty embedded in this world, I know that many studios are doing similar things, such as Twitter callouts if they need some contractors fast for some projects. Even established anime studios do this. I know at least two people who got to work on Japanese anime thanks to Twitter interactions.

I hired animators through Twitter myself, using a similar process: I see someone who seems really talented → I reach out → they accept if the offer is good enough for them.

If that’s the case for animation, I’m pretty sure it often applies to video games, too.

Thank you! And welcome to LessWrong :)

The comments under this video seem okayish to me, but maybe it’s because I’m calibrated on worse stuff under past videos, which isn’t necessarily very good news to you.

The worst I’m seeing is people grinding their own different axes, which isn’t necessarily indicative of misunderstanding. But there are also regular commenters who are leaving pretty good comments:

The other comments I see range from amused and kinda joking about the topic to decent points overall. These are the top three in terms of popularity at the moment:

Stories of AI takeover often involve some form of hacking. This seems like a pretty good reason for using (maybe relatively narrow) AI to improve software security worldwide. Luckily, the private sector should cover much of this out of financial interest.

I also wonder if the balance of offense vs. defense favors defense here. Usually, recognizing is easier than generating, and this could apply to malicious software. We may have excellent AI antiviruses devoted to the recognizing part, while the AI attackers would have to do the generating part.

[Edit: I’m unsure about the second paragraph here. I’m feeling better about the first paragraph, especially given slow multipolar takeoff and similar, not sure about fast unipolar takeoff]

Also I don’t think that LLMs have “hidden internal intelligence”

I don’t think Simulators claims or implies that LLMs have “hidden internal intelligence” or “an inner homunculus reasoning about what to simulate”, though. Where are you getting it from? This conclusion makes me think you’re referring to this post by Eliezer and not Simulators.

Yoshua Bengio is looking for postdocs for alignment work:

I am looking for postdocs, research engineers and research scientists who would like to join me in one form or another in figuring out AI alignment with probabilistic safety guarantees, along the lines of the research program described in my keynote (https://www.alignment-workshop.com/nola-2023) at the New Orleans December 2023 Alignment Workshop.

I am also specifically looking for a postdoc with a strong mathematical background (ideally an actual math or math+physics or math+CS degree) to take a leadership role in supervising the Mila research on probabilistic inference and GFlowNets, with applications in AI safety, system 2 deep learning, and AI for science.

Please contact me if you are interested.

i think about this story from time to time. it speaks to my soul.

it is cool that straight-up utopian fiction can have this effect on me.

it yanks me into a state of longing. it’s as if i lost this world a long time ago, and i’m desperately trying to regain it.

i truly wish everything will be ok :,)

thank you for this, tamsin.

Here’s a new RA short about AI Safety: https://www.youtube.com/shorts/4LlGJd2OhdQ

This topic might be less relevant given today’s AI industry and the fast advancements in robotics. But I also see shorts as a way to cover topics that I still think constitute fairly important context but that, for one reason or another, wouldn’t be the most efficient use of resources to cover in long-form videos.

The way I understood it, this post is thinking aloud while embarking on the scientific quest of searching for search algorithms in neural networks. It’s a way to prepare the ground for doing the actual experiments.

Imagine a researcher embarking on the quest of “searching for search”. I highlight in italics the parts present in the post (if they are present at least a little):

- At some point, the researcher reads Risks From Learned Optimization.

- They complain: “OK, Hubinger, fine, but you haven’t told me what search is anyway”

- They read or get involved in the discussions about optimization that ensue on LessWrong.

- They try to come up with a good definition, but they find themselves with some difficulties.

- They try to understand what kind of beast search is by coming up with some general ways to do search.

- They try to determine what kind of search neural networks might implement. They use a bunch of facts they know about search processes and neural networks to come up with ideas.

- They try to devise ways to test these hypotheses and even a meta “how do I go about forming and testing these hypotheses anyway.”

- They think of a bunch of experiments, but they notice pitfalls. They devise strategies such as “ok; maybe it’s better if I try to get a firehose of evidence instead of optimizing too hard on testing single hypotheses.”

- They start doing actual interpretability experiments.

Having the reasoning steps in this process laid out for everyone to see is informative and lets people chime in with ideas. Not going through the conceptual steps of the process, at least privately, before doing experiments, risks wasting a bunch of resources. Exploring the space of problems is cool and good.

This recent Tweet by Sam Altman lends some more credence to this post’s take:

RA has started producing shorts. Here’s the first one using original animation and script: https://www.youtube.com/shorts/4xS3yykCIHU

The LW short-form feed seems like a good place for posting some of them.

In this post, I appreciated two ideas in particular:

- Loss as chisel

- Shard Theory

“Loss as chisel” is a reminder of how loss truly does its job, and of its implications for what AI systems may actually end up learning. I can’t really argue with it and it doesn’t sound new to my ear, but it just seems important to keep in mind. Alone, it justifies trying to break out of the inner/outer alignment frame. When I start reasoning in its terms, I more easily appreciate how successful alignment could realistically involve AIs that are neither outer nor inner aligned. In practice, it may be unlikely that we get a system like that. Or it may be very likely. I simply don’t know. Loss as a chisel just enables me to think better about the possibilities.

In my understanding, shard theory is, instead, a theory of how minds tend to be shaped. I don’t know if it’s true, but it sounds like something that has to be investigated. In my understanding, some people consider it a “dead end,” and I’m not sure if it’s an active line of research or not at this point. My understanding of it is limited. I’m glad I came across it though, because on its surface, it seems like a promising line of investigation to me. Even if it turns out to be a dead end I expect to learn something if I investigate why that is.

The post makes more claims motivating its overarching thesis that dropping the frame of outer/inner alignment would be good. I don’t know if I agree with the thesis, but it’s something that could plausibly be true, and many arguments here strike me as sensible. In particular, the three claims at the very beginning proved to be food for thought to me: “Robust grading is unnecessary,” “the loss function doesn’t have to robustly and directly reflect what you want,” “inner alignment to a grading procedure is unnecessary, very hard, and anti-natural.”

I also appreciated the post trying to make sense of inner and outer alignment in very precise terms, keeping in mind how deep learning and reinforcement learning work mechanistically.

I had an extremely brief irl conversation with Alex Turner a while before reading this post, in which he said he believed outer and inner alignment aren’t good frames. It was a response to me saying I wanted to cover inner and outer alignment on Rational Animations in depth. RA is still going to cover inner and outer alignment, but as a result of reading this post and the Training Stories system, I now think we should definitely also cover alternative frames and that I should read more about them.

I welcome corrections of any misunderstanding I may have of this post and related concepts.

Maybe obvious sci-fi idea: generative AI, but it generates human minds

The two Gurren Lagann movies cover all the events in the series, and based on my recollection, they should be better animated. Still based on what I remember, the first should have a pretty central take on scientific discovery. The second should be more about ambition and progress, but both probably have at least a bit of both. It’s not by chance that some e/accs have profile pictures inspired by that anime. I feel like people here might disagree with part of the message, but I think it does say something about issues we care about here pretty forcefully. (Also, it was cited somewhere in HP: MoR, but for humor.)