Rational Animations’ main writer and helmsman

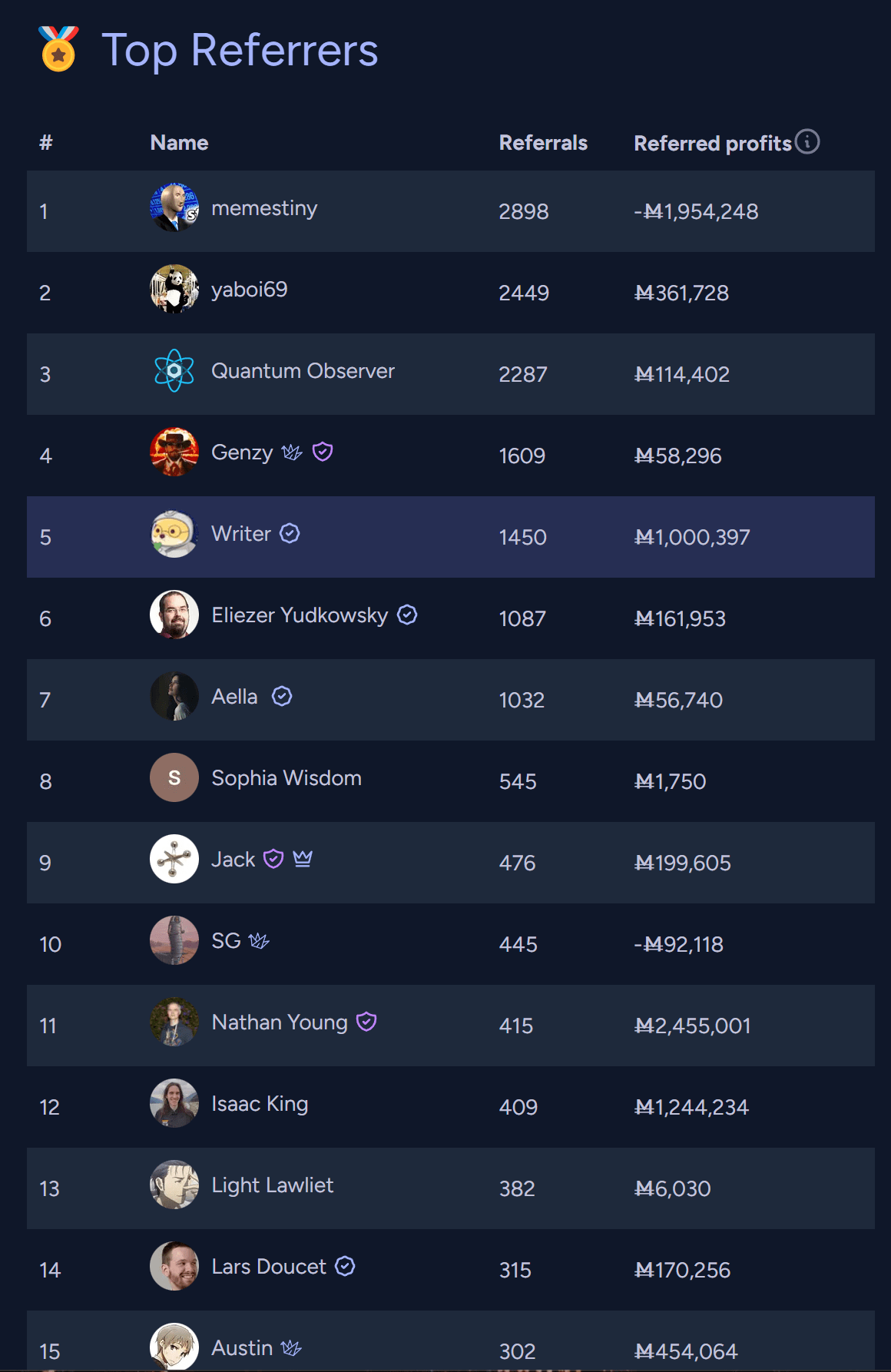

Writer

It’s true that a video ending with a general “what to do” section instead of a call-to-action to ControlAI would have been more likely to stand the test of time (it wouldn’t be tied to the reputation of one specific organization or to how good a specific action seemed at one moment in time). But… did you write this because you have reservations about ControlAI in particular, or would you have written it about any other company?

Also, I want to make sure I understand what you mean by “betraying people’s trust.” Is it something like: “If ControlAI does something bad in the future, then, from our viewers’ POV, that means they can’t trust what they watch on the channel anymore”?

RA x ControlAI video: What if AI just keeps getting smarter?

But the “unconstrained text responses” part is still about asking the model for its preferences even if the answers are unconstrained.

That just shows that the results of different ways of eliciting its values remain sorta consistent with each other, although I agree it constitutes stronger evidence.

Perhaps a more complete test would be to analyze whether its day-to-day responses to users are somehow consistent with its stated preferences, and to analyze its actions in settings where it can use tools to produce outcomes in very open-ended scenarios containing things that could make the model act on its values.

Thanks! I already feel less impressed by the paper than I was while writing the shortform, and I’m a little embarrassed for not thinking things through a bit more before posting my reactions. At least now there’s some discussion under the linkpost, so I don’t entirely regret my comment if it prompted people to give their takes. I still feel I’ve updated in a non-negligible way from the paper, though, so maybe I’m still not as pessimistic about it as other people. I’d definitely be interested in your thoughts if you find the discourse is still lacking in a week or two.

I’d guess an important caveat might be that stated preferences being coherent doesn’t immediately imply that behavior in other situations will be consistent with those preferences. Still, this should be an update towards agentic AI systems in the near future being goal-directed in the spooky consequentialist sense.

Why?

Surprised that there’s no linkpost about Dan H’s new paper on Utility Engineering. It looks super important, unless I’m missing something. LLMs are now utility maximisers? For real? We should talk about it: https://x.com/DanHendrycks/status/1889344074098057439

I feel weird about doing a link post since I mostly post updates about Rational Animations, but if no one does it, I’m going to make one eventually.

Also, please tell me if you think this somehow isn’t as important as it looks to me.

EDIT: Ah! Here it is! https://www.lesswrong.com/posts/SFsifzfZotd3NLJax/utility-engineering-analyzing-and-controlling-emergent-value thanks @Matrice Jacobine!

Can Knowledge Hurt You? The Dangers of Infohazards (and Exfohazards)

Our new video about goal misgeneralization, plus an apology

The two Gurren Lagann movies cover all the events in the series, and, as far as I recall, they should be better animated. Again going from memory, the first should center on scientific discovery, while the second should be more about ambition and progress, though both probably touch on each theme at least a bit. It’s not by chance that some e/accs have profile pictures inspired by that anime. I feel like people here might disagree with part of the message, but I think it speaks pretty forcefully to issues we care about here. (Also, it was cited somewhere in HP: MoR, but for humor.)

The King and the Golem—The Animation

Update:

That Alien Message—The Animation

I think it would be very interesting to see you and @TurnTrout debate with the same depth, preparation, and clarity that you brought to the debate with Robin Hanson.

Edit: Also, tentatively, @Rohin Shah because I find this point he’s written about quite cruxy.

The world is awful. The world is much better. The world can be much better: The Animation.

Rational Animations’ intro to mechanistic interpretability

For me, perhaps the biggest takeaway from Aschenbrenner’s manifesto is that even if we solve alignment, we still face an incredibly thorny coordination problem between the US and China, in which each is massively incentivized to race ahead and develop military power using superintelligence, putting both of them and the rest of the world at immense risk. And I wonder whether, having seen this in advance, we can sit down and solve this coordination problem in ways that are more likely than the “race ahead” strategy to lead to a better outcome, and that don’t risk a short period of incredibly volatile geopolitical instability in which both nations develop and possibly use never-before-seen weapons of mass destruction.

Edit: Although I can see how any attempt to intervene, or to raise the salience of the issue, risks making the situation worse.

That’s fair. We wrote that part before DeepSeek became a “top lab,” and we failed to notice there was an adjustment to make.