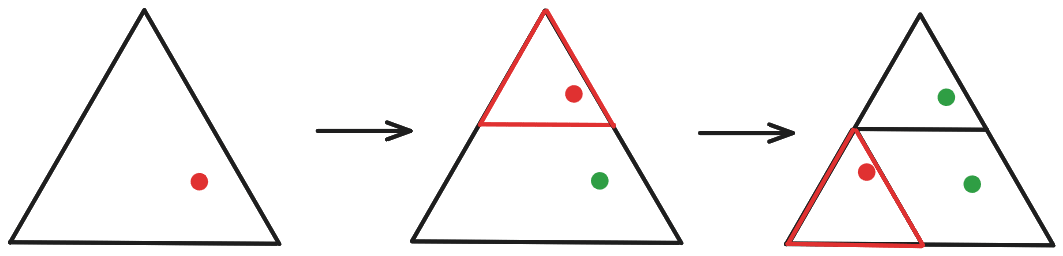

I think you’re imagining that we modify the shrink-and-reposition functions each iteration, lowering their scope? That is, that if we picked the topmost triangle in the first iteration, then in the second iteration we pick one of the three sub-triangles making up the topmost triangle, rather than one of the “highest-level” sub-triangles?

Something like this:

If we did it this way, then yes, we’d eventually end up jumping around an infinitesimally small area. But that’s not how it works. We always pick one of the highest-level sub-triangles:

Note also that we take in the “global” coordinates of the point we shrink-and-reposition (i.e., its position within the whole triangle), rather than its “local” coordinates (i.e., its position within the sub-triangle to which it was copied).
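To make the difference concrete, here is a minimal Python sketch. It assumes an equilateral triangle with made-up coordinates (`VERTICES`), and the helper names (`shrink_and_reposition`, `chaos_game`, `nested_game`, `x_spread`) are hypothetical, chosen for illustration only. `chaos_game` always chooses among the same three top-level maps and applies them to the point's global coordinates; `nested_game` implements the mistaken reading, where each iteration may only choose among the sub-triangles of the previously chosen one.

```python
import random

# Hypothetical coordinates for the whole triangle (equilateral, side 1).
VERTICES = [(0.0, 0.0), (1.0, 0.0), (0.5, 3 ** 0.5 / 2)]
CENTROID = (0.5, 3 ** 0.5 / 6)


def shrink_and_reposition(point, corner):
    """Move a point halfway toward `corner`.

    With `corner` set to one of VERTICES, this shrinks the whole triangle
    by 1/2 and places the copy in that corner of the whole triangle.
    """
    (x, y), (cx, cy) = point, corner
    return ((x + cx) / 2, (y + cy) / 2)


def chaos_game(n_steps, seed=0):
    """How it actually works: the same three top-level maps every iteration."""
    rng = random.Random(seed)
    point = CENTROID
    points = []
    for _ in range(n_steps):
        # Always choose among the three highest-level sub-triangles,
        # and act on the point's global coordinates.
        point = shrink_and_reposition(point, rng.choice(VERTICES))
        points.append(point)
    return points


def nested_game(n_steps, seed=0):
    """The mistaken reading: the choice narrows to the previous sub-triangle."""
    rng = random.Random(seed)
    corners = list(VERTICES)  # corners of the triangle currently "in scope"
    point = CENTROID
    points = []
    for _ in range(n_steps):
        corner = rng.choice(corners)
        point = shrink_and_reposition(point, corner)
        # Next iteration may only pick sub-triangles of the one just chosen.
        corners = [shrink_and_reposition(c, corner) for c in corners]
        points.append(point)
    return points


def x_spread(points):
    """Width of the horizontal range covered by the given points."""
    xs = [x for x, _ in points]
    return max(xs) - min(xs)


if __name__ == "__main__":
    # The chaos game keeps visiting the whole triangle, even late on...
    print(x_spread(chaos_game(20_000)[-5000:]))
    # ...while the nested version is trapped in a triangle of side 2**-n.
    print(x_spread(nested_game(50)[-10:]))
```

Running it should show a spread close to the full width of the triangle for the chaos game, and a vanishingly small spread for the nested version: that shrinking region is the “infinitesimally small area” you’d get if the scope narrowed each iteration.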

Here’s a (slightly botched?) video explanation.

That’s good news.

There was a brief moment, back in 2023, when OpenAI’s actions made me tentatively optimistic that the company was actually taking alignment seriously, even if its model of the problem was broken.

Everything that has happened since then has made it clear that this is not the case; that all these big flashy commitments like Superalignment were just safety-washing and virtue signaling. They were only going to do alignment work inasmuch as that didn’t interfere with racing full-speed towards greater capabilities.

So these resignations don’t negatively impact my p(doom) in the obvious way. The alignment people at OpenAI were already powerless to change the company’s direction in any useful way.

On the other hand, what these resignations do is showcase that fact. Inasmuch as Superalignment was a virtue-signaling move meant to paint OpenAI as caring deeply about AI Safety, the fact that so many of the people working on it resigned or were fired starkly signals the opposite.

And it’s good to have that more in the open; it’s good that OpenAI loses its pretense.

Oh, and it’s also good that OpenAI is losing talented engineers, of course.