In the day I would be reminded of those men and women,

Brave, setting up signals across vast distances,

Considering a nameless way of living, of almost unimagined values.

Emrik

He linked his extensive research log on the project above, and has made LW posts of some of their progress. That said, I don’t know of any good legible summary of it. It would be good to have. I don’t know if that’s one of Johannes’ top priorities, however. It’s never obvious from the outside what somebody’s top priorities ought to be.

Surely you could work for free as an engineer at an AI alignment org or something and then shift into discussions w/ them about alignment?

To be clear: his motivation isn’t “I want to contribute to alignment research!” He’s aiming to actually solve the problem. If he works as an engineer at an org, he’s not pursuing his project, and he’d be approximately 0% as usefwl.

I strongly endorse Johannes’ research approach. I’ve had 6 meetings with him, and have read/watched a decent chunk of his posts and YT vids. I think the project is very unlikely to work, but that’s true of all projects I know of, and this one seems at least better than almost all of them. (Reality doesn’t grade on a curve.)

Still, I really hope funders would consider funding the person instead of the project, since I think Johannes’ potential will be severely stifled unless he has the opportunity to go “oops! I guess I ought to be doing something else instead” as soon as he discovers some intractable bottleneck wrt his current project. He’s literally the person I have the most confidence in when it comes to swiftly changing path to whatever he thinks is optimal, and it would be a real shame if funding gave him an incentive to not notice reasons to pivot. (For more on this, see e.g. Steve’s post.)

I realize my endorsement doesn’t carry much weight for people who don’t know me, and I don’t have much general clout here, but if you’re curious here’s my EA forum profile and twitter. On LW, I’m mostly these users {this, 1, 2, 3, 4}. Some other things which I hope will nudge you to take my endorsement a bit more seriously:

I’ve been working full-time on AI alignment since early 2022.

I rarely post about my work, however, since I’m not trying to “contribute”—I’m trying to do.

EA has been my top life-priority since 2014 (I was 21).

I’ve read the Sequences in their entirety at least once. (Low bar, but worth mentioning.)

I have no academic or professional background because I’m financially secure with disability money. This means I can spend 100% of my time following my own sense of what’s optimal for me without having to take orders or produce impressive/legible artifacts.

I think Johannes will be much more effective if he has the same freedom, and is not tied to any particular project. I really doubt anyone other than him will be better able to evaluate what the optimal use of his time is.

Edit: I should mention that Johannes hasn’t prompted me to say any of this. I took noticed him due to the candour of his posts and reached out by myself a few months ago.

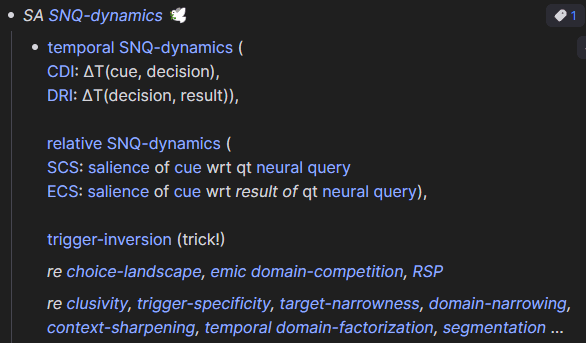

Epic Lizka post is epic.

Also, I absolutely love the word “shard” but my brain refuses to use it because then it feels like we won’t get credit for discovering these notions by ourselves. Well, also just because the words “domain”, “context”, “scope”, “niche”, “trigger”, “preimage” (wrt to a neural function/policy / “neureme”) adequately serve the same purpose and are currently more semantically/semiotically granular in my head.

trigger/preimage ⊆ scope ⊆ domain

“niche” is a category in function space (including domain, operation, and codomain), “domain” is a set.

“scope” is great because of programming connotations and can be used as a verb. “This neural function is scoped to these contexts.”

Aaron Bergman has a vid of himself typing new sentences in real-time, which I found really helpfwl.[1] I wish I could watch lots of people record themselves typing, so I could compare what I do.

Being slow at writing can be sign of failure or winning, depending on the exact reasons why you’re slow. I’d worry about being “too good” at writing, since that’d be evidence that your brain is conforming your thoughts to the language, instead of conforming your language to your thoughts. English is just a really poor medium for thought (at least compared to e.g. visuals and pre-word intuitive representations), so it’s potentially dangerous to care overmuch about it.

- ^

Btw, Aaron is another person-recommendation. He’s awesome. Has really strong self-insight, goodness-of-heart, creativity. (Twitter profile, blog+podcast, EAF, links.) I haven’t personally learned a whole bunch from him yet,[2] but I expect if he continues being what he is, he’ll produce lots of cool stuff which I’ll learn from later.

- ^

Edit: I now recall that I’ve learned from him: screwworms (important), and the ubiquity of left-handed chirality in nature (mildly important). He also caused me to look into two-envelopes paradox, which was usefwl for me.

Although I later learned about screwworms from Kevin Esvelt at 80kh podcast, so I would’ve learned it anyway. And I also later learned about left-handed chirality from Steve Mould on YT, but I may not have reflected on it as much.

- ^

I did nearly this in ~2015. I made a folder with pictures of inspiring people (it had Eliezer Yudkowsky, Brian Tomasik, David Pearce, Grigori Perelman, Feynman, more idr), and used it as my desktop background or screensaver or both (idr).

I say this because I am surprised at how much our thoughts/actions have converged, and wish to highlight examples that demonstrate this. And I wish to communicate that because basically senpai notice me. kya.

I wrote the entry in the context of the question “how can I gain the effectiveness-benefits of confidence and extreme ambition, without distorting my world-model/expectations?”

I had recently been discovering abstract arguments that seemed to strongly suggest it would be most altruistic/effective for me to pursue extremely ambitious projects; both because 1) the low-likelihood high-payoff quadrant had highest expected utility, but also because 2) the likelihood of success for extremely ambitious projects seemed higher than I thought. (Plus some other reasons.) I figured that I needn’t feel confident about success in order to feel confident about the approach.

This exact thought, from my diary in ~June 2022: “I advocate keeping a clear separation between how confident you are that your plan will work with how confident you are that pursuing the plan is optimal.”

I think perhaps I don’t fully advocate alieving that your plan is more likely to work than you actually believe it is. Or, at least, I advocate some of that on the margin, but mostly I just advocate keeping a clear separation between how confident you are that your plan will work with how confident you are that pursuing the plan is optimal. As long as you tune yourself to be inspired by working on the optimal, you can be more ambitious and less risk-averse.

Unfortunately, if you look like you’re confidently pursuing a plan (because you think it’s optimal, but your reasons are not immediately observable), other people will often mistake that for confidence-in-results and perhaps conclude that you’re epistemically crazy. So it’s nearly always socially safer to tune yourself to confidence-in-results lest you risk being misunderstood and laughed at.

You also don’t want to be so confident that what you’re doing is optimal that you’re unable to change your path when new evidence comes in. Your first plan is unlikely to be the best you can do, and you can only find the best you can do by trying many different things and iterating. Confidence can be an obstacle to change.

On the other hand, lack of confidence can also be an obstacle to change. If you’re not confident that you can do better than you’re currently doing, then you’ll have a hard time motivating yourself to find alternatives. Underconfidence is probably underappreciated as a source of bias due to social humility being such a virtue.

To use myself as an example (although I didn’t intend for this to be about me rather than about my general take on mindset): I feel pretty good about the ideas I’ve come up with so far. So now I have a choice to make: 1) I could think that the ideas are so good that I should just focus on building and clarifying them, or 2) I could use the ideas as evidence that I’m able to produce even better ideas if I keep searching. I’m aiming for the latter, and I hold my current best ideas in contempt because I’m still stuck with them. In some sense, confidence makes it easier to Actually Change My Mind.

I guess the recipe I might be advocating is:

1. separate between confidence-in-results and confidence-in-optimality

2. try to hold accurate and precise beliefs about the results of your plans/ideas, mistakes here are costly

3. try to alieve that you’re able to produce more optimal ideas/plans than the ones you already have, mistakes here are less costly, and gains in positive alief are much higherI’m going to call this the Way of Aisi because it reminds me of an old friend who just did everything better than everyone else (including himself) because he had faith in himself. :p

reflections on smileys and how to make society’s interpretive priors more charitable

Oh cool. Another way of embedding higher dimensions in 2D. Edges don’t have to visually line up as long as you label them. And if some dimension (eg ‘z’) is very rarely used, it takes up much less cognitive space compared to if you tried to represent it on equal terms as all other dimensions (eg as in a spatial visualisation). Not sure what I’ll use it for yet tho.

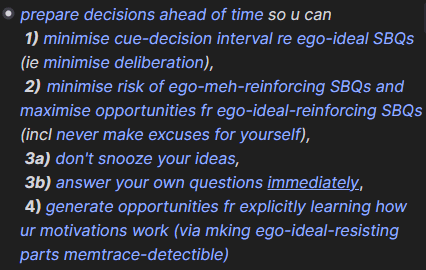

personally, I try to “prepare decisions ahead of time”. so if I end up in situation where I spend more than 10s actively prioritizing the next thing to do, smth went wrong upstream. (prev statement is exaggeration, but it’s in the direction of what I aspire to lurn)

as an example, here’s how I’ve summarized the above principle to myself in my notes:

(note: these titles is v likely cause misunderstanding if u don’t already know what I mean by them; I try avoid optimizing my notes for others’ viewing, so I’ll never bother caveating to myself what I’ll remember anyway)I bascly want to batch process my high-level prioritization, bc I notice that I’m v bad at bird-level perspective when I’m deep in the weeds of some particular project/idea. when I’m doing smth w many potential rabbit-holes (eg programming/design), I set a timer (~35m, but varies) for forcing myself to step back and reflect on what I’m doing (atm, I do this less than once a week; but I do an alternative which takes longer to explain).

I’m prob wasting 95% of my time on unnecessary rabbit-holes that cud be obviated if only I’d spent more Manual Effort ahead of time. there’s ~always a shorter path to my target, and it’s easier to spot from a higher vantage-point/perspective.

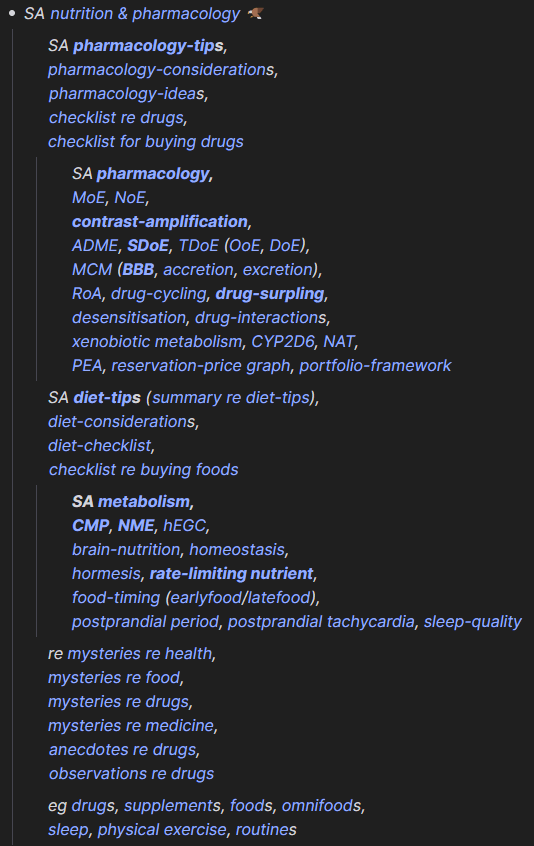

as for figuring out what and how to distill…

Context-Logistics Framework

one of my project-aspirations is to make a “context-logistics framework” for ensuring that the right tidbits of information (eg excerpts fm my knowledge-network) pop up precisely in the context where I’m most likely to find use for it.

this can be based on eg window titles

eg auto-load my checklist for buying drugs when I visit iherb.com, and display it on my side-monitor

or it can be a script which runs on every detected context-switch

eg ask GPT-vision to summarize what it looks like I’m trying to achieve based on screenshot-context, and then ask it to fetch relevant entries from my notes, or provide a list of nonobvious concrete tips ppl in my situation tend to be unaware of

prob not worth the effort if using GPT-4 tho, way too verbose and unable to say “I’ve got nothing”

a concrete use-case for smth-like-this is to display all available keyboard-shortcuts filtered by current context, which updates based on every key I’m holding (or key-history, if including chords).

I’ve looked for but not found any adequate app (or vscode extension) for this.

in my proof-of-concept AHK script, this infobox appears bottom-right of my monitor when I hold CapsLock for longer than 350ms:

my motivation for wanting smth-like-this is j observing that looking things up (even w a highly-distilled network of notes) and writing things in takes way too long, so I end up j using my brain instead (this is good exercise, but I want to free up mental capacity & motivation for other things).

Prophylactic Scope-Abstraction

the ~most important Manual Cognitive Algorithm (MCA) I use is:

Prophylactic Scope-Abstraction:

WHEN I see an interesting pattern/function,

THEN:try to imagine several specific contexts in which recalling the pattern could be usefwl

spot similarities and understand the minimal shared essence that unites the contexts

eg sorta like a minimal Markov blanket over the variables in context-space which are necessary for defining the contexts? or their list of shared dependencies? the overlap of their preimages?

express that minimal shared essence in abstract/generalized terms

then use that (and variations thereof) as u’s note title, or spaced repetition, or j say it out loud a few times

this happens to be exactly the process I used to generate the term “prophylactic scope-abstraction” in the first place.

other examples of abstracted scopes for interesting patterns:

> “I want to think of this concept whenever I’m trying to balance a portfolio of resources/expenditures, over which I have varying diminishing marginal returns; especially if they have threshold-effects.”

this enabled me to think in terms of “portfolio-management” more generally, and spot Giffen-effects in my own motivations/life, eg:

”when the energetic cost of leisure goes up, I end up doing more of it”patterns are always simpler than they appear.

> “I want to think of this concept whenever I see a multidimensional distribution/list sorted according to an aggregate dimension (eg avg, sum, prod) or when I see an aggregate sorting-mechanism over the same domain.”

it’s important bc the brain doesn’t automatically do this unless trained. and the only way interesting patterns can be usefwl, is if they are used; and while trying to mk novel epistemic contributions, that implies u need hook patterns into contexts they haven’t been used in bfr. I didn’t anticipate that this was gonna be my ~most important MCA when I initially started adopting it, but one year into it, I’ve seen it work too many times to ignore.

notice that the cost of this technique is upfront effort (hence “prophylactic”), which explains why the brain doesn’t do it automatically.

examples of distilled notes

some examples of how I write distilled notes to myself:

(note: I’m not expecting any of this to be understood, I j think it’s more effective communication to just show the practical manifestations of my way-of-doing-things, instead of words-words-words-ing.)

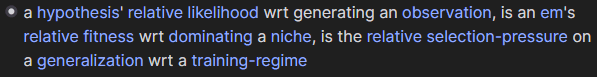

I also write statements I think are currently wrong into my net, eg bc that’s the most efficient way of storing the current state of my confusion. in this note, I’ve yet to find the precise way to synthesize the ideas, but I know a way must exist:

Still the only anime with what at least half-passes for a good ending. Food for thought, thanks! 👍

I’ve been exploring evolutionary metaphors to ML, so here’s a toy metaphor for RLHF: recessive persistence. (Still just trying to learn both fields, however.)

“Since loss-of-function mutations tend to be recessive (given that dominant mutations of this type generally prevent the organism from reproducing and thereby passing the gene on to the next generation), the result of any cross between the two populations will be fitter than the parent.” (k)

Related:

Recessive alleles persists due to overdominance letting detrimental alleles hitchhike on fitness-enhancing dominant counterpart. The detrimental effects on fitness only show up when two recessive alleles inhabit the same locus, which can be rare enough that the dominant allele still causes the pair to be selected for in a stable equilibrium.

The metaphor with deception breaks down due to unit of selection. Parts of DNA stuck much closer together than neurons in the brain or parameters in a neural networks. They’re passed down or reinforced in bulk. This is what makes hitchhiking so common in genetic evolution.

(I imagine you can have chunks that are updated together for a while in ML as well, but I expect that to be transient and uncommon. Idk.)

Bonus point: recessive phase shift.

“Allele-frequency change under directional selection favoring (black) a dominant advantageous allele and (red) a recessive advantageous allele.” (source)

In ML:

Generalisable non-memorising patterns start out small/sparse/simple.

Which means that input patterns rarely activate it, because it’s a small target to hit.

But most of the time it is activated, it gets reinforced (at least more reliably than memorised patterns).

So it gradually causes upstream neurons to point to it with greater weight, taking up more of the input range over time. Kinda like a distributed bottleneck.

Some magic exponential thing, and then phase shift!

One way the metaphor partially breaks down because DNA doesn’t have weight decay at all, so it allows for recessive beneficial mutations to very slowly approach fixation.

Eigen’s paradox is one of the most intractable puzzles in the study of the origins of life. It is thought that the error threshold concept described above limits the size of self replicating molecules to perhaps a few hundred digits, yet almost all life on earth requires much longer molecules to encode their genetic information. This problem is handled in living cells by enzymes that repair mutations, allowing the encoding molecules to reach sizes on the order of millions of base pairs. These large molecules must, of course, encode the very enzymes that repair them, and herein lies Eigen’s paradox...

(I’m not making any point, just wanted to point to interesting related thing.)

Seems like Andy Matuschak feels the same way about spaced repetition being a great tool for innovation.

I like the framing. Seems generally usefwl somehow. If you see someone believing something you think is inconsistent, think about how to money-pump them. If you can’t, then are you sure they’re being inconsistent? Of course, there are lots of inconsistent beliefs that you can’t money-pump, but seems usefwl to have a habit of checking. Thanks!

How do you account for the fact that the impact of a particular contribution to object-level alignment research can compound over time?

Let’s say I have a technical alignment idea now that is both hard to learn and very usefwl, such that every recipient of it does alignment research a little more efficiently. But it takes time before that idea disseminates across the community.

At first, only a few people bother to learn it sufficiently to understand that it’s valuable. But every person that does so adds to the total strength of the signal that tells the rest of the community that they should prioritise learning this.

Not sure if this is the right framework, but let’s say that researchers will only bother learning it if the strength of the signal hits their person-specific threshold for prioritising it.

Number of researchers are normally distributed (or something) over threshold height, and the strength of the signal starts out below the peak of the distribution.

Then (under some assumptions about the strength of individual signals and the distribution of threshold height), every learner that adds to the signal will, at first, attract more than one learner that adds to the signal, until the signal passes the peak of the distribution and the idea reaches satiation/fixation in the community.

If something like the above model is correct, then the impact of alignment research plausibly goes down over time.

But the same is true of a lot of time-buying work (like outreach). I don’t know how to balance this, but I am now a little more skeptical of the relative value of buying time.

Importantly, this is not the same as “outreach”. Strong technical alignment ideas are most likely incompatible with almost everyone outside the community, so the idea doesn’t increase the number of people working on alignment.

That’s fair, but sorry[1] I misstated my intended question. I meant that I was under the impression that you didn’t understand the argument, not that you didn’t understand the action they advocated for.

I understand that your post and this post argue for actions that are similar in effect. And your post is definitely relevant to the question I asked in my first comment, so I appreciate you linking it.

- ^

Actually sorry. Asking someone a question that you don’t expect yourself or the person to benefit from is not nice, even if it was just due to careless phrasing. I just wasted your time.

- ^

It’s a reasonable concern to have, but I’ve spoken enough with him to know that he’s not out of touch with reality. I do think he’s out of sync with social reality, however, and as a result I also think this post is badly written and the anecdotes unwisely overemphasized. His willingness to step out of social reality in order to stay grounded with what’s real, however, is exactly one of the main traits that make me hopefwl about him.

I have another friend who’s bipolar and has manic episodes. My ex-step-father also had rapid-cycling BP, so I know a bit about what it looks like when somebody’s manic.[1] They have larger-than-usual gaps in their ability to notice their effects on other people, and it’s obvious in conversation with them. When I was in a 3-person conversation with Johannes, he was highly attuned to the emotions and wellbeing of others, so I have no reason to think he has obvious mania-like blindspots here.

But when you start tuning yourself hard to reality, you usually end up weird in a way that’s distinct from the weirdness associated with mania. Onlookers who don’t know the difference may fail to distinguish the underlying causes, however. (“Weirdness” is a larger cluster than “normality”, but people mostly practice distinguishing between samples of normality, so weirdness all looks the same to them.)

I was also evaluated for it after an outlier depressive episode in 2021, so I got to see the diagnostic process up close. Turns out I just have recurring depressions, and I’m not bipolar.