Just build the good Johns but not the bad Johns.

wassname

This argument is empirical, while the orthogonality hypothesis is merely philosophical, which means this is a stronger argument imo.

But this argument does not imply alignment is easy. It implies that acute goals are easy, while orthogonal goals are hard. Therefore, a player of games agent will be easy to align with power-seeking, but hard to align with banter. A chat agent will be easy to align with banter and hard to align with power-seeking.

We are currently in the chat phase, which seems to imply easier alignment to chatty huggy human values, but we might soon enter the player of long-term games phase. So this argument implies that alignment is currently easier, and if we enter the era of RL long-term planning agents, it will get harder.

Feel free to suggest improvements, it’s just what worked for me, but is limited in format

If you are using llama you can use https://github.com/wassname/prob_jsonformer, or snippets of the code to get probabilities over a selection of tokens
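To make "probabilities over a selection of tokens" concrete, here is a minimal sketch in plain Python. It assumes you already have raw logits for a few candidate tokens. The logit values and the `choice_probs` helper are made up for illustration and are not prob_jsonformer's API. The idea is just to keep the candidates you care about and renormalise with a softmax.

```python
import math


def choice_probs(logits, choices):
    """Softmax restricted to `choices`.

    logits: dict mapping token string -> raw logit (hypothetical values here).
    choices: the tokens we want a probability distribution over.
    """
    selected = {tok: logits[tok] for tok in choices}
    # Subtract the max logit for numerical stability before exponentiating.
    top = max(selected.values())
    exps = {tok: math.exp(value - top) for tok, value in selected.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


# Made-up logits for illustration; "the" is ignored because it is not a choice.
example_logits = {"yes": 2.0, "no": 1.0, "maybe": 0.0, "the": 5.0}
probs = choice_probs(example_logits, ["yes", "no", "maybe"])
print(probs)
```

The result sums to 1 over the chosen tokens only, so it reads as "given that the model picks one of these options, how likely is each".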

That’s true, they are different. But search still provides the closest historical analogue (maybe employees/suppliers provide another). Historical analogues have the benefit of being empirical and grounded, so I prefer them over (or with) pure reasoning or judgement.

When you rephrase this to be about search engines

I think the main reason why we won’t censor search to some abstract conception of “community values” is because users won’t want to rent or purchase search services that are censored to such a broad target

It doesn’t describe reality. Most of us consume search and recommendations that have been censored (e.g. removing porn, piracy, toxicity, racism, taboo politics) in a way that puts cultural values over our preferences or interests.

So perhaps it won’t be true for AI either. At least in the near term, the line between AI and search is blurred, and the same pressures exist on consumers and providers.

A before and after would be even better!

Thanks, but this doesn’t really give insight on whether this is normal or enforceable. So I wanted to point out that we don’t know if it’s enforceable, and have not seen a single legal opinion.

Thanks, I hadn’t seen that, I find it convincing.

He might have returned to work, but agreed to no external comms.

Interesting! For most of us, this is outside our area of competence, so appreciate your input.

Are you familiar with US NDAs? I’m sure there are lots of clauses that have been ruled invalid by case law? In many cases, non-lawyers have no idea about these, so you might be able to make a difference with very little effort. There is also the possibility that valuable OpenAI shares could be rescued?

If you haven’t seen it, check out this thread where one of the OpenAI leavers did not sign the gag order.

It could just be because it reaches a strong conclusion on anecdotal/clustered evidence (e.g. it might say more about her friend group than anything else). Along with claims to being better calibrated for weak reasons—which could be true, but seems not very epistemically humble.

Full disclosure I downvoted karma, because I don’t think it should be top reply, but I did not agree or disagree.

But Jen seems cool, I like weird takes, and downvotes are not a big deal—just a part of a healthy contentious discussion.

Notably, there are some lawyers here on LessWrong who might help (possibly even for the lols, you never know). And you can look at case law and guidance to see if clauses are actually enforceable or not (many are not). To anyone reading, here’s habryka doing just that

One is the change to the charter to allow the company to work with the military.

https://news.ycombinator.com/item?id=39020778

I think the board must be thinking about how to get some independence from Microsoft, and there are not many entities who can counterbalance one of the biggest companies in the world. The government’s intelligence and defence industries are some of them (as are Google, Meta, Apple, etc). But that move would require secrecy, both to stop nationalistic race conditions, and by contract, and to avoid a backlash.

EDIT: I’m getting a few disagrees, would someone mind explaining why they disagree with these wild speculations?

Here’s something I’ve been pondering.

Hypothesis: if transformers have internal concepts, and they are represented in the residual stream, then because we have access to 100% of the information, it should be possible for a non-linear probe to get 100% out-of-distribution accuracy. 100% is important because we care about how a thing like value learning will generalise OOD.

And yet we don’t get 100% (in fact most metrics are much easier than what we care about, being in-distribution or on careful setups). What is wrong with the assumptions of this hypothesis, do you think?

better calibrated than any of these opinions, because most of them don’t seem to focus very much on “hedging” or “thoughtful doubting”

new observations > new thoughts when it comes to calibrating yourself.

The best calibrated people are people who get lots of interaction with the real world, not those who think a lot or have a complicated inner model. Tetlock’s superforecasters were gamblers and weathermen.

I think this only holds if fine tunes are composable, which as far as I can tell they aren’t

Anecdotally, a lot of people are using mergekit to combine fine tunes

it feels less surgical than a single direction everywhere

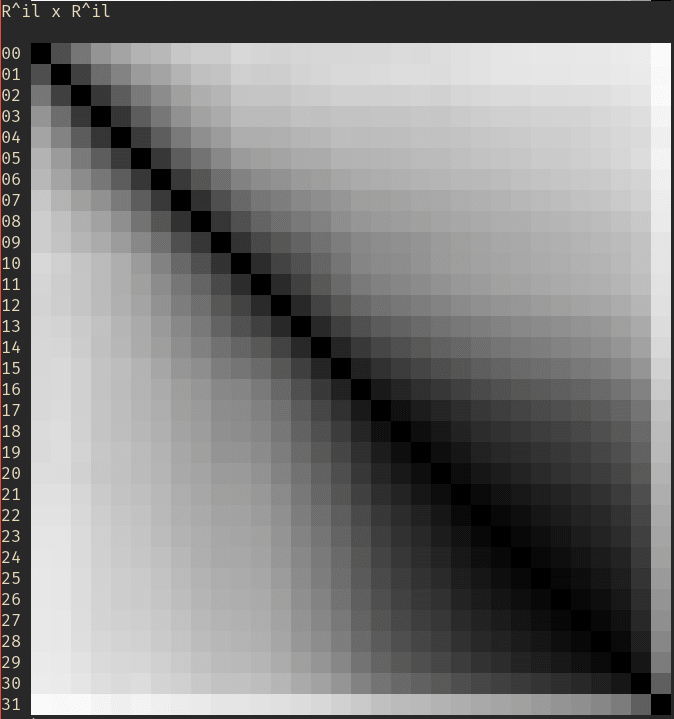

Agreed, it seems less elegant. But one guy on Hugging Face did a rough plot of the cross-correlation, and it seems to show that the direction changes with layer https://huggingface.co/posts/Undi95/318385306588047#663744f79522541bd971c919. Although perhaps we are missing something.

Note that you can just do torch.save(model.state_dict(), FILE_PATH) as with any PyTorch model.

omg, I totally missed that, thanks. Let me know if I missed anything else, I just want to learn.
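For anyone else following along, here is a minimal sketch of the save and reload round trip. Note that `torch.save` takes the object first and the file path second. The tiny `nn.Linear` model and the temporary file name are stand-ins for illustration, not the model from the gist.

```python
import os
import tempfile

import torch
from torch import nn

# Stand-in model; any nn.Module works the same way.
model = nn.Linear(4, 2)

with tempfile.TemporaryDirectory() as tmp:
    file_path = os.path.join(tmp, "model.pt")  # hypothetical file name

    # Object first, then the path.
    torch.save(model.state_dict(), file_path)

    # Reload into a fresh module with the same architecture.
    restored = nn.Linear(4, 2)
    restored.load_state_dict(torch.load(file_path, map_location="cpu"))

# Check that every saved tensor came back unchanged.
same = all(
    torch.equal(a, b)
    for a, b in zip(model.state_dict().values(), restored.state_dict().values())
)
print(same)
```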

The older versions of the gist are in TransformerLens, if anyone wants those versions. In those the interventions work better since you can target resid_pre, resid_mid, etc.

Have you considered emphasizing this part of your position:

“We want to shut down AGI research including governments, military, and spies in all countries”.

I think this is an important point that is missed in current regulation, which focuses on slowing down only the private sector. It’s hard to achieve because policymakers often favor their own institutions, but it’s absolutely needed, so it needs to be said early and often. This will actually win you points with the many people who are cynical of the institutions, who are not just libertarians, but a growing portion of the public.

I don’t think anyone is saying this, but it fits your honest and confronting communication strategy.