Also, there’s now a second detected human case, this one in Michigan instead of Texas.

Both had a surprising-to-me “pinkeye” symptom profile. Weird!

The dairy worker in Michigan had various “compartments” tested, and their nasal compartment came back negative, as did the people they lived with. Hopeful?

Apparently and also hopefully this virus is NOT freakishly good at infecting humans and also weirdly many other animals (like covid was with human ACE2, in precisely the ways people have talked about when discussing gain-of-function in years prior to covid).

If we’re being foolishly mechanical in our inferences, “n=2 with 2 survivors” could get rule-of-succession treatment. In that case we pseudocount 1 for each category of interest (hence if n=0 we say 50% survival chance based on nothing but pseudocounts), and now we have 3 survivors (2 real) versus 1 dead (0 real) and guess that the worst the mortality rate here would be maybe 1⁄4 == 25% (?? (as an ass number)), which is pleasantly lower than overall observed base rates for avian flu mortality in humans! :-)
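For anyone who wants to check the pseudocount arithmetic, here is a minimal sketch of the rule of succession as used above. The function name `rule_of_succession` is just a label I made up for illustration, and this is the plain "add one pseudocount per category" version, not a serious epidemiological estimate.

```python
from fractions import Fraction


def rule_of_succession(successes: int, trials: int) -> Fraction:
    """Laplace's rule of succession for two categories.

    Adds one pseudocount to each category (success and failure),
    so the estimate is (successes + 1) / (trials + 2).
    """
    return Fraction(successes + 1, trials + 2)


# With no data at all, the pseudocounts alone give 50% survival.
prior_survival = rule_of_succession(0, 0)

# n=2 detected human cases, both survived: 3 survivors vs 1 dead after pseudocounts.
survival = rule_of_succession(2, 2)
mortality = 1 - survival

print(prior_survival, survival, mortality)
```

Using `Fraction` keeps the numbers exact, so the 3-versus-1 pseudocounted split shows up directly as 3/4 survival and 1/4 mortality.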

Naive impressions: a natural virus, with pretty clear reservoirs (first birds and now dairy cows), on the maybe slightly less bad side of “potentially killing millions of people”?

I haven’t heard anything about sequencing yet (hopefully in a BSL4 (or homebrew BSL5, even though official BSL5s don’t exist yet), but presumably they might not bother to treat this as super dangerous by default until they verify that it is positively safe) but I also haven’t personally looked for sequencing work on this new thing.

When people did very dangerous Gain-of-Function research with a cousin of this, in ferrets, over 10 years ago (causing a great uproar among some) the supporters argued that it was worth creating especially horrible diseases on purpose in labs in order to see the details, like a bunch of geeks who would Be As Gods And Know Good From Evil… and they confirmed back then that a handful of mutations separated “what we should properly fear” from “stuff that was ambient”.

> Four amino acid substitutions in the host receptor-binding protein hemagglutinin, and one in the polymerase complex protein basic polymerase 2, were consistently present in airborne-transmitted viruses.

(same source)

It seems silly to ignore this, and let that hilariously imprudent research of old go to waste? :-)

> The transmissible viruses were sensitive to the antiviral drug oseltamivir and reacted well with antisera raised against H5 influenza vaccine strains.

(still the same source)

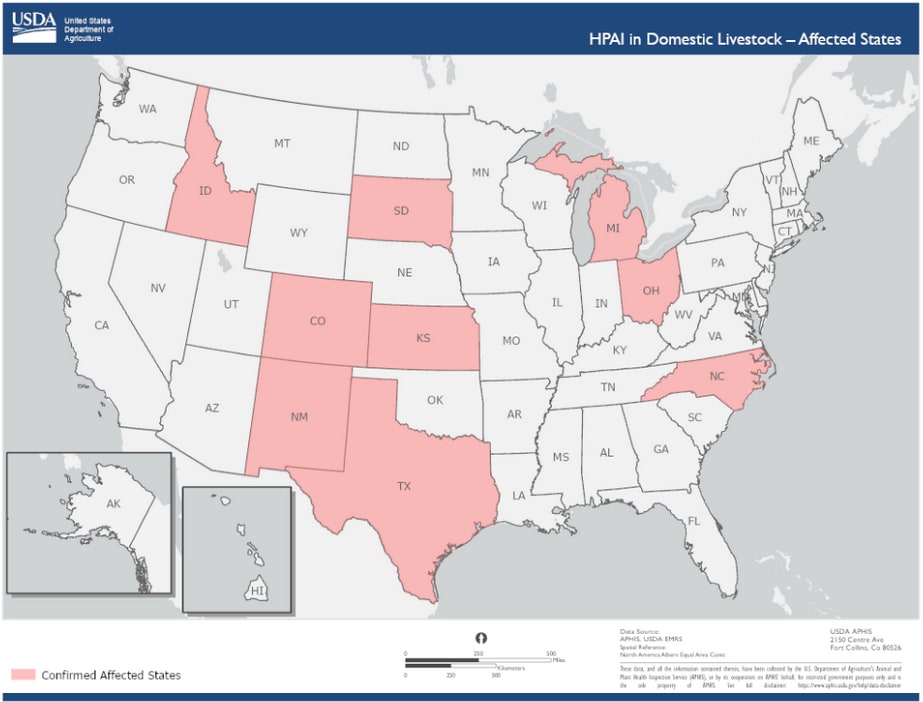

(Image sauce.)

Since some random scientists playing with equipment bought using taxpayer money already took the crazy risks back then, it would be silly to now ignore the information they bought so dearly (with such large and negative EV) <3

To be clear, that drug worked against something that might not even be the same thing.

All biological STEM stuff is a crapshoot. Lots and lots of stamp-collecting. Lots of guess and check. Lots of “the closest example we think we know might work like X” reasoning. Biological systems or techniques can do almost anything physically possible eventually, but each incremental improvement in repeatability (going from having to try 10 million times to get something to happen to having to try 1 million times, or going from having to try 8 times on average to 4 times on average, due to “progress”) is kinda “as difficult as the previous increment in progress that made things an order of magnitude more repeatable”.

The new flu just went from 1 to 2. I hope it never gets to 4.

I feel like you’re saying “safety research” when the examples of what corporations centrally want is “reliable control over their slaves”… that is to say, they want “alignment” and “corrigibility” research.

This has been my central beef for a long time.

Eliezer’s old Friendliness proposals were at least AIMED at the right thing (a morally praiseworthy vision of humanistic flourishing) and CEV is more explicitly trying for something like this, again, in a way that mostly just tweaks the specification (because Eliezer stopped believing that his earliest plans would “do what they said on the tin they were aimed at” and started over).

If an academic is working on AI, and they aren’t working on Friendliness, and aren’t working on CEV, and it isn’t “alignment to benevolence” or making “corrigibly seeking humanistic flourishing for all”… I don’t understand why it deserves applause lights.

(EDITED TO ADD: exploring the links more, I see “benevolent game theory, algorithmic foundations of human rights” as topics you raise. This stuff seems good! Maybe this is the stuff you’re trying to sneak into getting more eyeballs via some rhetorical strategy that makes sense in your target audience?)

“The alignment problem” (without extra qualifications) is an academic framing that could easily fit in a grant proposal by an academic researcher to get funding from a slave company to make better slaves. “Alignment IS capabilities research”.

Similarly, there’s a very easy way to be “safe” from skynet: don’t build skynet!

I wouldn’t call a gymnastics curriculum that focused on doing flips while you pick up pennies in front of a bulldozer “learning to be safe”. Similarly, here, it seems like there’s some insane culture somewhere that you’re speaking to whose words are just systematically confused (or intentionally confusing).

Can you explain why you’re even bothering to use the euphemism of “Safety” Research? How does it ever get off the ground of “the words being used denote what naive people would think those words mean” in any way that ever gets past “research on how to put an end to all AI capabilities research in general, by all state actors, and all corporations, and everyone (until such time as non-safety research, aimed at actually good outcomes (instead of just marginally less bad outcomes from current AI) has clearly succeeded as a more important and better and more funding-worthy target)”? What does “Safety Research” even mean if it isn’t inclusive of safety from the largest potential risks?